Opinion: Nvidia's Max-Q — Maximum efficiency, minimum performance?

Prolific and unapologetically honest computer-enthusiast Christian Reverand (a.k.a. D2Ultima) and editor Douglas Black write about the facts behind the marketing hype of Nvidia's Max-Q initiative.

Change Log:

5/7/17 - Original version published

9/7/17 - Article reworked

12/7/17 - Added TDP/clock efficiency graph of GTX 1070 (notebook) in "An alternative method?" section

17/7/17 - Changed reference to the Max-Q cards as cards "configured with Max-Q", added notes of clarification for performance, pricing, and voltage/frequency curve

Note: We would like to state that we have no problems with users who want thinner notebooks or thinner notebooks in themselves once they are engineered and cooled properly; indeed, we think such efficiency targets are impressive. Instead, our issues are with the way Max-Q has been handled in terms of marketing, price-to-performance ratio, and that we speculate a better direction could have been taken to achieve the same end for the consumer.

Additionally, many in the community who know me believe that my article has been censored. A lot was removed from the first publication, but it has since been reworked to be more detailed and to support my statements with facts. I would like to state that nothing written in this article is here without my OK, and nothing was removed or changed that I did not directly agree to. This is what I wanted to say, and for anything changed I have found or been given good reason for the change - D2ultima

Christian Reverand

I've been in the enthusiast notebook community since 2009, getting heavily into it around 2011. Computers, technology, gaming and livestreaming are my passions, and I always strive to improve what I know and correct myself on what I don't. I'm a fair person all around, and I always praise what I see as good and call out what I see as bad; credit where it's due and none where it's not.

A glimmer of hope

When Pascal for mobile dropped in August of 2016, much ado was made about how "mobile" GPUs were dead. This was because, for the first time in the company’s history, Nvidia had dropped the "M" suffix from their mobile GPUs to highlight the fact that the specifications of their mobile cards were equal to those of their desktop counterparts: a GTX 1080 was a GTX 1080 regardless of where you found it. It was a striking move in marketing (and engineering) that made it appear that the days of the desktop GPU being unrivaled were fading away; we thought we were on the verge of a new era of notebook computers that could compete with (or even exceed) their desktop cousins! And then came Max-Q.

At Computex this year, Nvidia released a variant line of Pascal mobile GPUs, called the “Max-Q” cards. In their presentation, Nvidia posted a lot of graphs claiming they found the optimal part of the curve where performance meets power efficiency, and as a result of focusing on efficiency per watt, it allows ODMs (Original Design Manufacturers, the people that OEMs like Alienware and iBuyPower purchase their notebooks from before rebranding to be “Alienwares” or “Chimeras”) to produce very thin machines with essentially a negligible loss of performance compared to their thicker notebook brethren. These Max-Q notebooks will, of course, lack some features such as multiple storage slots and removable RAM sticks — but such sacrifices are inevitable when you are getting the performance of a GTX 1080 in an incredibly slim package such as the Asus ROG Zephyrus, and most users have no qualms with the tradeoffs. So what’s the issue at hand? The issue is that you’re paying for a GTX 1080 but not getting the performance of a GTX 1080.

New name, same game?

First, a little history on the last generations of Nvidia notebook GPUs that had an “M” suffix: The GTX 680M was a desktop GTX 670, core for core with the same memory bus width, but heavily underclocked, and with low voltage memory. The GTX 680MX, 780M, and 880M cards were full-cored GK104 like the 680/770 cards, just downclocked. The 970M was a 960 OEM variant with a downclock (as you can see with the link provided, this 960 OEM variant had 1280 cores and a 192-bit memory bus and was GM204, not GM206, and held 3GB or very rarely 6GB of vRAM just like the 970M did). The 580M and 675M were full-cored GF114 like the 560Ti, only with a downclock. The GTX 860M (Maxwell; there is a Kepler variant that I am specifically not referring to) and 960M cards were GTX 750Ti cards. The 965M GM206 was a downclocked GTX 960, so on and so forth; there are more I have not listed. The exact naming scheme between desktop card and laptop card was different, but mobile cards having the same “basic” specifications as desktop cards is not new.

But, with the launch of the GTX 1000 (a.k.a Pascal) series for notebooks as mentioned above, Nvidia sought to provide similar performance to the desktop cards (within 10 percent, to be specific, as these articles from Eurogamer and Ars Technica quote). While Nvidia’s lofty claims were not always attained, they certainly did attain them more than enough to be credible. Clockspeeds were raised significantly compared to previous generations of notebook GPUs, and even though the “base” clock speeds are still lower on 1000 (notebook) cards compared to 1000 (desktop) cards, the boost speeds match their desktop counterparts (my 1080 (notebook) for example will happily boost to 1911MHz if I keep it cool enough, without overclocking, despite being rated for only 1771MHz boost).

Nvidia's GPUs designed with Max-Q, however, have thrown that out of the window — and as far as we are able to tell, Nvidia have not changed their prices to suit. Recently, YouTube channel Digital Foundry provided an excellent video testing the newly launched ASUS Zephyrus notebook and its GTX 1080 (notebook) Max-Q against 1080 (desktop) and 1070 (desktop) cards. Their tests confirmed what I had predicted when I first heard about these cards: the 1080 (notebook) Max-Q now functions like a 1070 (notebook) does in a proper, well-cooled machine like a Clevo P650HS or a MSI GT62VR. The kicker is that Nvidia’s 1080 (notebook) Max-Q card is priced the same as a normal GTX 1080 (notebook) while only delivering the performance of a 1070 card.

While the Max-Q cards DO contain more “complete” matches in specifications (specifically the VRAM types and clockspeeds, as well as no realistic slider limit for overclocking) and as such are not exactly the same as the previous “M” line of GPUs from Nvidia, the bottom line is this: The MAX-Q performance reduction is reminiscent of Nvidia’s previous mobile cards that matched the basic specifications of desktop cards (as I listed many examples of above) before Pascal created relative equality between mobile and desktop form-factors in terms of performance and overclocking allowance (I say allowance because, while the slider is unlocked, power limits are still very low on Max-Q, and overvolting cannot be performed on stock Pascal Notebook cards; since every single mobile Pascal card is power-limited, especially at 4K where more power is drawn even at lower framerates, overclocking is not nearly the same experience as on the desktop cards).

But it isn’t the performance I primarily have issue with; it’s the marketing. Nvidia claims on their website that the 1080 (notebook) Max-Q is capable of providing 3.3x the performance of a high-end 2014 laptop with a GTX 880M. So far, no 1080 (notebook) Max-Q laptop tested has achieved this level of performance in benchmarks such as 3DMark’s Firestrike (referenced in the table below). Nvidia also has not made it abundantly clear, in my opinion, that the 1080 (notebook) Max-Q cards will not perform close enough to other properly cooled, non-Max-Q 1080 cards, be they desktop or laptop cards, to distinguish them from a 1070. In fact, graphs such as this one suggest otherwise. This graph, while not including a zeroed start point, clearly has a sharp incline at the beginning, and the end-point where a “small gain” is claimed is not representative of the actual reduction in performance. According to notebookcheck’s standard 1080 (notebook) Max-Q and 1080 (notebook) scores, the Max-Q cards average 20% lower graphics scores than other 1080 (notebook) units in 3DMark 11 Performance, Firestrike, and Time Spy, with reductions as small as 11% (3DMark Firestrike graphics, ASUS Zephyrus compared to Eurocom Tornado F5 Killer Edition) and as large as 26% (3DMark Firestrike graphics, notebookcheck standard 1080 (notebook) Max-Q compared to Asus G701VIK-BA049T). Looking at Nvidia's graph, it appears as if there is only a marginal or even negligible performance decrease versus the units with higher power limits, but thus far that is proving not to be so. I’m not going to discount the engineering marvels that get such performance into such a tiny form factor without any real operating or overheating issues, but I can’t give the marketing a free pass for that.

The secret ingredient

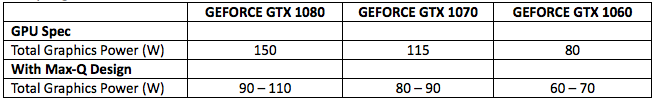

Nvidia is tight-lipped on official details of what Max-Q cards are, but they have mentioned it’s a combination of hardware, software, and laptop design tweaks. Still, we can figure out the basics by looking at the performance and specifications of the cards. Going back to Digital Foundry’s benchmark comparisons between the 1080 (notebook) Max-Q and the 1080 and 1070 (desktop) cards, the reason cards configured with Max-Q perform so badly in comparison is that they have a severely reduced TDP limit (as in how many watts they can pull, not estimated heat output), reduced clockspeeds (both base and boost), and possibly reduced voltage (though this point is a benefit; more on this later) compared to the non-Max-Q 1000 (notebook) series cards.

So what exactly is this TDP power limit? Put simply, it’s the number of watts of power that the GPU is capable of drawing for use. The 1080 (desktop) cards range from 180W (Nvidia reference) to reportedly 300W (EVGA FTW Hybrid) or even no power limit at all (ASUS Strix with the official, publicly-available XOC vBIOS), and 1080 (notebook) cards range from 150W (Razer Blade Pro, Eurocom Tornado F5 Killer Edition) to 220W (Asus ROG G701VIK, G800, GX800), with the majority of 1080 (notebook) cards having limits of around 180W (Alienware 17, MSI GT83VR SLI, Acer Predator 17x and 21x SLI, Aorus X7 DT v6, etc). This was, I assume, the greatest hurdle for Max-Q to overcome.

| Specification | Laptop GTX 1080 | GTX 1080 Max-Q | Desktop GTX 1080 Founders Edition |
| --- | --- | --- | --- |
| Base Clock (MHz) | 1556 | 1101 - 1290 | 1607 |
| Boost Clock (MHz) | 1733 | 1278 - 1468 | 1733 |
| TGP (Total Graphics Power, W) | 150 - 200 | 90 - 110 | 180 |

As the table above shows, the 1080 (notebook) Max-Q cards have a power limit range between 90W and 110W, depending on the ODM’s desires. The reason for this is that even if the card is incredibly cool with a well-designed cooling system, dissipating 180W of TDP (heat) is different from dissipating 110W of TDP (heat). Since Max-Q is focusing on machines having a very small form factor and being very quiet (targeting 40dB maximum noise while gaming), power consumption had to be reduced. There is no fighting the laws of thermodynamics, and the cooling systems simply cannot be overloaded if they wish to keep the advertised targets of noise without the notebooks overheating. In addition to this, the base and boost clocks also vary with the ODM, going all the way down to 1101MHz at the base clock in the weakest Max-Q card (also seen in the table above). Now, the problem with shoving a 1080 down to as low as 110W (I’m charitably ignoring the 90W card’s existence to make this point) is that the technology used to get it down there is not anything really special. Dropping clockspeeds vastly improves efficiency, certainly, but so does reducing the voltage used (more on this later).

Note that I am not disregarding the other parts of the package that is “Max-Q” such as the acoustic targeting, but rather simply stating that reducing the power draw of the card by reducing voltages and/or clock speeds is not anything worth writing home about, especially if the cards are not binned (carefully selected) for extra efficiency.
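To put the specification table into proportion, here is a minimal Python sketch that expresses the Max-Q clock and power ranges as a fraction of the standard laptop GTX 1080. The numbers are copied from the table; the dictionary layout and the `ratio_range` helper are hypothetical names used purely for illustration, and rated clocks are only a rough proxy for real-world performance.

```python
# Figures copied from the specification table (MHz for clocks, watts for TGP).
# Each entry is a (lowest, highest) pair; fixed values repeat the same number.
SPECS = {
    "laptop_1080": {"base": (1556, 1556), "boost": (1733, 1733), "tgp": (150, 200)},
    "max_q_1080": {"base": (1101, 1290), "boost": (1278, 1468), "tgp": (90, 110)},
}


def ratio_range(metric, card="max_q_1080", reference="laptop_1080"):
    """Return the (worst, best) ratio of `card` to `reference` for one metric."""
    low_card, high_card = SPECS[card][metric]
    low_ref, high_ref = SPECS[reference][metric]
    # Worst case: weakest card against the strongest reference, and vice versa.
    return low_card / high_ref, high_card / low_ref


for metric in ("base", "boost", "tgp"):
    worst, best = ratio_range(metric)
    print(f"{metric:>5}: {worst:.0%} to {best:.0%} of the standard laptop GTX 1080")
```

Even in the best case, the rated boost clock and the power limit of a Max-Q card stay well below those of the standard laptop part.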

So, what’s the problem with some lower performance in a thin machine? “You always sacrifice some performance for portability”, one might object, and I would usually agree; there is indeed nothing inherently bad about it. The problem is that these cards still command the same prices that their fully-powered brethren do. Now, Nvidia does not sell notebook GPUs to any consumer directly, instead providing OEMs with the tools to implement their cards in their notebook systems, and neither Nvidia nor the OEMs release pricing for their notebook GPUs directly. There are, however, some outlets which sell cards as upgrades for MXM systems. Taking these as a sort of reference point, a GTX 1080 (notebook) can be estimated at roughly US$1200, while a GTX 1070 (notebook) is approximately US$750 by the same method. Exact prices change with each OEM, so this is not a concrete list, but a 1080 (notebook), Max-Q or not, is usually at least US$400 more than a 1070 (notebook) almost everywhere on the market where one can choose between both cards in the same notebook. So, going by the only pricing data an end-user can acquire, we have a roughly US$1200 notebook GPU that functions like a US$380 desktop GPU: the GTX 1070. And this is only considering the Nvidia reference specification clockspeeds and cooling design, because AIB-partner GTX 1070 cards like the ASUS Strix or the EVGA FTW editions are not much more expensive than reference ones, and they easily surpass the reference card’s performance by a nice margin. Below is a table comparing Futuremark benchmark performance of GTX 1080s and 1070s across both desktops and notebooks:

Now, it has been put to me that comparing a desktop GPU to a notebook GPU is a bad idea because, as Nvidia says, laptops are an integrated system, especially now that no more reference GeForce MXM-B designs are being put forth and since Intel’s push in 2014 for all mobile CPUs to be soldered. But I still would like to bring up the pricing difference in general, because there is a consistent trend of notebook parts costing significantly more than desktop parts while being incomparably worse. This article’s point is to focus on the pricing of the 1080 (notebook) Max-Q parts in particular, as it is my personal opinion that there is a limit on how much can be charged for a product with reduced performance. The above paragraph essentially means that even the best 1080 (notebook) Max-Q cards will pale in comparison to a GPU that is a third of their price.
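The "third of its price" figure can be checked with some quick arithmetic. The sketch below uses only the rough price estimates quoted earlier (MXM upgrade listings for the notebook cards, and US$380 for the desktop GTX 1070); the `PRICES_USD` table and `price_ratio` helper are hypothetical names for illustration, and the prices themselves are estimates rather than official figures.

```python
# Rough price estimates quoted in the text, in US dollars.
PRICES_USD = {
    "GTX 1080 (notebook)": 1200,
    "GTX 1070 (notebook)": 750,
    "GTX 1070 (desktop)": 380,
}


def price_ratio(card, reference="GTX 1070 (desktop)"):
    """Return how many times more `card` costs than `reference`."""
    return PRICES_USD[card] / PRICES_USD[reference]


for card in ("GTX 1080 (notebook)", "GTX 1070 (notebook)"):
    print(f"{card}: {price_ratio(card):.1f}x the price of a desktop GTX 1070")
```

By these estimates the notebook GTX 1080 costs a little over three times as much as the desktop card it performs like in Max-Q form.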

On the other hand, overclocking is enabled on the Max-Q models, and tests from at least one user prove there is some improved performance to be gained due to low voltages, but low power limits are still a problem, and it also breaks the “cool and quiet” benefits of the ASUS Zephyrus in particular. I have been informed that Max-Q design has no temperature limit and is designed to operate under 40dB at all times, but some OEMs can implement a feature which ramps up the fans if the GPU crosses a certain temperature threshold, enabled by a certain setting in the software of the laptop. For ASUS’ feature, cards may hit up to 75c, after which the fans will ramp up further to keep the video card cool, breaking the 40dB noise limit. This is also referenced in notebookcheck’s own review of the ASUS Zephyrus.

An alternative method?

So I have some speculations on ways that the 1080 (notebook) Max-Q’s current state could have been achieved without needing to use (and charge for) a 1080. Let’s step back for a moment and take a look at the GTX 1070 (notebook). This card is not the desktop card in notebook form; nay, it is actually a bit better by its specifications: the 1070 (notebook) cards have 2048 cores, as opposed to the 1920 of the 1070 (desktop) cards. At the same time, they have a relatively low power limit (around 115W) and as such, get power-limited very quickly and do not hold high boost clocks when the cards are stressed. As referenced by another excellent video by Digital Foundry, however, the reduced clockspeeds are not of much concern when compared to their desktop brethren because the larger core count renders the effective performance the same, even at low boost speeds. This allows the 1070 (notebook) to generally compete with its desktop counterpart in performance.

If the heat dissipation of 110W of power as a maximum was the aim of Max-Q, then the 115W 1070 (notebook) cards have pretty much already achieved this. In fact, a lot of the more enthusiast users of notebook forums have taken to using MSI Afterburner to reduce the voltage curve of the GPUs (a lot of ASUS forum users in particular), resulting in higher boost clocks and much lower temperatures. Some of the 1070 (notebook) card owners have opted to lock their core clocks to 1750MHz maximum boost and use anywhere between 0.825v and 0.875v for that frequency range. Temperatures are reportedly down and their cards hold the 1750MHz boost frequency almost 24/7 under load — something that did not happen when the card was left stock, due to power, temperature, or voltage regulation throttling. This makes sense since voltage is directly related to both heat and power consumption, and 10-series (notebook) cards generally use up to 1.063v under stock operating conditions (usually sitting around 1.05v on average when stressed). Reducing voltage reduces the power consumption for the same performance as the power limit “stretches” further and the cards can clock higher. The gap in voltage between 0.875v and 1.05v is extremely large and is a testament to the efficiency the existing, non-Max-Q 1000 (notebook) cards are capable of when configured in such a way.
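As a rough illustration of why the undervolting described above helps so much, a common first-order approximation treats dynamic power as proportional to voltage squared times clock frequency. This model is an assumption brought in for illustration only: it ignores leakage, memory, and board power, and it is not something Nvidia or the forum users published. The voltages are the ones quoted above, and `relative_dynamic_power` is a hypothetical helper name.

```python
def relative_dynamic_power(v_new, v_old, f_new=1.0, f_old=1.0):
    """First-order CMOS estimate: dynamic power scales with V^2 * f.

    Returns the new dynamic power as a fraction of the old one.
    """
    return (v_new / v_old) ** 2 * (f_new / f_old)


STOCK_VOLTAGE = 1.05  # typical stock voltage under load, as quoted in the text

for undervolt in (0.875, 0.825):
    fraction = relative_dynamic_power(undervolt, STOCK_VOLTAGE)
    print(f"{undervolt:.3f}v at the same clock: about {fraction:.0%} of stock dynamic power")
```

Under this simplified model, running the same clock at 0.875v instead of 1.05v needs only about 69% of the dynamic power, which fits the reports of cards holding their boost clocks inside the same power limit.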

Please do note, however, that there is no way for an end-user to truly set a specific voltage on any Pascal GPUs, and that there is a downside to this method of voltage curve tweaking. Lowering the voltage also reduces stability, and a balance should be found. Not every user can simply run 1800MHz at 0.8v and call it a day as each card is different, but from all the information I have been able to find, a smarter voltage curve can provide a HUGE benefit to efficiency, reducing temperatures while improving performance. In fact, one particular user has been able to ballpark the 1080 (notebook) Max-Q’s 3DMark Firestrike graphics score from his regular, non-Max-Q 1070 (notebook) card while deliberately using only 100W, simply by using the same voltage reduction method. Another enthusiast was able to get his clockspeeds all the way up to 1974MHz without even crossing 1v, a testament once again to the efficiency that the existing non-Max-Q cards are capable of.

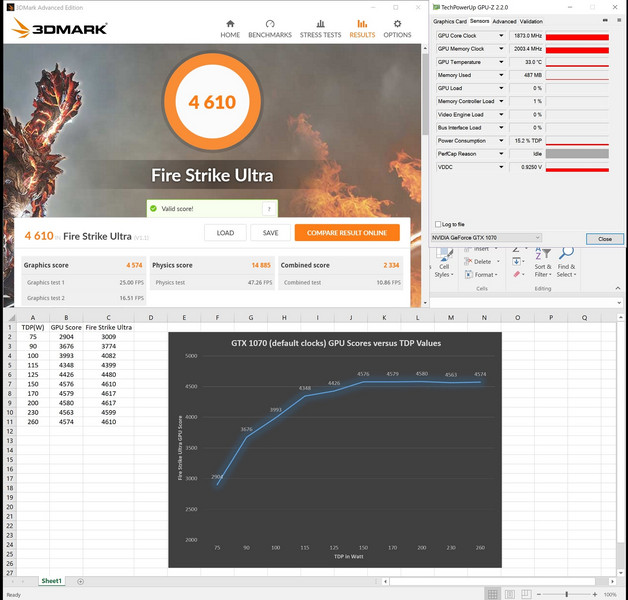

To further test this possibility, a fellow enthusiast ran some tests by locking the clockspeed of his 1070 (notebook) and limiting the power draw in stepped segments. The table below shows that the efficiency breakpoint for the 1070 (notebook) is 110-115W, with an upper limit of 150W for the stock boost frequency (note that it does NOT indicate power drawn per test, just the limit set via software). Therefore, a 1080 (notebook) almost certainly has a higher actual breakpoint than the 110W the Max-Q is capped at, but the 110W breakpoint is actually a perfectly good fit for the 1070 (notebook) with a proper voltage frequency curve.

This suggests that Nvidia's TGPs (tabulated earlier) are not the real points where adding more power provides very little benefit (and certainly not enough to keep performance reduction negligible from a non-Max-Q variant of the card).

So what does this all mean? Well, my speculation is that, if Nvidia wished it, they could create a video BIOS (hereafter referred to as vBIOS) that lowers the default voltage frequency range of the existing cards while keeping the power limits somewhat high, and get the same (or better) result as the 1080 (notebook) Max-Q with a 1070 (notebook) card. Alongside it could come an updated vBIOS for the higher performance notebooks, which as far as I know OEMs should be able to deploy to end-users (like how Alienware deployed the 150W → 180W update for their 1080 (notebook) cards midway through the life cycle of the Alienware 17 R4), improving performance for all users. For Max-Q, this would likely mean a 100W TDP limit, a voltage frequency maximum of about 0.95v, and an ever so slight clockspeed reduction (maybe 100MHz); for the higher performance cards, it would mean a voltage curve that extends further into overclocking frequencies for stability. This would produce a 1070 (notebook) boosting well into the high 1700MHz to low 1800MHz ranges almost constantly (depending on temperature) for Max-Q, and mid-to-high 1800MHz ranges or higher for the Max-P cards, while running significantly cooler for all users. There would be minimal performance differences between stock 1070 (notebook) cards and their Max-Q variants this way, but with better overclocking potential on the so-called Max-P cards. This, I feel, would be more in line with similar pricing, pushing the difference between the card variants to simply be more about the platform solution the end-user wants.

So considering the 1070 (notebook) Max-Q cards in the above scenario would do what the 1080 (notebook) Max-Q cards currently do (or maybe a little bit better, once combined with the other technologies that the Max-Q line of cards employ), this would open the way for similar adjustments to said 1080 (notebook) Max-Q cards, possibly creating one with a 115-125W limit and similarly negligible performance differences from the Max-P variants. The existing prices, while still high in general, would be more justified. And as I see it, the performance differences that existed between stock “M” cards of the past and their desktop counterparts would be completely gone. As far as I could see, everyone would benefit. Maybe some notebooks might need to be a little thicker; 21mm thick or so (a mere 2mm thicker than some current Max-Q laptops) could handle the higher wattage 1080 (notebook) Max-Q cards.

(Proper) thermal engineering

From a consumer and marketing standpoint, what seems impressive about Max-Q is that — power-limited or not — they’ve got a 1080 into such a small form factor without overheating. Let me start off by saying that the engineering achievements to do this are nothing short of phenomenal: the vapor chamber contact points on their heatsinks and other techniques, like the ASUS Zephyrus opening up the bottom when you open the lid to provide more airflow, are fantastic — but it is also what it looks like when an ODM actually really cares about cooling. In my opinion, it’s what high-end Pascal mobile laptops (all of them, thick or thin) should have been engineered with in the very beginning.

Vapor chambers, for example, make a huge difference in the ability of any heatsink to handle heat. They are a special kind of technology for a heatsink that is designed to pull heat away from the contact point as efficiently as possible. To quote Cooler Master:

“Vapor Chambers provide far superior thermal performance than traditional solid metal Heat spreaders at reduced weight and height. Thermal spread resistance is almost neglectable and thermal resistance is x times lower than (refer to copper, carbon nanotube compound, nano diamond compound). Vapor Chambers enable higher component heat densities and TDPs at safe operating temperatures, extending the components and products life.”

Without getting too technical, it essentially makes heatsinks work a lot better because they are capable of transferring a larger amount of heat per surface area. As a result, thermally dense contact points are easily dealt with because the more efficiently heat gets pulled away to be dumped out of your system, the better your cooling is. It is not, however, a one-stop success guarantee: a relatively tiny vapor chamber heatsink with a sub-par cooling system design in the rest of the notebook, like the one in the Razer Blade Pro 1080 (notebook) models, is far inferior to a larger, well-designed, non-vapor-chamber heatsink with a good cooling system design like the ones in MSI’s GT73VR 7RF or the Alienware 17 R4 notebooks.

A downside for ODMs wishing to use a vapor chamber is that the engineering design must be very precise. Improper heatsink contact is not uncommon in laptops, and techniques such as “lapping” (using fine sandpaper to grind down, flatten, and polish a warped or improper heatsink for improved contact and thus heat transfer) are impossible to perform on a vapor chamber: the vapor contact point must be extremely thin and will thus be worn away by lapping, ruining the heatsink. Such precision is usually expensive, and laptops with that level of attention are clearly cream of the crop, but if such impressive and precise cooling technology were applied to the “larger” notebooks with the level of precision and attention that is present in these Max-Q notebooks, there would be size and noise reductions to be had, and likely even performance benefits too. Imagine shaving a solid 5-10mm off of many larger notebooks currently on the market without losing performance at all.

It is difficult for me to understand why Max-Q units have such stringent cooling requirements for reduced performance compared to their non-Max-Q counterparts, yet the rest of the Pascal line does not. Instead, all of the innovation in cooling design is spent trying to push what is essentially an overpriced 1070 (in performance) into very thin notebooks, just with the selling point of being called “GTX 1080”, sort of like a Razer Blade Pro that doesn’t overheat. Further to this, why have the efficiency optimizations not been expanded to the rest of the Pascal notebook line? Even the brand new GeForce MX150 is still using the old paths in the driver optimized for performance instead of efficiency. It’s really a shame for consumers that optimizing a 1070 (and by extension, the rest of the cards) wasn’t the direction Nvidia and the respective ODMs and OEMs pursued. This one I cannot place on Nvidia alone, as they insist that a lot of work was done with each OEM to achieve what is now Max-Q, as seen in the statement below, referenced from another notebookcheck article delving into Max-Q’s whisper mode:

Statement Nvidia, 28.6.2017: "We are not binning. Pricing will depend on the OEM and the design. A laptop is an integrated system and pricing depends on a wide variety of factors. Max-Q is a new approach to building the best thin, light and powerful laptops, and has required extended work from NVIDIA and the OEMs in R&D."

The above statement mentions that they do not bin Max-Q notebook cores for efficiency, which means that the normal cards are used. This further reinforces my speculations about achieving the same result with the 1070 (notebook) cards as laid out above. This is my pro-consumer viewpoint, and I know this. I understand the business aspects of the ordeal, but I just do not agree with the way that the 1080 (notebook) Max-Q is being marketed, and the price it is sold for compared to the performance it brings.

Conclusion: What’s in a name?

All in all, from my perspective this appears to be largely a gimmick for sales to customers — the work for cooling is there, I will never in my life discount this — but why wasn't it there before? Why are the 1080 Max-Q cards still called “GTX 1080” despite every known example performing like a GTX 1070? An average of 1400MHz on a 1080 (notebook) Max-Q is not a “slight” drop in performance for efficiency compared to a properly engineered laptop with a GTX 1080; it is a huge drop, approaching a 30 percent reduction in performance but for the same price! And, more importantly for marketing, for the same name.

The name is so important that it makes or breaks the average consumer’s perception of a card. Take the 780M, for example. It was a fantastic mobile card that overclocked very well — I personally got one running at 1110MHz without any crashing in games (with an unlocked vBIOS that is; voltage control and a +137MHz overclock limit was in place without it). That is a full-boost reference GTX 680, in a laptop! But at the end of the day, it just wasn’t a “780” to the average user, and explaining it is meant to be a reduced-performance 680/770 was usually a waste of time; mentioning overclocking resulted in jokes and memes about fires and ovens.

The 1080 (notebook) cards, however, are exactly what they are named to be. But even the folks at Digital Foundry asked: with such a large drop in performance, should this Max-Q GPU be called a GTX 1080 or a GTX 1070? Now, I do not agree with naming it a 1070; rather, something to the effect of a “1080M” or even “1080MQ” to signify the somewhat drastic performance reduction would be much better. It should be noted that the vBIOS reports all of the Max-Q cards as “<insert name> with Max-Q Design”, as read by GPU-Z and Futuremark, and that the same naming is used on all websites retailing the cards. I personally don’t think this is quite enough at point-of-sale, since the basic name is the same, but I will concede that one cannot be mistaken about which is which.

If Max-Q is considered wholly to be a platform solution then this is even more reason why it should have a more differentiating naming system because it is clearly not meant to be the same as the existing cards. I also feel the price should reflect its performance, as it does not feel right to name and charge for a namesake if the performance is not there. The core count, ROP count, TMU count, memory bus width, memory speed, memory size, memory type — whatever you can think of that isn’t the power limit and clock speeds — are there, but I still cannot justify the price and marketing methods thus far. This is doubly true when you consider that the consumer’s perception that a 10-series notebook card is just like its desktop counterpart has already been solidified this generation. The “GTX 1080 with Max-Q Design” is still a “1080”, and to the mass public, this is unfortunately all they need to hear.

As I’ve laid out, there is at least one clear possible alternative to the direction taken to provide Max-Q cards, and it would have (in my opinion) benefited the end-user more — especially in terms of price. Is the 1080 (notebook) Max-Q really the best solution? That’s up to the end user to decide; I cannot and will not make such a decision for them. But I don’t think it is — at least not for the current price and way it is marketed.

I’ve been in the notebook enthusiast community for a long time and I’ve seen many things come and go. I think we pay more than enough for reduced performance and limited hardware already, and I consider this naming and pricing scheme deceptive, with emphasis on the pricing part. What do you think?