Opinion: It's time we talked about throttling in reviews

The elephant in the room

It's time to talk about the elephant in the room (or laptop chassis): throttling. For many, this editorial has been a long time coming. For these enthusiasts, any laptop that doesn't maintain its stock clocks under load is a hunk of scrap. Others, who just want a machine that works reasonably well and have lowered expectations for mobile computing, may say that expecting such high standards of performance from a thin laptop is foolish. My point isn't about what performance standards we should expect from notebooks in general. It is that there is a level of due diligence reviewers (ourselves included) should rise to in order to prevent consumers from being misled. We reviewers need to test these machines sufficiently and present the data so that consumers can make properly informed purchases.

Defining "throttling"

Before we get into what throttling is, we need to establish something: all Intel CPUs, desktop or laptop, are designed to hit their full Turbo Boost clock once there is sufficient load on the processor (though in practice this depends on thermal limits). The actual Turbo Boost multiplier usually varies with the nature of the load. For example, the i7-6700HQ has a base clock of 2.6GHz and a rated boost clock of 3.5GHz. But this 3.5GHz applies only when a single core is being stressed, so it is irrelevant for most multi-threaded workloads. With two or three cores loaded, the Turbo Boost clock drops to 3.3GHz; with all four cores loaded, it drops to 3.1GHz. Since a lot of games use four cores (and any DirectX 11 title on an Nvidia GPU forces CPU load onto four cores, with varying degrees of efficiency), your average Turbo Boost clock will be 3.1GHz in many sustained-load scenarios, though lighter tasks often make use of the higher single-core frequency. Unfortunately, laptop specifications and marketing always advertise the maximum Turbo Boost frequency, even though many machines are not engineered to maintain (or sometimes even hit) it in practice.
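
The i7-6700HQ figures above amount to a small lookup keyed by the number of loaded cores. The minimal Python sketch below holds only the bins quoted in this paragraph; the function name `expected_turbo_ghz` is hypothetical.

```python
# Turbo Boost ceilings for the i7-6700HQ as quoted above,
# keyed by the number of actively loaded cores.
I7_6700HQ_TURBO_GHZ = {1: 3.5, 2: 3.3, 3: 3.3, 4: 3.1}
I7_6700HQ_BASE_GHZ = 2.6


def expected_turbo_ghz(active_cores: int) -> float:
    """Return the highest clock the CPU is rated to boost to for a given core load."""
    if active_cores not in I7_6700HQ_TURBO_GHZ:
        raise ValueError(f"the i7-6700HQ has 4 cores, got {active_cores}")
    return I7_6700HQ_TURBO_GHZ[active_cores]


# A four-core game load should sit at 3.1 GHz, not the advertised 3.5 GHz.
print(expected_turbo_ghz(4))  # 3.1
print(expected_turbo_ghz(1))  # 3.5
```

Under this reading, the yardstick for a sustained four-core load is 3.1GHz, not the 3.5GHz printed on the spec sheet.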

I've established that a CPU should hold its Turbo Boost clock under load, and that failing to do so counts as throttling. But what is throttling, exactly? As you might have guessed, it is the intentional reduction of a processor's speed due to some limiting factor in the system (generally power, current, or, most commonly, temperature). Note that a CPU also reduces its clock speed at idle, but neither the system nor I consider this throttling: while the clocks indeed drop at idle, the CPU does not raise a throttling flag in this state (you can check this using Intel's own XTU or one of many other CPU monitoring utilities). The throttling seen under sustained loads is generally thermal, but some laptops, such as Dell's XPS 15 9560 and its business workstation equivalent, the Precision 5520, are known to experience power limit throttling, likely as a result of insufficient cooling of their voltage regulator modules (VRMs).
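
The distinction drawn here is that a lower clock only counts as throttling when the CPU reports a limit. It can be sketched as a small classifier. The `CpuSample` fields are hypothetical stand-ins for the kind of flags a monitoring tool such as XTU exposes, not that tool's actual data format.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CpuSample:
    """One monitoring snapshot. Field names are hypothetical, not a real tool's schema."""
    clock_ghz: float
    thermal_limit: bool = False
    power_limit: bool = False
    current_limit: bool = False


def throttle_reason(sample: CpuSample) -> Optional[str]:
    """Return the limiting factor behind a clock reduction, or None if not throttling.

    The clock alone does not decide the result: a low clock with no limit
    flag set (for example, at idle) is not treated as throttling.
    """
    # Thermal is checked first because it is the most common cause under
    # sustained load; the order only matters when several flags are set.
    if sample.thermal_limit:
        return "thermal"
    if sample.power_limit:
        return "power"
    if sample.current_limit:
        return "current"
    return None


print(throttle_reason(CpuSample(clock_ghz=0.8)))                    # None (idle)
print(throttle_reason(CpuSample(clock_ghz=2.2, power_limit=True)))  # power
```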

Case studies

I've been thinking about writing an editorial like this for a while, but it was our recent Surface Pro review and subsequent news piece that finally moved me to action. This isn't about the Surface Pro itself; there have been many, many laptops over the years that suffered significant throttling that many reviewers failed to mention. Most reviewers don't do sustained load testing, and even fewer do sustained testing with a mixed CPU and GPU load, so it's no wonder the media don't often touch on this issue. This section introduces just a few recent examples to make my point that we need to test these laptops more rigorously, now more than ever.

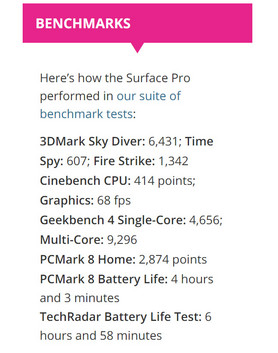

Like many reviewers, we use Cinebench R15 CPU Multi 64-Bit as a benchmark. However, last year we began running it in a 30-minute loop in order to detect throttling. If our tests detect throttling, the laptop's Performance sub-rating, and consequently its total rating, is reduced accordingly. Each benchmark score is plotted on a graph over time, resulting in the graphs you will see below.
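
One way a loop of scores could be reduced to a throttling verdict is to compare the best run against the average of the last few runs. The minimal sketch below does that. The function names, the three-run tail, and the 5 percent threshold are assumptions chosen for illustration, not the rules behind our Performance rating, and the example scores are made up.

```python
from statistics import mean
from typing import Sequence


def sustained_drop_pct(scores: Sequence[float], tail: int = 3) -> float:
    """Percentage drop from the best run to the average of the last `tail` runs."""
    if len(scores) < tail:
        raise ValueError("need at least `tail` runs to judge sustained performance")
    peak = max(scores)
    sustained = mean(scores[-tail:])
    return (peak - sustained) / peak * 100


def is_throttling(scores: Sequence[float], threshold_pct: float = 5.0, tail: int = 3) -> bool:
    """Flag a loop whose sustained score falls more than `threshold_pct` below its peak."""
    return sustained_drop_pct(scores, tail) > threshold_pct


steady = [700, 698, 701, 699, 700]        # hypothetical, well-cooled machine
dropping = [700, 690, 600, 560, 560, 560]  # hypothetical, throttling machine
print(is_throttling(steady), is_throttling(dropping))  # False True
print(round(sustained_drop_pct(dropping), 1))          # 20.0
```

Averaging a tail of runs rather than taking the single lowest score keeps one noisy run from triggering the flag.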

Please note: while I will link some reviews directly, my point here isn't to name and shame any outlets in particular; I simply want to highlight that further benchmarking has become necessary. After all, oversights happen, and even Notebookcheck has let some throttling laptops through its tests without note in the past. I will be referencing our Cinebench loop test frequently, but it is certainly not the only type of test that can be looped for sustained loads.

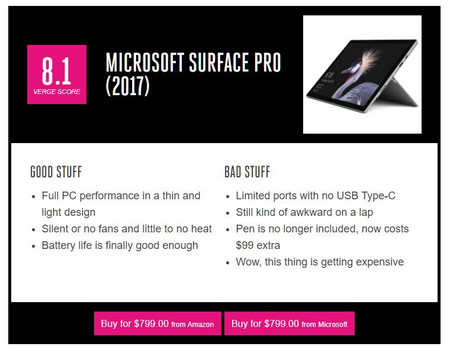

Microsoft Surface Pro

Let's start with the Surface Pro. The Verge praised the new Surface Pro for "full PC performance in a thin and light design". TechRadar awarded the Surface Pro its "Recommended" distinction at 4.5 out of 5 stars, and even listed a fair assortment of benchmark scores, including Cinebench. Digital Trends rated the Surface Pro 9.0 out of 10, citing "excellent notebook performance in a tablet format". None of these outlets mentioned a performance drop-off, likely because they didn't test for one.

In the first chart, you can see a clear drop-off from around 340 to 225, a drop in performance of roughly 34 percent for the passively cooled Core i5 model (note that this refers to the U-series chip, not the 4.5W Y-series).

The costlier Core i7-7660U SKU uses active cooling and thus sees a smaller drop, from 410 to 335 (about 18 percent). As a result, the i7's final score ends up about the same as the Core i5 model's initial performance, which the i5 can't maintain because it lacks a fan.
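
The drop figures for the two Surface Pro SKUs follow from a simple relative-drop calculation against the starting score. A minimal Python sketch using the rounded scores quoted above; the `drop_pct` helper is hypothetical, and its output is only as precise as those rounded inputs.

```python
def drop_pct(initial: float, final: float) -> float:
    """Relative performance loss, in percent, measured against the initial score."""
    return (initial - final) / initial * 100


# Approximate Cinebench R15 multi-core scores quoted above (start, end of loop).
surface_pro = {
    "Core i5 (passive)": (340, 225),
    "Core i7-7660U (fan)": (410, 335),
}

for sku, (start, end) in surface_pro.items():
    print(f"{sku}: {start} -> {end}, {drop_pct(start, end):.1f}% drop")
# Core i5 (passive): 340 -> 225, 33.8% drop
# Core i7-7660U (fan): 410 -> 335, 18.3% drop
```

Dividing by the initial score answers the buyer's question ("how much of what I saw at first do I keep?"). Dividing by the throttled score instead (115 / 225) would inflate the i5's figure to about 51 percent.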

To be clear, we still gave the Surface Pro an extremely high score of 90 percent despite this throttling. However, we highlighted the issue in the review as well as in a subsequent news article. The important thing in my mind is that the consumer is at least made aware of the significant performance drop-off for each SKU; it is then up to them to determine whether the machine will meet their needs. That is something less and less determinable from specs alone, due to confusing component naming schemes and the use of passive cooling.

MSI GS73VR 7RF

The GS73VR 7RF received mixed reviews, mostly on account of its build quality. PC Mag panned the GS73VR for its materials and price, giving it 2 out of 5 stars. Still, it turned in winning scores on PC Mag's Handbrake, Cinebench, and Photoshop benchmarks. PC Gamer ended up recommending the GS73VR 6RF for its strong gaming benchmark performance, while CNET gave it a lukewarm 7.5 out of 10, mostly dinging its price and build quality.

Performance-wise, things looked good in our GS73VR review until around 20 minutes in, at which point its score plummeted from 720 to 504. That's a 30 percent performance drop, which the enthusiast gamer who would purchase a 17.3-inch gaming laptop would probably notice.
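
A late drop like this is why a loop needs to run long enough. A single run, or even a ten-minute session, would have missed it. The minimal sketch below finds when throttling starts by scanning a timed loop for the first run that falls well below the opening score. The 10 percent threshold is an arbitrary assumption, and the timeline in the example is hypothetical: only the 720 and 504 endpoints and the roughly 20-minute mark come from our review.

```python
from typing import Optional, Sequence, Tuple


def throttle_onset(
    runs: Sequence[Tuple[float, float]], threshold_pct: float = 10.0
) -> Optional[float]:
    """Return the elapsed minutes of the first run scoring more than `threshold_pct`
    below the first run, or None if the score never falls that far.

    `runs` holds (elapsed_minutes, score) pairs in chronological order.
    """
    if not runs:
        return None
    baseline = runs[0][1]
    for minutes, score in runs:
        if (baseline - score) / baseline * 100 > threshold_pct:
            return minutes
    return None


# Hypothetical timeline shaped like the GS73VR result (intermediate values made up).
gs73vr_like = [(0, 720), (5, 718), (10, 721), (15, 716), (20, 504), (25, 504)]
print(throttle_onset(gs73vr_like))  # 20
```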

MSI GS63VR 7RF

The GS63VR has received positive reviews from most sites, including Overclock3d, PCWorld, UltrabookReview (which, I might proudly add, is one of the few to mention the hot temperatures), and TheTechy.

Indeed, it is a fine laptop by most accounts. However, our tests showed a near-instantaneous drop of roughly 11 percent, with the notebook achieving its high score of 740 only on the first run. After two more runs, the score stayed pinned at a hair under 660.

Given the demographic such a thin and light performance-focused machine targets, I (again) suggest that noting any issues of sustaining clocks under load would be particularly appropriate for a review.

Dell Precision 5520

The Precision 5520 is the business workstation twin of the XPS 9560. Focused on packing the power of a mobile workstation for professionals into a slim package, the Precision 5520 was praised by Digital Trends for its "strong performance thanks to a Xeon quad-core CPU and Nvidia Quadro GPU", Laptop Mag for its "fast performance", and Windows Central for "top for this class" performance. None of these reviews appeared to have conducted sustained load benchmarks, however.

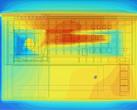

Because the M5520 appears to use the same chassis and mainboard as the XPS 15 (the 9550, specifically), we can expect some of the same pitfalls identified with that design, namely VRM throttling. In this series, throttling tends to occur under sustained heavy or mixed loads with Core i7 and higher CPUs, when the VRMs get too hot to supply enough power to the CPU (VRMs lose efficiency at higher temperatures). This doesn't seem to affect many Core i3 or i5 models, but it certainly affected our Precision 5520 with its high-end E3-1505 Xeon. With its 3.0-4.0 GHz Xeon CPU, our Precision 5520 started out strong with a score of 710, but we soon found that the score yo-yoed between 650 and 460.

At its lowest, that's a drop of about 35 percent from peak performance, and one that a prospective buyer of such a workstation would probably encounter in their daily workflow.
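
When the score yo-yos like this, a single "before and after" number can hide how bad the troughs are. The minimal sketch below reports both the worst-case drop from peak and the average sustained drop. Treating only the first run as the cool-start burst is an assumption. The alternating sequence in the example is hypothetical and built only from the 710, 650, and 460 figures quoted above.

```python
from statistics import mean
from typing import Dict, Sequence


def oscillation_summary(scores: Sequence[float], skip: int = 1) -> Dict[str, float]:
    """Summarize a loop whose score swings up and down instead of settling.

    The first `skip` runs are treated as the cool-start burst and excluded from
    the sustained statistics; the peak is taken over all runs.
    """
    if len(scores) <= skip:
        raise ValueError("not enough runs after the initial burst")
    peak = max(scores)
    sustained = scores[skip:]
    trough = min(sustained)
    return {
        "peak": peak,
        "trough": trough,
        "worst_drop_pct": (peak - trough) / peak * 100,
        "mean_drop_pct": (peak - mean(sustained)) / peak * 100,
    }


summary = oscillation_summary([710, 650, 460, 650, 460, 650, 460])
print(round(summary["worst_drop_pct"], 1))  # 35.2
print(round(summary["mean_drop_pct"], 1))   # 21.8
```

The worst-case figure is what a user experiences during a trough. The mean figure reflects what a long job would average out to under this hypothetical pattern.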

HP EliteBook 725 G4

Just to make it clear we aren't picking on Intel here, the HP EliteBook 725 G4, powered by AMD's Bristol Ridge PRO A12-9800B APU at 2.7 GHz, is also an example of a laptop that loses significant performance under sustained load. Our Cinebench loop again reveals a 10 percent drop in performance after just a few runs. In this case, however, this particular model of notebook was not too popular with reviewers, and I wasn't able to find third-party reviews of it.

Keep in mind that these are just a handful of examples from our review database. They come from recent reviews we have conducted, and they represent only a small fraction of the laptops whose performance decreases significantly under sustained load. As laptops are made thinner, lighter, and quieter than ever, this problem is occurring more and more regularly.

The solution

I'm not saying that everyone needs to mimic our testing methodology or loop Cinebench, but this is a problem that benchmarks need to address somehow. Nearly any demanding benchmark or game that taxes the CPU and GPU will yield sufficient data once it has been running for a while. It isn't particularly hard, either: simply running HWiNFO in the background with its CPU and GPU sensors enabled should give you all the information you need to determine whether throttling is occurring. And if it occurs, it's your duty as media to note this fact. Then leave it up to consumers to decide whether they want to spend their hard-earned money on the product as is.
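
HWiNFO can write its sensor readings to a CSV log, which makes this check easy to script. The minimal sketch below uses Python's `csv` module. The column names are placeholders, not HWiNFO's actual labels, which vary with the hardware and the sensors enabled, so copy the real ones from your log's header row. The 3100 MHz threshold assumes the i7-6700HQ four-core Turbo Boost clock discussed earlier.

```python
import csv
import io
from typing import Dict, List, Optional, TextIO

# Placeholder column names (assumptions): copy the real labels from the header
# row of your own log, as they depend on the hardware and sensors enabled.
CLOCK_COL = "Core Clock [MHz]"
THROTTLE_COL = "Thermal Throttling [Yes/No]"


def throttled_rows(log: TextIO, min_clock_mhz: float) -> List[Dict[str, Optional[str]]]:
    """Return log rows where the throttle flag reads "Yes" or the clock falls
    below `min_clock_mhz` (for example, the expected all-core Turbo Boost clock)."""
    flagged = []
    for row in csv.DictReader(log):
        try:
            clock = float(row[CLOCK_COL])
        except (KeyError, TypeError, ValueError):
            # Skip rows without a numeric clock, such as summary or repeated header rows.
            continue
        flag = (row.get(THROTTLE_COL) or "").strip().lower()
        if flag == "yes" or clock < min_clock_mhz:
            flagged.append(row)
    return flagged


# Tiny synthetic log standing in for a real capture.
sample_log = io.StringIO(
    "Time,Core Clock [MHz],Thermal Throttling [Yes/No]\n"
    "12:00:00,3100,No\n"
    "12:00:02,3100,No\n"
    "12:00:04,2400,Yes\n"
)
hits = throttled_rows(sample_log, min_clock_mhz=3100)
print(len(hits))        # 1
print(hits[0]["Time"])  # 12:00:04
```

Checking both the flag and the clock covers tools or sensors that report one but not the other. A real log may also need an explicit `encoding` argument when opened.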

The consequences

What's the worst thing that could happen if we all agreed to test new laptops under more exacting conditions? Yes, it takes us a bit more time. Yes, OEMs might lose out on revenue they currently earn by tricking consumers with components that can't run as advertised, but I am sure they'd also see fewer returns and fewer angry customers who feel they didn't get what was advertised. Perhaps manufacturers will start equipping their computers with properly engineered cooling solutions appropriate for the included hardware. Finally, testing for CPU throttling will also open the door for reviewers to test for GPU throttling. Either way, I can't see the increased availability of information as a bad thing, whether or not the consumer ultimately makes use of it.