Engineers at the Stanford Computational Imaging Lab have developed a prototype for lightweight AR glasses, opening the door to future holographic AR glasses that are significantly lighter than currently available headsets. The key to this innovation is an AI-driven display that projects a 3D image through two metasurfaces within a thin optical waveguide, without using bulky lenses.

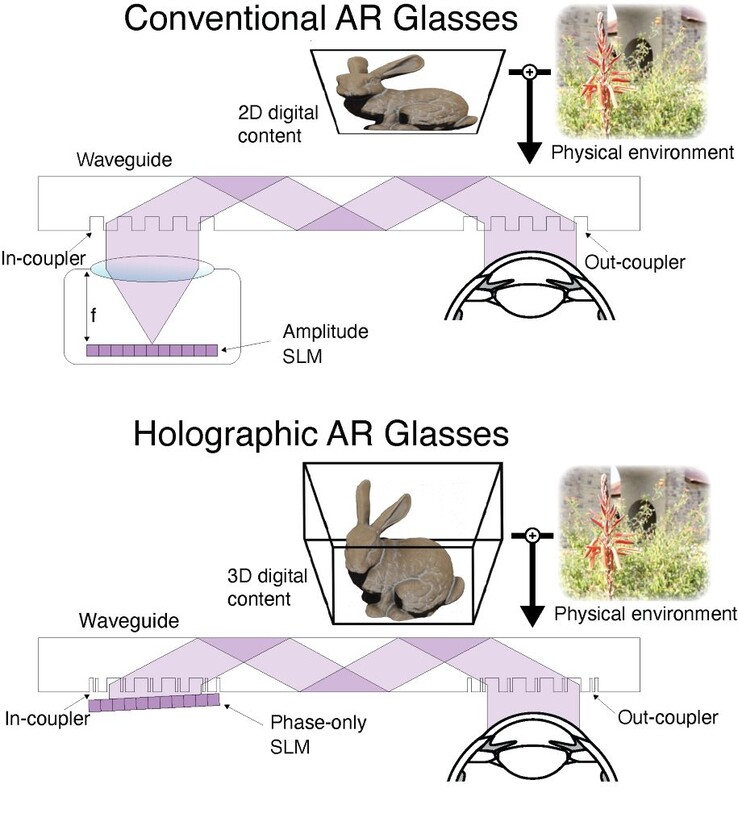

Conventional AR/VR/XR headsets typically use focusing lenses to project micro LED or OLED display images into the wearer’s eyes. Unfortunately, the depth required for lenses leads to bulky designs, such as Google Cardboard devices that hold a smartphone or the Apple Vision Pro headset, which weighs over 21 ounces (about 600 grams).

Thinner designs sometimes use an optical waveguide (think of it like a periscope) to move the display and lenses from in front of the eyes to the side of the head, but these designs limit the user to 2D images and text. Stanford engineers have combined AI technology with metasurface waveguides to lower the weight and bulk of their AR headset while still projecting a 3D holographic image.

The first innovation is the elimination of bulky focusing lenses. Instead, ultrafine metasurfaces etched into the waveguide ‘encode’, then ‘decode’ a projected image by bending and aligning light. Very roughly, think of it as splashing the water at one end of a pool according to a set rhythm, and when the waves reach the other end, you can read the waves to recreate the original rhythm. The Stanford glasses use one metasurface in front of the display and another in front of the eye.

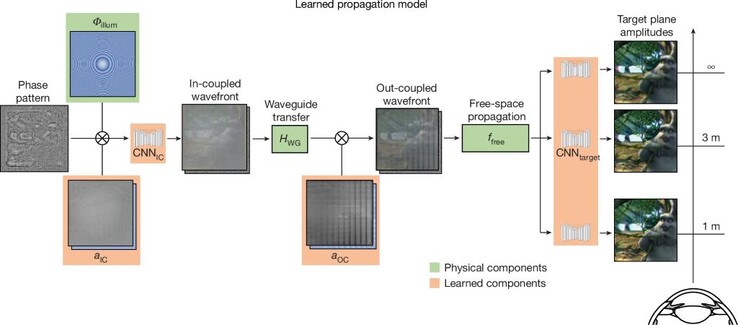

The second is a waveguide propagation model that simulates how light bounces through the waveguide, so that holographic images can be created accurately. 3D holograms depend greatly on the accuracy of light transmission, and even nanometer-scale variations in the surfaces of the waveguide can greatly alter the holographic image the wearer sees. Here, deep-learning convolutional neural networks using a modified UNet architecture are trained on red, green, and blue light sent through the waveguide to compensate for optical aberrations in the system. Roughly, think of it as shooting an arrow aimed at the bull’s-eye that hits just a tiny bit to the right: now you know to compensate by aiming a tiny bit to the left.
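The arrow analogy can be made concrete with a toy calibration loop. This is only a minimal sketch under strong assumptions: the hypothetical `real_system` function stands in for the physical waveguide, its gain and offset values are made up, and a two-parameter line fit stands in for the convolutional networks the Stanford team actually trained. It shows the general idea of measuring how the hardware deviates from an ideal model and then pre-compensating the input.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = gain * x + offset."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    gain = sxy / sxx
    return gain, mean_y - gain * mean_x


def real_system(x):
    # Hypothetical hardware: an ideal model would predict output == input,
    # but an (invented) imperfection adds a small gain and offset.
    return 1.05 * x + 0.2


# Calibration: send known inputs through the system and record what comes out.
calibration_inputs = [0.0, 1.0, 2.0, 3.0, 4.0]
measured = [real_system(x) for x in calibration_inputs]
gain, offset = fit_line(calibration_inputs, measured)


def compensate(target):
    """Invert the learned model to pick the input that lands on the target."""
    return (target - offset) / gain


target = 2.5
uncorrected_error = abs(real_system(target) - target)
corrected_error = abs(real_system(compensate(target)) - target)
print(f"uncorrected: {uncorrected_error:.3f}, corrected: {corrected_error:.3e}")
```

In the real system the "input" is a full phase pattern and the "output" is a captured image for each color channel, so the learned correction has far more parameters than the two fitted here.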

The third is the use of an AI neural network to create holographic images. A 48 GB Nvidia RTX A6000 was used to train the AI on a broad range of phase patterns projected from the Holoeye Leto-3 phase-only SLM display module. Over time, the AI learned what patterns could create specific images at four distances (1 m, 1.5 m, 3 m, and infinity).
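To give a feel for what "finding a phase pattern that creates a specific image" means, here is a minimal sketch using the classic Gerchberg-Saxton algorithm rather than the neural network described above. It is an assumption-laden toy: it works in 1D, models only the far field (roughly the "infinity" distance) with a plain Fourier transform, and ignores the waveguide entirely. The function names and the two-bar target are hypothetical.

```python
import cmath
import math
import random


def dft(field, inverse=False):
    """Unitary 1D discrete Fourier transform (O(N^2), fine for a toy size)."""
    n = len(field)
    sign = 1 if inverse else -1
    scale = 1 / math.sqrt(n)
    return [
        scale * sum(
            field[j] * cmath.exp(sign * 2j * math.pi * j * k / n)
            for j in range(n)
        )
        for k in range(n)
    ]


def gerchberg_saxton(target_amplitude, iterations=50, seed=0):
    """Return SLM phases whose far-field amplitude approximates the target."""
    n = len(target_amplitude)
    rng = random.Random(seed)
    phases = [rng.uniform(0, 2 * math.pi) for _ in range(n)]
    errors = []  # errors[i] is the mismatch of the pattern entering iteration i
    for _ in range(iterations):
        # Phase-only constraint: the SLM sets phase, amplitude stays at 1.
        slm_field = [cmath.exp(1j * p) for p in phases]
        far_field = dft(slm_field)
        errors.append(
            sum((abs(f) - a) ** 2 for f, a in zip(far_field, target_amplitude))
        )
        # Keep the far-field phase, impose the target amplitude.
        constrained = [
            a * cmath.exp(1j * cmath.phase(f))
            for f, a in zip(far_field, target_amplitude)
        ]
        # Propagate back and keep only the phase for the next SLM pattern.
        phases = [cmath.phase(v) for v in dft(constrained, inverse=True)]
    return phases, errors


# Hypothetical target: two bright bars on a dark background, scaled so its
# energy matches that of a unit-amplitude SLM field.
n = 32
raw = [1.0 if 8 <= k < 12 or 20 <= k < 24 else 0.0 for k in range(n)]
norm = math.sqrt(n / sum(a * a for a in raw))
target = [a * norm for a in raw]

phases, errors = gerchberg_saxton(target)
print(f"amplitude error: {errors[0]:.3f} -> {errors[-1]:.3f}")
```

An iterative method like this has to be rerun for every new image, and it assumes an idealized propagation model; a trained network paired with a calibrated model of the actual optics is one way to address both limits.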

Taken together, these innovations allow the AI model powering this headset to output significantly better 3D images than the alternatives. For now, the Stanford AR glasses remain a prototype.