Nvidia CEO Jensen Huang revealed the company’s latest AI products and services during the GTC 2024 keynote on March 18, 2024, in San Jose, CA. Targeted at enterprise customers, the new Nvidia offerings promise to greatly accelerate AI training and its application in fields such as robotics, weather forecasting, drug discovery, and warehouse automation. The Nvidia Blackwell GPU, NIMs, NeMo, and AI foundry were among the notable offerings unveiled.

Training an AI model is much more compute-intensive than running one because millions of input documents must be processed. This can take weeks or even months on the fastest supercomputers available today, even for companies like Microsoft. Nvidia released the Hopper GPU platform in 2022 to handle such large compute loads.

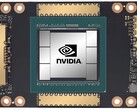

At GTC 2024, Huang unveiled Blackwell as the follow-up to Hopper, offered as a drop-in board replacement for current Hopper installations. The Blackwell GPU consists of two 104-billion-transistor GPU dies (208 billion in total) built on a TSMC 4NP process, delivering 20 FP4 petaflops with 192GB of HBM3e memory capable of transferring 8TB/s. In comparison, a desktop 4090 GPU has a single 76.3-billion-transistor die on a 5 nm process, with up to 1.32 Tensor FP8 petaflops of performance and 24GB of GDDR6X memory. If one doubles the 4090 FP8 number to adjust to 4 bits, that’s roughly 20 petaflops for Blackwell versus about 2.6 petaflops for the 4090.
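The comparison above can be reproduced with a few lines of arithmetic. This is only a sketch of the article's rule of thumb: the factor of two for going from FP8 to FP4 is the assumption stated in the paragraph, not a measured figure, and the variable names are illustrative.

```python
# Figures quoted in the paragraph above.
BLACKWELL_FP4_PFLOPS = 20.0   # Blackwell GPU, FP4
RTX4090_FP8_PFLOPS = 1.32     # desktop 4090, Tensor FP8

# Assumption from the article: halving precision doubles throughput.
FP8_TO_FP4_FACTOR = 2.0

# Estimated 4-bit throughput of the 4090 under that assumption.
rtx4090_fp4_estimate = RTX4090_FP8_PFLOPS * FP8_TO_FP4_FACTOR

# How many times faster Blackwell is than the adjusted 4090 figure.
blackwell_vs_4090 = BLACKWELL_FP4_PFLOPS / rtx4090_fp4_estimate

print(f"4090 FP4 estimate: {rtx4090_fp4_estimate:.2f} petaflops")
print(f"Blackwell advantage: {blackwell_vs_4090:.1f}x")
```

Under these assumptions the adjusted 4090 figure is 2.64 petaflops, which puts a single Blackwell GPU at between seven and eight times the desktop card.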

When 72 Blackwell GPUs are racked in a single DGX GB200 NVL72 cabinet with liquid cooling, Grace CPUs, improved NVLink, and other interconnect improvements, performance over Hopper cabinets jumps by 22x for FP8 AI training and 45x for FP4 AI inference. Blackwell AI training power consumption is also reduced by approximately 4x versus Hopper.

NIM pre-packaged AI models

To take advantage of this leap in performance, Nvidia introduced pre-packaged AI models called NIMs (Nvidia Inference Microservices), which use Kubernetes to run on Nvidia CUDA GPUs locally or in the cloud. Access to the stand-alone NIMs is through a simplified human API. The goal is a future where AI services are built by asking an AI to create an app with certain features; the AI then mixes and matches various NIMs without requiring low-level programming. Finally, to assist in the training of NIMs, Nvidia introduced NeMo Microservices to customize, evaluate, and guardrail the training process on corporate documents.

Nvidia BioNeMo and biological NIMs

Huang announced that Nvidia will be developing NIMs trained on biological and medical data to give researchers easier access to AI that can improve all aspects of medicine, such as finding drug candidates faster.

Thor ASIL-D and BYD autonomous EVs

Significantly, Huang stated that BYD will be the very first EV maker in the world to adopt Nvidia's new Thor ASIL-D computer, which uses an AI SoC to process visual and driving input to provide high safety in autonomous driving. Coupled with the recent announcement of SuperDrive by Plus, this suggests BYD will be one of the first automobile companies to release a Level 4 autonomous EV.

Nvidia Project GR00T humanoid robots

Huang further demonstrated Nvidia's advances in robotics by showcasing the abilities of its robots, which are first trained in the Omniverse as digital twins, then allowed to complete tasks in real-life robotic bodies. Baking, twirling sticks between fingers, product sorting and assembly, and navigating around obstacles were shown. To achieve this level of robotics, the Nvidia Project GR00T AI was first trained on text, video, and demonstration inputs, then further refined with actual observations of tasks being done. Coupled with the same Thor computer used in vehicles and the Nvidia Tokkio AI language model, demonstration robots were able to observe actions done by humans, then replicate them to make drinks, play the drums, and respond to spoken requests.

Nvidia Omniverse Cloud adds Apple Vision Pro support

Of minor note, Huang stated that Nvidia Omniverse Cloud now streams to the Apple Vision Pro headset in addition to the Meta Quest and HTC Vive Pro. This is clearly aimed at developers using Nvidia cloud GPUs, since no Mac has a compatible Nvidia GPU.


Source(s)

NVIDIA Launches Blackwell-Powered DGX SuperPOD for Generative AI Supercomputing at Trillion-Parameter Scale

Scales to Tens of Thousands of Grace Blackwell Superchips Using Most Advanced NVIDIA Networking, NVIDIA Full-Stack AI Software, and Storage

Features up to 576 Blackwell GPUs Connected as One With NVIDIA NVLink

NVIDIA System Experts Speed Deployment for Immediate AI Infrastructure

March 18, 2024

GTC—NVIDIA today announced its next-generation AI supercomputer — the NVIDIA DGX SuperPOD™ powered by NVIDIA GB200 Grace Blackwell Superchips — for processing trillion-parameter models with constant uptime for superscale generative AI training and inference workloads.

Featuring a new, highly efficient, liquid-cooled rack-scale architecture, the new DGX SuperPOD is built with NVIDIA DGX™ GB200 systems and provides 11.5 exaflops of AI supercomputing at FP4 precision and 240 terabytes of fast memory — scaling to more with additional racks.

Each DGX GB200 system features 36 NVIDIA GB200 Superchips — which include 36 NVIDIA Grace CPUs and 72 NVIDIA Blackwell GPUs — connected as one supercomputer via fifth-generation NVIDIA NVLink®. GB200 Superchips deliver up to a 30x performance increase compared to the NVIDIA H100 Tensor Core GPU for large language model inference workloads.

“NVIDIA DGX AI supercomputers are the factories of the AI industrial revolution,” said Jensen Huang, founder and CEO of NVIDIA. “The new DGX SuperPOD combines the latest advancements in NVIDIA accelerated computing, networking and software to enable every company, industry and country to refine and generate their own AI.”

The Grace Blackwell-powered DGX SuperPOD features eight or more DGX GB200 systems and can scale to tens of thousands of GB200 Superchips connected via NVIDIA Quantum InfiniBand. For a massive shared memory space to power next-generation AI models, customers can deploy a configuration that connects the 576 Blackwell GPUs in eight DGX GB200 systems connected via NVLink.
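The system counts quoted so far can be cross-checked with simple arithmetic. The sketch below assumes that the 11.5 exaflops and 240 terabytes figures describe the eight-system configuration; the release does not say so explicitly, and the variable names are illustrative.

```python
# Figures quoted in the press release text above.
SUPERCHIPS_PER_SYSTEM = 36       # GB200 Superchips per DGX GB200 system
GPUS_PER_SYSTEM = 72             # Blackwell GPUs per DGX GB200 system
SYSTEMS_PER_SUPERPOD = 8         # minimum SuperPOD configuration
SUPERPOD_FP4_EXAFLOPS = 11.5     # assumed to apply to eight systems
SUPERPOD_FAST_MEMORY_TB = 240    # assumed to apply to eight systems

# Each Superchip pairs one Grace CPU with this many Blackwell GPUs.
gpus_per_superchip = GPUS_PER_SYSTEM // SUPERCHIPS_PER_SYSTEM

# Total GPUs in the NVLink-connected eight-system configuration.
gpus_per_superpod = GPUS_PER_SYSTEM * SYSTEMS_PER_SUPERPOD

# Implied per-GPU FP4 throughput (1 exaflop = 1000 petaflops).
fp4_pflops_per_gpu = SUPERPOD_FP4_EXAFLOPS * 1000 / gpus_per_superpod

# Implied fast memory per DGX GB200 system.
memory_tb_per_system = SUPERPOD_FAST_MEMORY_TB / SYSTEMS_PER_SUPERPOD

print(gpus_per_superchip, gpus_per_superpod)
print(f"{fp4_pflops_per_gpu:.2f} petaflops per GPU")
print(f"{memory_tb_per_system:.0f} TB per system")
```

Under that assumption the numbers are self-consistent: two GPUs per Superchip, 576 GPUs across eight systems, and just under 20 FP4 petaflops per GPU, which matches the per-GPU figure quoted for Blackwell earlier in the article.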

New Rack-Scale DGX SuperPOD Architecture for Era of Generative AI

The new DGX SuperPOD with DGX GB200 systems features a unified compute fabric. In addition to fifth-generation NVIDIA NVLink, the fabric includes NVIDIA BlueField®-3 DPUs and will support NVIDIA Quantum-X800 InfiniBand networking, announced separately today. This architecture provides up to 1,800 gigabytes per second of bandwidth to each GPU in the platform.

Additionally, fourth-generation NVIDIA Scalable Hierarchical Aggregation and Reduction Protocol (SHARP)™ technology provides 14.4 teraflops of In-Network Computing, a 4x increase in the next-generation DGX SuperPOD architecture compared to the prior generation.

Turnkey Architecture Pairs With Advanced Software for Unprecedented Uptime

The new DGX SuperPOD is a complete, data-center-scale AI supercomputer that integrates with high-performance storage from NVIDIA-certified partners to meet the demands of generative AI workloads. Each is built, cabled and tested in the factory to dramatically speed deployment at customer data centers.

The Grace Blackwell-powered DGX SuperPOD features intelligent predictive-management capabilities to continuously monitor thousands of data points across hardware and software to predict and intercept sources of downtime and inefficiency — saving time, energy and computing costs.

The software can identify areas of concern and plan for maintenance, flexibly adjust compute resources, and automatically save and resume jobs to prevent downtime, even without system administrators present.

If the software detects that a replacement component is needed, the cluster will activate standby capacity to ensure work finishes in time. Any required hardware replacements can be scheduled to avoid unplanned downtime.

NVIDIA DGX B200 Systems Advance AI Supercomputing for Industries

NVIDIA also unveiled the NVIDIA DGX B200 system, a unified AI supercomputing platform for AI model training, fine-tuning and inference.

DGX B200 is the sixth generation of air-cooled, traditional rack-mounted DGX designs used by industries worldwide. The new Blackwell architecture DGX B200 system includes eight NVIDIA Blackwell GPUs and two 5th Gen Intel® Xeon® processors. Customers can also build DGX SuperPOD using DGX B200 systems to create AI Centers of Excellence that can power the work of large teams of developers running many different jobs.

DGX B200 systems include the FP4 precision feature in the new Blackwell architecture, providing up to 144 petaflops of AI performance, a massive 1.4TB of GPU memory and 64TB/s of memory bandwidth. This delivers 15x faster real-time inference for trillion-parameter models over the previous generation.
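Dividing the DGX B200 system totals by its eight GPUs gives a rough per-GPU picture. This is a back-of-the-envelope sketch using only the rounded figures quoted above; the variable names are illustrative and the results inherit the rounding of the inputs.

```python
# Figures quoted in the press release text above.
GPUS_PER_DGX_B200 = 8
SYSTEM_FP4_PFLOPS = 144.0        # "up to 144 petaflops"
SYSTEM_GPU_MEMORY_TB = 1.4       # rounded figure from the release
SYSTEM_MEMORY_BW_TBPS = 64.0     # memory bandwidth in TB/s

# Per-GPU shares of each system total.
fp4_pflops_per_gpu = SYSTEM_FP4_PFLOPS / GPUS_PER_DGX_B200
memory_gb_per_gpu = SYSTEM_GPU_MEMORY_TB * 1000 / GPUS_PER_DGX_B200
bandwidth_tbps_per_gpu = SYSTEM_MEMORY_BW_TBPS / GPUS_PER_DGX_B200

print(f"{fp4_pflops_per_gpu:.0f} FP4 petaflops per GPU")
print(f"{memory_gb_per_gpu:.0f} GB of memory per GPU (from the rounded 1.4TB)")
print(f"{bandwidth_tbps_per_gpu:.0f} TB/s of memory bandwidth per GPU")
```

The bandwidth works out to 8TB/s per GPU, in line with the Blackwell figure quoted in the article, while the implied 18 petaflops per GPU is somewhat below the 20 petaflops quoted there.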

DGX B200 systems include advanced networking with eight NVIDIA ConnectX™-7 NICs and two BlueField-3 DPUs. These provide up to 400 gigabits per second bandwidth per connection — delivering fast AI performance with NVIDIA Quantum-2 InfiniBand and NVIDIA Spectrum™-X Ethernet networking platforms.

Software and Expert Support to Scale Production AI

All NVIDIA DGX platforms include NVIDIA AI Enterprise software for enterprise-grade development and deployment. DGX customers can accelerate their work with the pretrained NVIDIA foundation models, frameworks, toolkits and new NVIDIA NIM microservices included in the software platform.

NVIDIA DGX experts and select NVIDIA partners certified to support DGX platforms assist customers throughout every step of deployment, so they can quickly move AI into production. Once systems are operational, DGX experts continue to support customers in optimizing their AI pipelines and infrastructure.

Availability

NVIDIA DGX SuperPOD with DGX GB200 and DGX B200 systems are expected to be available later this year from NVIDIA’s global partners.

For more information, watch a replay of the GTC keynote or visit the NVIDIA booth at GTC, held at the San Jose Convention Center through March 21.