Stable Diffusion is a foundational AI model that "encodes a huge amount (1 billion parameters or more) of knowledge about speech and visuals" in order to generate a photorealistic image from any given text description in about 100 seconds or less. A model of that size is usually a cloud-based resource. Qualcomm's AI Research group, however, claims to have got it working on a mobile device based on the new flagship Snapdragon 8 Gen 2 processor.

The company's vice-president of engineering, Dr. Jilei Hou, asserts that this is possible thanks to advancements such as the Qualcomm AI Stack, AI Model Efficiency Toolkit (AIMET) and AI Engine, which allowed the group to take a conventional version of Stable Diffusion in the FP32 data format and convert it "through quantization, compilation, and hardware acceleration" into a 'compact' INT8-based version for the 8 Gen 2's Hexagon Processor.
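To give a sense of what converting FP32 values to INT8 involves, here is a minimal sketch of symmetric per-tensor quantization in plain Python. It is an illustration only and not Qualcomm's AIMET pipeline, whose exact method the announcement does not detail; the function names and the sample weights are hypothetical, and the storage estimate simply assumes the roughly 1 billion parameters quoted above.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale (assumed scheme)."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the INT8 codes."""
    return [q * scale for q in quantized]


# Hypothetical FP32 weights, chosen only for illustration.
weights = [0.82, -1.27, 0.003, 0.5, -0.41]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

# Rough weight-storage estimate, assuming about 1 billion parameters.
PARAMS = 1_000_000_000
fp32_gb = PARAMS * 4 / 1e9  # 4 bytes per FP32 value
int8_gb = PARAMS * 1 / 1e9  # 1 byte per INT8 value

print(q, scale, max_error)
print(f"FP32: {fp32_gb} GB, INT8: {int8_gb} GB")
```

Each weight shrinks from four bytes to one, at the cost of a rounding error of at most half the scale per value. Keeping that error from degrading the generated image is the kind of problem a quantization toolkit is meant to address.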

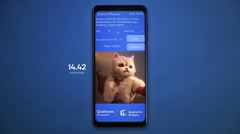

This reportedly allowed the reference smartphone to use its new model to render the prompt "Super cute fluffy cat warrior in armor, photorealistic, 4K, ultra detailed, vray rendering, unreal engine" as a (subjectively) accurate image over 20 inference steps in 14.42 seconds - the fastest on a smartphone to date, and "comparable to cloud latency" (according to Qualcomm, at least).
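For a rough sense of scale, the reported figures can be turned into an average time per inference step. This is back-of-the-envelope arithmetic only: it assumes the 14.42 seconds is spread evenly across the 20 steps and ignores any fixed cost outside the denoising loop, which Qualcomm's figures do not break out.

```python
total_seconds = 14.42  # reported end-to-end time
steps = 20             # reported number of inference steps

seconds_per_step = total_seconds / steps
print(f"{seconds_per_step:.3f} s per step")  # prints "0.721 s per step"
```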

Furthermore, the process is billed as fully on-device, which Dr. Hou touts as advantageous in terms of connectivity-independence, reliability, speed and, possibly most importantly, privacy. The VP also points to further potential applications of this on-device AI, including image editing, resolution upscaling, re-styling and much more.

On the other hand, Dr. Hou also indicates that these capabilities should really take effect "with the next-gen Snapdragon"; therefore, one might need to wait until 2024 at the least for an Android device that can act as an art-generating machine by itself without the need for the internet.
