Back in February 2020, the first leaks about AMD’s Instinct MI100 compute GPU, codenamed Arcturus, claimed that the upcoming high-performance computing processor would get 32 GB of HBM2 memory, yet all that VRAM would not count for much because the rumored core frequencies seemed too low. As a result, the expected performance of the MI100 appeared to land somewhere between an RTX 2080 Super and an RTX 2080 Ti. Thanks to a new leak posted by Adored TV, we now have a better idea of how the MI100 may perform, and it looks like the first leaks were off by a significant margin.

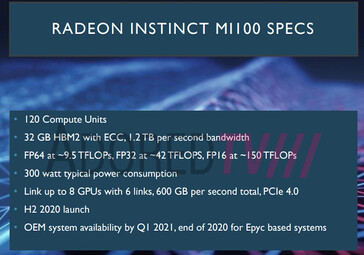

Adored TV reiterates that the MI100 will get 32 GB of HBM2 VRAM with ECC, resulting in 1.2 TB/s of bandwidth, but the number of compute units (CUs) now appears to be 120. We are not sure whether the CDNA architecture matches RDNA in the number of cores per compute unit. If it does, with 64 cores per CU, 120 CUs would mean 7680 cores. However, CDNA may be different and the number of cores could be higher or lower. In any case, the leaked performance specs for the MI100 appear to be well above even those of Nvidia’s A100 compute GPU, which is built on the same Ampere architecture as the RTX 3000 gaming GPUs.

According to the leaked slides, the MI100 is more than 100% faster than the Nvidia A100 in FP32 workloads, boasting almost 42 TFLOPS of processing power versus the A100’s 19.5 TFLOPS. Previous leaks also claimed that the TGP was set to 200 W, but the latest leak shows 300 W, which leaves room for considerably higher core clocks. Assuming each core performs two FP32 operations per clock, the MI100 either has 7680 cores running at around 2.75 GHz, or 15360 cores running at ~1.37 GHz. The latter configuration would be more plausible judging by the lower clocks, but the number of cores seems much too high.
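The clock estimates above can be reproduced with a quick back-of-the-envelope calculation. The following is a minimal sketch, not anything taken from the leaked slides: it assumes the common rule of thumb that peak FP32 throughput equals cores × 2 FLOPs per clock (one fused multiply-add) × clock frequency, and it rounds "almost 42 TFLOPS" to 42.0. The function names and the 128-cores-per-CU case are illustrative only.

```python
# Rough estimate of the clock speed implied by a peak FP32 figure.
# Assumption: each core retires one fused multiply-add (2 FLOPs) per clock.
FLOPS_PER_CORE_PER_CLOCK = 2


def implied_clock_ghz(tflops: float, cores: int) -> float:
    """Clock in GHz needed for `cores` cores to reach `tflops` peak FP32."""
    flops = tflops * 1e12
    return flops / (cores * FLOPS_PER_CORE_PER_CLOCK) / 1e9


def percent_faster(new: float, old: float) -> float:
    """How much faster `new` is than `old`, in percent."""
    return (new / old - 1) * 100


COMPUTE_UNITS = 120   # from the leak
MI100_TFLOPS = 42.0   # "almost 42 TFLOPS", rounded here as an assumption
A100_TFLOPS = 19.5    # A100 FP32 figure quoted in the article

if __name__ == "__main__":
    # 64 cores per CU mirrors RDNA; 128 is the hypothetical doubled case.
    for cores_per_cu in (64, 128):
        cores = COMPUTE_UNITS * cores_per_cu
        clock = implied_clock_ghz(MI100_TFLOPS, cores)
        print(f"{cores} cores -> {clock:.2f} GHz")

    lead = percent_faster(MI100_TFLOPS, A100_TFLOPS)
    print(f"MI100 vs A100 (FP32): {lead:.0f}% faster")
```

With these inputs, the 7680-core case works out to roughly 2.73 GHz and the 15360-core case to roughly 1.37 GHz, in line with the rounded figures quoted above.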

There is also a features slide indicating that the MI100 is indeed better than the A100 in single-precision workloads, but that would be the AMD GPU’s only advantage. The MI100 compute GPUs will target HPC, AI and machine learning applications for the oil/gas and academic markets. Additionally, we learn that the compute GPU is compatible with AMD’s current server-grade EPYC Rome and Milan CPUs as well as Intel’s Xeon CPUs. AMD intends to launch two configurations:

- 1U with 4x MI100 GPUs and 2 EPYC/Xeon CPUs, expected to be available in December this year

- 3U with 8x MI100 GPUs and 2 EPYC CPUs, launching in March 2021