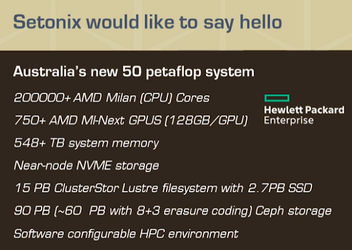

AMD’s upcoming Instinct MI200 compute GPUs are expected to feature in the Setonix supercomputer being built for the Pawsey Supercomputing Centre, as revealed by the Australian facility’s CTO, Ugo Varetto, at ISC 2021. In addition to the 750+ MI200 compute GPUs, Setonix will integrate 200,000+ AMD EPYC Milan CPU cores based on the Zen 3 microarchitecture. The CPU partition will have 548+ TB of system RAM, while each compute GPU will pack 128 GB of HBM2e memory. Peak performance for Setonix is expected to be around 50 petaflops, and the $70 million project will help Pawsey with large data set ingestion, data visualization, data lifecycle management, and data sharing.

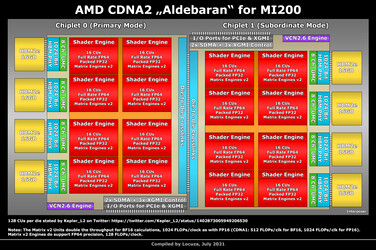

Based on previous leaks, we suspected that the upcoming MI200, codenamed Aldebaran, could use a multi-chip-module design, but the jump from 32 GB to 128 GB of HBM2e memory is quite unexpected. Twitter user Locuza recently posted a CDNA2 MI200 GPU diagram showing compute unit counts and HBM2e distribution. It looks like this will be AMD's largest GPU yet, consisting of two interconnected dies, each with 8 groups of 16 compute units and four 1024-bit HBM2e stacks of 16 GB each. The diagram notes that the Matrix Engines v2 essentially double the BF16 and FP16 throughput of the MI100, allowing for 1024 FLOPs per clock. There is also support for FP64, and packed FP32 throughput is increased to 128 FLOPs per clock. In a follow-up tweet, Locuza mentions that the MI200 GPU and the EPYC Milan CPU feature a new architecture that enables a shared memory address space between the two chips.
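To make the per-die figures easier to check, here is a minimal Python sketch that adds them up for the whole package. It assumes the diagram means 8 groups of 16 compute units and four 16 GB, 1024-bit HBM2e stacks per die. The constant and function names are ours, and the numbers come from the leaked diagram rather than from AMD, so the count of compute units actually enabled on shipping parts may differ.

```python
# Per-die figures as read from the leaked diagram (unconfirmed by AMD).
DIES = 2
CU_GROUPS_PER_DIE = 8        # assumption: "8x 16" means 8 groups of 16 CUs
CUS_PER_GROUP = 16
HBM_STACKS_PER_DIE = 4
GB_PER_STACK = 16
BUS_BITS_PER_STACK = 1024


def mi200_totals():
    """Return package-level totals derived from the per-die figures."""
    cus_per_die = CU_GROUPS_PER_DIE * CUS_PER_GROUP
    stacks = DIES * HBM_STACKS_PER_DIE
    return {
        "compute_units": DIES * cus_per_die,
        "hbm2e_gb": stacks * GB_PER_STACK,
        "bus_width_bits": stacks * BUS_BITS_PER_STACK,
    }


if __name__ == "__main__":
    for name, value in mi200_totals().items():
        print(f"{name}: {value}")
```

Under these assumptions the memory total works out to 128 GB, which matches the per-GPU capacity quoted for Setonix.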

CDNA2 and RDNA3 should have quite a few things in common, so AMD's next-gen gaming GPUs could come with increased VRAM capacities as well, most likely topping out at 32 GB, along with significantly larger Infinity Cache capacities.