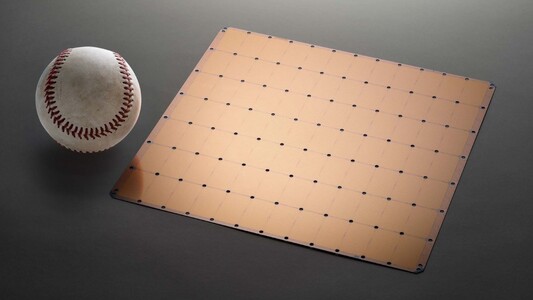

The latest smartphone SoCs usually try to cram as many transistors as possible into chips no larger than a normal-size coin, while computer processors and GPUs tend to be somewhat larger and draw considerably more power. Miniaturization also tends to keep chips locked into certain sizes even as transistor counts and on-chip memory keep growing. However, Cerebras Systems, a relatively new company specializing in complex artificial intelligence applications, believes that bigger is better, at least for machine learning. How big is bigger, though? How about the largest chip ever made?

Called the Wafer Scale Engine (WSE), the gargantuan chip measures 46,225 square mm (about 56.7 times the area of Nvidia's Tesla V100 server GPU) and integrates more than 1.2 trillion transistors. It packs 400,000 AI-optimized cores and 18 GB of on-chip SRAM, offering 9 PB/s of memory bandwidth and 100 Pbit/s of fabric bandwidth, and Cerebras claims it can cut processing times from months to mere minutes. Because an entire wafer serves as a single massive chip, the cores communicate over on-wafer links instead of slower chip-to-chip connections, which dramatically boosts interconnect speeds.

Cerebras explained at this year's Hot Chips conference that it uses a custom version of TSMC's 16 nm process that allows it to print a continuous design across the wafer, and that it had to add spare cores to work around the defects that inevitably occur during wafer production. Cooling such a large chip is no simple task, as the die carries a 15 kW TDP rating. Air cooling is clearly impractical at this scale, so Cerebras recommends a proprietary system of vertically mounted water pipes.

No pricing information has been revealed as of yet, but Cerebras mentioned that it will only ship the chip to a handful of customers willing to buy custom-made servers built around it.



Cerebras breaks trillion transistor count with chip larger than an iPad

Bogdan Solca, 2019-08-21 (Update: 2026-02-18)