In a somewhat unusual move, Apple has previewed new accessibility features coming to iOS 17 ahead of the operating system's official announcement. The marquee additions include Live Speech and Personal Voice for speech accessibility, Assistive Access to support users with cognitive disabilities, and a new Detection Mode in Magnifier to assist users who are visually impaired.

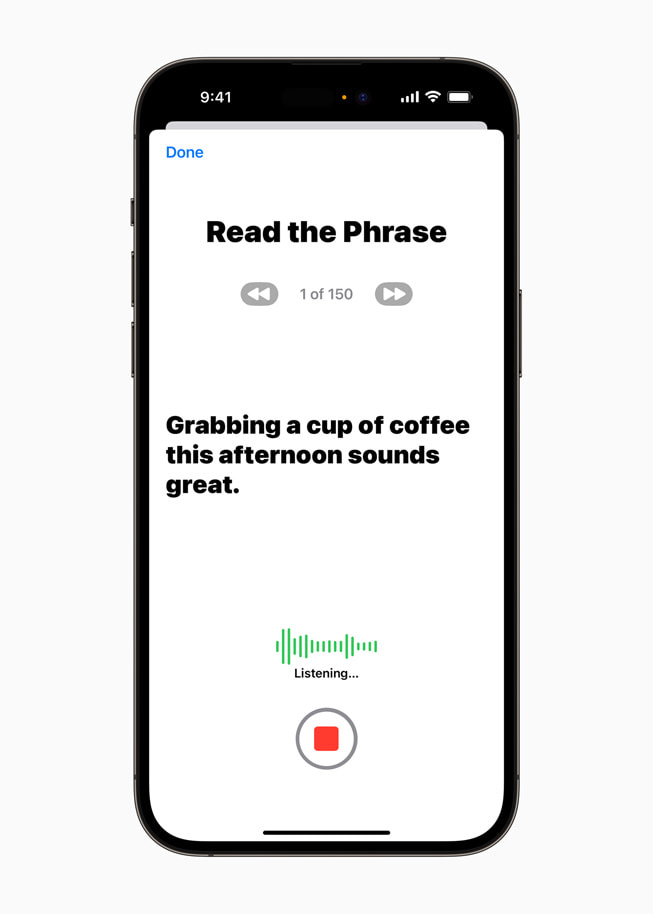

Perhaps the most futuristic of these is Personal Voice, which uses on-device machine learning (AI) to allow users at risk of losing their ability to speak (such as someone recently diagnosed with ALS) to create a voice that sounds like them. Users create their Personal Voice by reading along with a randomized set of text prompts, a process that takes around 15 minutes and trains the AI model to recreate their voice. Personal Voice integrates with the new Live Speech feature, which lets users type what they want to say and have it spoken aloud during a phone call, a FaceTime call or an in-person conversation.

Assistive Access is designed to distill popular iPhone apps down to their most essential features, making them easier to use and interact with. Apple worked with users with cognitive disabilities and their supporters on the changes, which combine Phone and FaceTime into a single Calls app and streamline Messages, Camera, Photos and Music. The aim is to reduce the cognitive load on users so they can get things done, or simply enjoy their iPhone, with substantially less hassle.

Apple has also previewed Detection Mode in the Magnifier app, another AI-powered tool. It introduces Point and Speak, which makes it easier for users who are visually impaired to interact with physical objects around them that carry several text labels. For example, when using a household appliance like a microwave oven, Point and Speak combines inputs from the camera and the LiDAR Scanner to read out the text on each button as users move their fingers across the keypad. Point and Speak also works with People Detection, Door Detection and Image Descriptions to help users more easily navigate their environments.

While these early previews of accessibility features coming to iOS 17 suggest that WWDC 2023 will be packed with announcements, it is clear Apple also wants to show that it is not being left behind when it comes to introducing AI-powered features to its products.
