A general rollout of iOS 15.2 is expected to reach eligible iPhones soon. It may include the first of a set of new features that have been criticized for their potential effects on privacy and freedom from surveillance. However, Mark Gurman now reports that these features have been modified in ways that appear intended to address those concerns.

The update in question concerns the Messages app. In iOS 15.2, it partly implements the measures that the Cupertino giant has described as an attempt to curb child sexual abuse material (CSAM). The feature can scan images on devices registered as belonging to children and detect nudity.

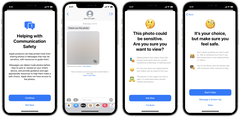

If such a 'nude' is detected, the image appears blurred in the app. The user is then shown a screen asking them to confirm that they want to see it, followed by another with options that include messaging a "grown-up" about it.

Despite the potentially pervasive nature of the AI intervention involved, Gurman says that the feature will be "opt-in and on-device." In addition, iOS 15.2 is now expected to offer the expanded "privacy report," which should show which apps have accessed hardware and sensors such as the microphone, camera and location services, as well as per-website network activity.

The update might also introduce Digital Legacy, which lets users nominate people who will be granted access to their accounts after their death. It may also bring Hide My Email, which lets iCloud+ subscribers send messages from a random address rather than their own.

iOS 15.2 betas have been in circulation for some time, so the stable public over-the-air (OTA) release can't be far off.
