Project Hail Mary makes a strong case for physical buttons in tech

Anubhav Sharma

THIS ARTICLE CONTAINS SPOILERS

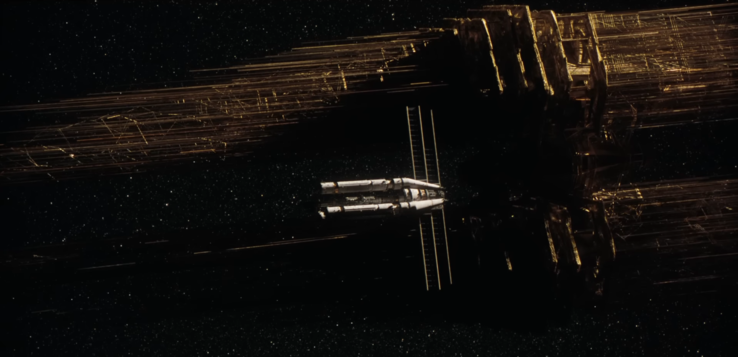

When Ryland Grace wakes up on the Hail Mary, the first thing he sees isn't a glass cockpit or a holographic interface. Instead, the ship's interior is packed with toggle switches, physical levers, and buttons that require actual force to operate. This design choice by Phil Lord and Christopher Miller isn't merely a nod to the "used future" vibes of Ridley Scott’s Alien or Christopher Nolan's Interstellar. As I was watching Project Hail Mary, I couldn't ignore the importance of these controls as an anchor for a character who has lost his memory and needs the world to feel "real" again. In a high-stakes environment such as this, where a random software glitch or a cracked display could mean the end of human existence, the film presents physical hardware as the ultimate form of reliability. Let me tell you why I think so, from the perspective of someone who hasn't even read the book (yet).

An FYI: Plenty of other movies have done this in the past, and I've referenced some of them above. Project Hail Mary is just the newest one on the list, that's all.

The technical setup of the Hail Mary is a big "screw you" to current aerospace trends. If you look at the SpaceX Dragon capsule, the interface is almost entirely touchscreen-driven. While this looks sleek and minimal on a livestream or in press photos, it lacks the haptic certainty needed during an emergency. In the movie, Grace often operates controls without looking at them, relying on pure muscle memory and the "clicks" of mechanical switches. The closest real-world example I can think of is the Boeing Starliner, which has more physical switches for critical systems than its competitors.

For a character struggling with amnesia, the world needs to feel visceral, and the film leans on physical hardware to deliver that. The movie even extends this logic to the alien technology. Rocky’s ship, despite being built from exotic materials like xenonite, appears to use vibrational and musical interfaces (I'm not entirely sure, but that's what they looked like) to give its pilot constant feedback.

Not that we need hard evidence to prove something this basic, but real-world data backs the "old-school" approach in Project Hail Mary quite well. According to a pilot interview published by Hush-Kit, F-35 "Panther" pilots have reported a miss rate of nearly 20% when attempting to use touchscreens under high-G loads or during heavy turbulence. The same struggle shows up in academic research, specifically a late-2025 study available on ResearchGate titled "The Impact of Tangibility in the Input of Secondary Car Controls". The data showed that 80% of participants committed input errors when using touchscreens in high-stress simulations. On the other hand, there was a far lower 20% error rate for those who used physical buttons.

This performance gap has major consequences for everyday safety and efficiency. A benchmark test by the Swedish publication Vi Bilägare demonstrated that performing basic tasks in a button-heavy 2005 Volvo took just 10 seconds, while the same operations on a modern touchscreen-only interface stretched to 44.6 seconds. That is more than four times as long spent distracted, which is exactly the kind of problem behind Euro NCAP's updated safety protocols for January 2026. The organization now officially penalizes manufacturers that fail to provide physical controls for essential functions like wipers, turn signals, and hazard lights. The industry is beginning to realize that "clean" design is, more often than not, a front for inefficiency. Even Apple and Tesla have begun adding physical controls back into their latest hardware. Some things simply shouldn't be hidden in a menu.
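For anyone who wants to check the math on that gap, here's a minimal sketch using only the two timings quoted above. The function and variable names are my own for illustration, not anything from the Vi Bilägare test itself.

```python
# Task times as quoted in the article, in seconds.
BUTTON_CAR_SECONDS = 10.0        # button-heavy 2005 Volvo
TOUCHSCREEN_CAR_SECONDS = 44.6   # touchscreen-only interface


def slowdown_factor(baseline_s: float, other_s: float) -> float:
    """Return how many times longer other_s is than baseline_s."""
    if baseline_s <= 0:
        raise ValueError("baseline time must be positive")
    return other_s / baseline_s


def extra_seconds(baseline_s: float, other_s: float) -> float:
    """Return the additional time spent, in seconds."""
    return other_s - baseline_s


factor = slowdown_factor(BUTTON_CAR_SECONDS, TOUCHSCREEN_CAR_SECONDS)
extra = extra_seconds(BUTTON_CAR_SECONDS, TOUCHSCREEN_CAR_SECONDS)

# Prints: 4.46x as long, 34.6 extra seconds with eyes off the road
print(f"{factor:.2f}x as long, {extra:.1f} extra seconds with eyes off the road")
```

So the touchscreen-only car comes out at roughly 4.5 times the button car's time, or a little over half a minute of extra attention taken away from driving.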

When you use a touchscreen, your brain has to dedicate visual resources to make sure your finger is hovering over the correct pixel. In Project Hail Mary, by contrast, Grace can keep his eyes on the Petrova Task data while his hand moves around to find the cooling system lever, freeing up one sense entirely. It's a level of human-machine synergy we've seen in movies like 2001: A Space Odyssey, but one that we’ve largely forgotten in the pursuit of making everything look like an iPad (no shade here). Even TARS from Interstellar was a physical, blocky unit that interacted with the world through movement and weight, rather than just being a voice in a speaker. When tech becomes too ethereal, we kind of lose our sense of agency over it.

The main takeaway for those of us not currently on a mission to Tau Ceti is that our tools should work for us, not the other way around. If a film about one of the most advanced spacecraft ever built tells us that a physical dial is the pinnacle of technology, perhaps we should stop accepting the "death of the button" in our laptops and cars. Tactile feedback is more than just satisfying clicks; it’s about removing the friction between a human thought and a mechanical action. As opinions go, this might be a hill worth dying on: the most advanced UI is the one you can operate with your eyes closed.

And let's be honest, Rocky would NOT have been able to turn off the centrifuge spin to save Grace if it had been a touchscreen instead of a lever. Just saying.

Source(s)

Own, Amazon MGM Studios (YouTube), Team-BHP, Car and Driver, ResearchGate, Hush-Kit