Earlier this week at GTC Japan 2018, Nvidia CEO Jensen Huang presented the first Tesla real-time inference accelerator based on the Turing architecture. Built around the Turing Tensor Cores, which Nvidia touts as bringing its latest deep learning and neural network advancements, the new Tesla T4 GPU is aimed at accelerating a diverse array of modern AI applications.

According to Nvidia, the new Tesla T4 cards are designed to offer maximum efficiency for scale-out servers. For this purpose, the GPUs come packaged in an energy-efficient 75-watt PCIe form factor. The Tesla T4 is also optimized for hardware video transcoding workloads and is meant to speed up online video analysis. In this respect, the T4 can decode up to 38 concurrent 1080p video streams, offering increased performance for smart video services based on deep learning frameworks.

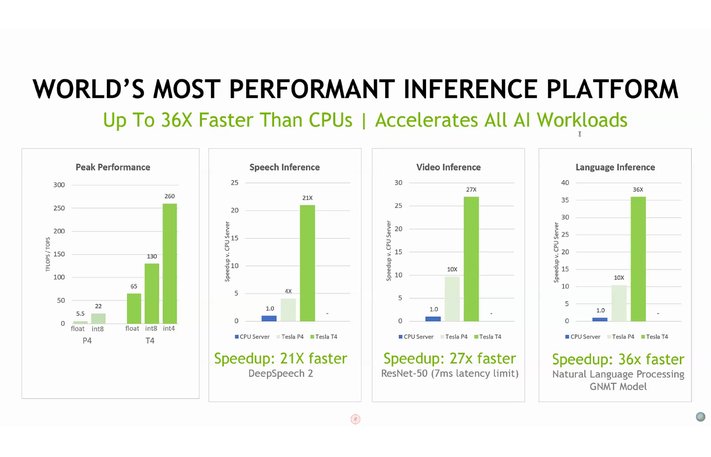

Even though the Tesla T4 has a maximum TDP of 75 W, the hardware specifications are nothing to sneeze at. It integrates a TU104 GPU (similar to the one found in the RTX 2080 gaming cards) with 2560 CUDA cores and 320 Tensor Cores, and it packs 16 GB of GDDR6 VRAM that allows for up to 320 GB/s of bandwidth. With these specs, the card is rated for up to 8.1 TFLOPS of FP32, 65 TFLOPS of FP16, 130 TOPS of INT8 and 260 TOPS of INT4 performance. The small form factor and the reduced TDP make it easy for enterprise clients to add these cards to 1U and 4U rack servers.
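To put the quoted figures in perspective, the short Python sketch below works out throughput per watt and the gain of each reduced-precision mode over FP32. It uses only the numbers stated above; the helper names `per_watt` and `speedup_over_fp32` are made up for illustration, and the results are theoretical peaks at the full 75 W TDP, not measured inference performance.

```python
# Peak figures quoted in the article for the Tesla T4.
TDP_WATTS = 75
PEAK_THROUGHPUT = {
    "FP32": 8.1,    # TFLOPS
    "FP16": 65.0,   # TFLOPS
    "INT8": 130.0,  # TOPS
    "INT4": 260.0,  # TOPS
}


def per_watt(throughput, watts=TDP_WATTS):
    """Tera-operations per second delivered per watt of TDP (illustrative helper)."""
    return throughput / watts


def speedup_over_fp32(precision):
    """Ratio of a precision's peak rate to the FP32 peak rate (illustrative helper)."""
    return PEAK_THROUGHPUT[precision] / PEAK_THROUGHPUT["FP32"]


for name, value in PEAK_THROUGHPUT.items():
    print(f"{name}: {per_watt(value):.2f} T(FL)OPS per watt, "
          f"{speedup_over_fp32(name):.1f}x the FP32 rate")
```

Each step down in precision from FP16 to INT8 to INT4 doubles the quoted rate, while FP16 on the Tensor Cores comes in at roughly eight times the FP32 figure.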

Availability and price information are unknown for the time being.


I first stepped into the wondrous IT&C world when I was around seven years old. I was instantly fascinated by computerized graphics, whether they were from games or 3D applications like 3D Max. I'm also an avid reader of science fiction, an astrophysics aficionado, and a crypto geek. I started writing PC-related articles for Softpedia and a few blogs back in 2006. I joined the Notebookcheck team in the summer of 2017 and am currently a senior tech writer mostly covering processor, GPU, and laptop news.


Bogdan Solca, 2018-09-14 (Update: 2026-02-18)