Nvidia’s stranglehold on AI accelerators is due largely to software: most AI applications have been written against the CUDA libraries. AMD’s ROCm platform is a viable alternative, but few software developers are willing to recode from scratch. Thanks to AMD's backing over the past few years, there is now a solution that lets CUDA code run on top of ROCm: an open-source project called ZLUDA.

The developers of ZLUDA started out in 2020 by bringing CUDA to Intel’s GPUs, but the effort ran into technical difficulties and was put on indefinite pause. In 2022, AMD contacted the head of the project, Andrzej Janik, and until recently ZLUDA focused on Radeon GPUs. For unknown reasons, however, AMD decided to stop funding the project and terminated its contract with Janik a few months ago. Fortunately, the contract included a clause allowing Janik to publish the code as open source if it was terminated.

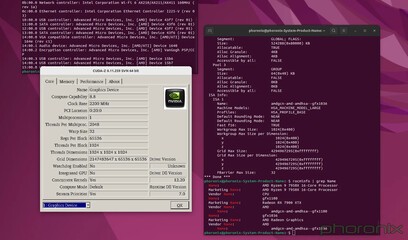

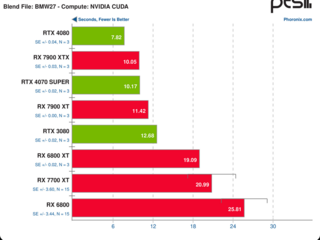

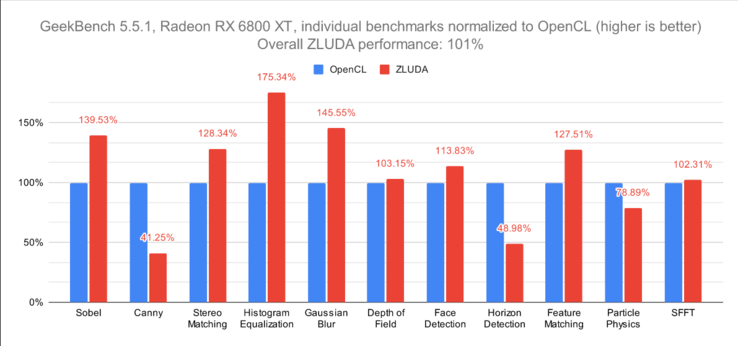

Tests conducted by Phoronix suggest that CUDA applications can run at close to native performance on ZLUDA with no recoding required. As Phoronix notes, even proprietary CUDA renderers can now work on Radeon GPUs. Some features are still not fully supported, such as Nvidia OptiX and PTX assembly code. The project is dual-licensed under Apache 2.0 and MIT, and it is written in the Rust programming language.

While AMD might not provide official support for CUDA, developers can now use ZLUDA on AMD GPUs, including the Instinct MI300 AI accelerators. If third-party developers continue to improve ZLUDA until it fully supports all CUDA features, we might soon see increased demand for AMD’s GPUs as an alternative to Nvidia’s AI accelerators, which are now very hard to procure.
