The not-so-Universal Serial Bus

The "U" in USB stands for "universal," so, cynically speaking, it's only fitting that there are several versions with various differences, which may or may not be backward- and cross-compatible, and which might even interfere with the performance of unrelated devices or compromise security. If you've ever been flustered by, or simply given up on, the seemingly arbitrary way USB protocols (among other features) are marketed, you're not alone; plenty of unscrupulous online resellers have no qualms about turning that confusion into a sale. One of the first and most obvious disconnects is between the version of the standard, such as 2.0 or SuperSpeed, and the physical plug form factor, such as Micro-USB or Type-C. Separating these two parts of the USB standard is the first step to demystifying the naming scheme.

There are currently three "Types" of USB plug formats on the market, known as A, B, and C, and Type-A and Type-B are further divided into subtypes such as Mini and Micro. Most consumers run into three formats far more often than the rest: Type-A, Micro-B, and Type-C, though others are still commonly found in the wild. Type-A, of course, shares its form factor with the earliest USB plugs. There is also a Micro-A plug, but Micro-B is the variant that caught on. As of 2020, Micro-B is still used by a huge range of portable electronics, but it's slowly losing ground to the newer Type-C connector.

While Types A and B are incredibly widespread across all sorts of devices, they have a few drawbacks. Most obviously, they're not reversible; Micro-B has also been criticized for its durability, and both of the common plug formats have just about reached their bandwidth limits. USB-C blows right past all three of those limitations, offering significantly increased bandwidth (which we'll talk about shortly), improved durability, and reversibility in all situations. Its only major drawback is that it has been somewhat slow to be adopted, given the ubiquity of the older plugs, but it's becoming easier and easier to find your favorite gadgets equipped with this versatile connector.

The physical configuration is, of course, only half of the issue. The standards used to communicate over this cord-and-connector combo have also continued to evolve, from the ultra-common USB 2.0 (with a ceiling of 480 Mbps) to USB 3.0, also known as SuperSpeed USB, also known as USB 3.1 Gen 1 (and, more recently, USB 3.2 Gen 1), which offers up to 5 Gbps, a little more than 10 times the speed of 2.0. Confused? So is everybody else.
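
To put those numbers in perspective, here is a small Python sketch that compares the nominal signaling rates and works out a best-case transfer time for a hypothetical 10 GB file. The rates are raw line rates; real-world throughput is noticeably lower once encoding and protocol overhead are included, so treat the results as upper bounds rather than benchmarks.

```python
# Nominal signaling rates in megabits per second (Mbps).
# These are raw line rates; actual throughput is lower because of line
# encoding (8b/10b at 5 Gbps, 128b/132b at 10 Gbps) and protocol overhead.
USB_RATES_MBPS = {
    "USB 2.0 (Hi-Speed)": 480,
    "USB 3.0 / 3.1 Gen 1 / 3.2 Gen 1": 5_000,
    "USB 3.1 Gen 2 / 3.2 Gen 2": 10_000,
}


def ideal_transfer_seconds(file_gigabytes: float, rate_mbps: float) -> float:
    """Best-case time to move a file at the raw line rate (1 GB = 8,000 megabits)."""
    return file_gigabytes * 8_000 / rate_mbps


# 5,000 / 480 works out to about 10.4x, not an even 10x.
ratio = USB_RATES_MBPS["USB 3.0 / 3.1 Gen 1 / 3.2 Gen 1"] / USB_RATES_MBPS["USB 2.0 (Hi-Speed)"]
print(f"5 Gbps vs 480 Mbps: {ratio:.2f}x")

# Hypothetical 10 GB file, ignoring all overhead.
for name, rate in USB_RATES_MBPS.items():
    print(f"{name}: {ideal_transfer_seconds(10, rate):.1f} s for a 10 GB file")
```

The `ideal_transfer_seconds` helper is purely illustrative; expect real copies to take noticeably longer.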

What not everyone is aware of, however, is one of the few negatives that actually popped up with USB 3.0. SuperSpeed ports, cables, and hubs can emit enough electrical noise to disrupt 2.4-GHz wireless transmissions, which is something to keep in mind if you're connecting any kind of wireless controller to or near a SuperSpeed USB port. Generally speaking, the better a product's engineering and construction, the better its shielding will be and the less interference you'll experience, but with some devices you'll still need to be aware of the issue.

USB 3.1 Gen 2, which the USB Implementers Forum (USB-IF) has since rebranded as USB 3.2 Gen 2, doubles the bandwidth to 10 Gbps, and although it's not terribly popular, it is natively supported by a variety of motherboards.

In the course of advancing both plug form factors and transmission protocols, Type-C has emerged as the obvious future of the standard. It combines the durability of Type-A with the compact size of Micro-B, and, of course, it's reversible. It works with all the older USB protocols (older plugs just need an adapter or a different cable), and it's used by the increasingly popular Thunderbolt 3 standard, which offers a whopping 40 Gbps of throughput. Interestingly, although Intel originally developed Thunderbolt 3, it has pledged to drop the proprietary licensing, lending the Thunderbolt 3 protocol to the development of the public USB 4 standard, which will presumably keep that 40 Gbps bandwidth ceiling as well as the Type-C connector. This also gives Intel a pathway to Thunderbolt 4, which Intel has said will likewise run over Type-C with the same 40 Gbps ceiling.

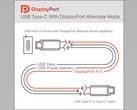

Type-C provides additional versatility in its ability to carry a variety of signals normally bound to other plug and cable formats. USB-C Alternate Mode ("Alt Mode") defines ways to carry both DisplayPort and HDMI signals, enabling video output through both dongles and direct USB-C to USB-C connections. Thunderbolt 3, and by extension the upcoming USB 4, takes this a step further by letting users with TB3- or USB 4-enabled ports connect combination monitors and USB 3.1 hubs to desktops and laptops without sacrificing any visual fidelity or USB 3.1 bandwidth. This is especially convenient for power users with high-end monitors, turning those advanced displays into de facto docking stations complete with USB-C Power Delivery.

Power Delivery, or USB PD, is an increasingly important standard that can deliver up to 100 watts of smart charging to a huge range of devices. That's a massive leap over the comparatively low power that Type-A and Micro-B ports typically supply, and many modern laptops can be powered solely by a USB PD charger, eliminating the need to carry hefty power bricks. The exact same connector and charging protocol can refuel a smartphone battery nearly as fast as, or just as fast as, the top-of-the-line OEM chargers made for that purpose. USB Power Delivery is yet another reason the Type-C connector is poised to completely take over the field in the coming years.
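
Power is simply voltage times current, and a Power Delivery charger advertises the voltage and current combinations it can supply so the device can pick one. The Python sketch below models that choice under heavy simplification: `FixedPDO` and `pick_profile` are hypothetical names, the charger's offers use the common 5, 9, 15, and 20 V fixed levels, and the cable's current rating (3 A for ordinary Type-C cables, 5 A for electronically marked ones) caps the result. Real devices go through the USB PD message exchange rather than this loop; this only shows the power arithmetic.

```python
from typing import List, NamedTuple, Optional, Tuple


class FixedPDO(NamedTuple):
    """Hypothetical, simplified 'fixed supply' offer: a voltage and its max current."""
    volts: float
    max_amps: float


def pick_profile(offers: List[FixedPDO], sink_max_volts: float,
                 cable_max_amps: float) -> Optional[Tuple[FixedPDO, float]]:
    """Return the offer giving the most power the device and cable can handle.

    Simplified sketch: a real sink sends a Request message and may weigh
    other factors; here we only compute watts = volts x amps.
    """
    best: Optional[Tuple[FixedPDO, float]] = None
    for pdo in offers:
        if pdo.volts > sink_max_volts:
            continue  # the device can't accept this voltage
        amps = min(pdo.max_amps, cable_max_amps)  # the cable caps the current
        power = pdo.volts * amps
        if best is None or power > best[1]:
            best = (pdo, power)
    return best


# A hypothetical 100 W charger advertising the standard fixed voltages.
charger = [FixedPDO(5, 3), FixedPDO(9, 3), FixedPDO(15, 3), FixedPDO(20, 5)]

scenarios = [
    ("laptop, 5 A e-marked cable", 20, 5),
    ("laptop, ordinary 3 A cable", 20, 3),
    ("device limited to 9 V", 9, 3),
]
for label, sink_v, cable_a in scenarios:
    pdo, power = pick_profile(charger, sink_v, cable_a)
    print(f"{label}: {pdo.volts} V offer -> {power} W")
```

The cable matters as much as the charger here: the same 100 W brick only delivers 60 W to a laptop through a cable rated for 3 A.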

Bluetooth marketing, caught red-handed?

Bluetooth is one of the most widely used and important features of the mobile experience, and like USB, it's used by a massive number of devices. Its most common application is wireless audio, which we'll get into shortly, but in a way, its most prominent consumer-facing aspect is its version number. The overwhelming majority of laptops, tablets, and smartphones fully implement at least version 4.0, and many have adopted version 4.2, even though it doesn't offer any noticeable improvements for everyday users. The blame for the state of today's Bluetooth marketing falls partly on the Bluetooth Special Interest Group, or SIG, partly on manufacturers, and partly on marketing departments.

You may have seen the term "Bluetooth 5.0" used to describe the capabilities of quite a few phones and laptops. The three most important improvements it offers over version 4.x are a more powerful physical layer (essentially, a more sensitive radio), faster data transmission, and optimized advertising methods that let devices discover each other, communicate, and connect more efficiently and reliably. When it comes to real-world usage, though, there's more than meets the eye, and it's not exactly consumer-friendly. To declare Bluetooth 5.0 support, a manufacturer isn't actually required to implement all of its features. As a result, although a wide range of new smartphones, and even some headphones and speakers, advertise Bluetooth 5.0 compatibility, it's often unclear which parts of the specification they've actually adopted. There's speculation that this is one reason big-name headphone manufacturers such as Sony and Bose have hesitated to embrace the version 5.0 branding.

Nonetheless, the implementation as a whole is significantly more effective than previous versions, and in many ways roughly twice as capable. On Bluetooth Low Energy links, it offers double the bandwidth, significantly increased range, and the ability to trade one for the other at predetermined rates in devices that need extra-high data rates or extra-long-range transmission (these particular gains don't carry over to classic Bluetooth audio streaming). Multi-point connections were already possible with select Bluetooth 4.0 devices, but many 5.0 devices go further and allow simultaneous transmission, as long as every link in the chain supports it. Version 5.0 also features enhanced security and allows for mesh connections between multiple devices, both of which are extremely meaningful for anyone who's into the Internet of Things and all the handy, fun, and weird creations that come with it. Of course, the most popular reason to use it will probably still be listening to music, which brings one more layer of confusing and sometimes misleading standards.
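
Bluetooth 5.0's speed-for-range trade-off comes from its Low Energy physical layers (PHYs): LE 2M doubles the raw rate of the original LE 1M mode, while LE Coded adds error-correcting coding (S=2 or S=8) that extends range at a lower rate. The Python sketch below, assuming a hypothetical 200-byte payload and ignoring all packet overhead, shows how airtime changes across those modes.

```python
# Raw symbol rates of the Bluetooth LE PHYs, in kilobits per second.
# LE Coded spends extra bits on forward error correction to gain range.
LE_PHY_KBPS = {
    "LE 1M (Bluetooth 4.x and 5)": 1000,
    "LE 2M (new in 5.0)": 2000,
    "LE Coded, S=2 (new in 5.0)": 500,
    "LE Coded, S=8 (new in 5.0)": 125,
}


def airtime_ms(payload_bytes: int, rate_kbps: float) -> float:
    """Time to clock a payload over the air at the raw PHY rate, ignoring overhead.

    bits / (kilobits per second) = milliseconds.
    """
    return payload_bytes * 8 / rate_kbps


PAYLOAD_BYTES = 200  # hypothetical payload size, chosen for illustration
for phy, rate in LE_PHY_KBPS.items():
    print(f"{phy}: {airtime_ms(PAYLOAD_BYTES, rate):.2f} ms")
```

Going from LE 2M to LE Coded S=8 stretches the same payload's airtime sixteenfold; that extra time on air is the price of the longer range.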

It's not uncommon to see audiophiles and tech enthusiasts bash Bluetooth in general for delivering poor audio quality. While it's true that Bluetooth can't technically match a wired connection, it's not nearly as simple as saying, "Bluetooth has bad sound quality." Audio transmission is governed by a codec (a portmanteau of coder and decoder), and several are in common use in the current Bluetooth ecosystem. The most basic, SBC, was designed to deliver passable audio quality without requiring much bandwidth. As a rule, every wireless audio device on the market supports SBC, but there is a noticeable drop in fidelity when using SBC compared with a wired connection or a more capable codec. Other codecs tend to use more efficient compression algorithms. The most popular include Qualcomm's aptX and aptX HD, which claim CD-like and better-than-CD audio quality, respectively, and AAC, the MPEG-standard codec favored by Apple, which subjective listening places somewhere around the quality of aptX or aptX HD.

One minor drawback of higher-bitrate codecs is that they need more bandwidth, which can lead to connection issues where the 2.4-GHz band is highly congested. Sony lobbied for native support of its LDAC codec, which Android has included since version 8.0 and which offers a significantly higher maximum bitrate than any other codec, but here's where it gets even trickier. LDAC offers three bitrates (330, 660, and 990 kbps), and at the highest rate it can suffer noticeably more stuttering and dropouts in congested areas. It does allow for dynamic rate adjustment, but technical measurements show that it isn't actually superior to aptX HD at its two lower rates: at 660 kbps, the audio quality is essentially a wash vs. aptX HD, and at 330 kbps, it's objectively worse, though not everyone will be able to hear a big difference. LHDC, backed by Huawei, is a direct competitor to LDAC, and Qualcomm's aptX Adaptive is slated to offer better dynamic rate scaling to better overcome congestion, but neither has been adopted by any major speakers or headphones as of yet.
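
A quick way to see that every Bluetooth codec, LDAC included, relies on lossy compression is to compare its bitrate with uncompressed PCM, whose rate is sample rate times bit depth times channels. This Python sketch does the arithmetic for CD audio (16-bit/44.1 kHz stereo) and 24-bit/96 kHz stereo; the ratios it prints are simple bitrate ratios, not a measure of perceived quality.

```python
def pcm_kbps(sample_rate_hz: int, bit_depth: int, channels: int = 2) -> float:
    """Uncompressed PCM bitrate in kilobits per second."""
    return sample_rate_hz * bit_depth * channels / 1000


cd = pcm_kbps(44_100, 16)       # CD audio: 1411.2 kbps
hi_res = pcm_kbps(96_000, 24)   # 24-bit/96 kHz stereo: 4608 kbps

# LDAC's three fixed bitrates, all below even CD's uncompressed rate.
for ldac_kbps in (330, 660, 990):
    print(f"LDAC {ldac_kbps} kbps: {cd / ldac_kbps:.1f}:1 vs CD, "
          f"{hi_res / ldac_kbps:.1f}:1 vs 24/96")
```

Even at 990 kbps, LDAC carries roughly 70% of CD's uncompressed bitrate, so it's a high-quality lossy codec rather than a lossless one.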

To really encapsulate the Bluetooth audio quality debate, here's one solid, if controversial, statement: for the overwhelming majority of users, Bluetooth is not only a completely suitable music listening solution, it can be entirely indistinguishable from a wired connection. As mentioned above, this doesn't hold true with the base-level SBC codec. To illustrate the point, connect a pair of high-quality headphones to a recent Android smartphone over Bluetooth, use the developer options to manually switch between codecs, and compare each one to wired sound quality. You'll very likely notice a difference between SBC and, for example, aptX HD, even if you don't have highly trained ears.

If your headphones support LDAC, though, you'll almost certainly be hard-pressed to hear a difference between top-level 990-kbps LDAC and aptX HD, and many listeners won't even distinguish it from standard aptX, as long as you're not surrounded by other devices with active Wi-Fi or Bluetooth connections. Add to that the fact that people rarely, if ever, use Bluetooth headphones in perfectly silent environments, and the need for a technically lossless wireless codec all but evaporates. Furthermore, most streaming services and audio collections simply don't provide a high enough bit depth or sample rate to take advantage of lossless connectivity.

This may not apply to the pickiest audiophiles, who use high-powered headphone amps and technology like planar magnetic or electrostatic drivers, but for most people, Bluetooth is absolutely sufficient and won't degrade audio fidelity in any noticeable way. You'll find myriad exposés all over the Internet measuring roll-off, frequency response consistency, and distortion, but remember that these objective measurements, taken with sophisticated microphones and debugging tools, don't translate directly to human ears and listening habits. So, in the end, it's safe to say that Bluetooth audio transmission is a reliable and useful technology, and while it's a shame that manufacturers often eliminate the headphone jack entirely, Bluetooth headphones and speakers are a suitable substitute for most people.

Bigger, faster, stronger HDMI

The High-Definition Multimedia Interface has been adopted across the entire home entertainment sector, and it's a very significant departure from the composite and component connections of yesteryear. Version 1.4, a major update released in 2009, has become ubiquitous among TVs, home theater receivers, game consoles, and graphics cards, and it supports resolutions up to 3,840 by 2,160 at 30 hertz. That accommodates much of the content major studios still produce, in part because most films and many TV shows are still made at the long-established 24 FPS standard.

Sure, you'll find enthusiasts who claim that high-frame-rate entertainment is inarguably better, but when it comes to movies and TV shows, it's unequivocally not. For one thing, no artificial lighting rig in the world can accurately simulate sunshine and other real-life lighting, and at high frame rates, this becomes considerably more noticeable. Second, when you're watching a film or TV show, what you don't pay attention to is almost as important as what you do see and focus on. High-frame-rate filming makes background details and artificial lighting so noticeable that they remind viewers they are, in fact, watching actors perform on a set. If you don't believe that, just watch The Hobbit in its 48 FPS presentation and honestly consider whether it's more immersive than a traditional 24 FPS production.

On the other hand, that certainly does not apply to animated or computer-generated graphics. Where live-action films such as The Hobbit look strange and unreal at 48 FPS, animated titles like How to Train Your Dragon look absolutely stellar when interpolated to 60 FPS. Similarly, broadcast real-world events - in particular, live sporting events of every kind - also look fantastic when viewed at 60 Hz. To reach 60 Hz at 4K, though, you'll need to make sure you have an HDMI 2.0 input on your TV or monitor. Also, naturally, it's impossible to argue that PC games aren't significantly more enjoyable at 120 FPS than at 60 FPS.

So, despite its irrelevance to some media formats, the industry presses ever forward, and HDMI 2.1, with a handful of important changes, is just over the horizon. Granted, as of this writing, no HDMI 2.1 sources are actually available, but with the announcement of Sony's and Microsoft's next-gen consoles, the need for the improved standard is apparent. Ideally, we'll also see HDMI 2.1 outputs on Nvidia's RTX 3000 and AMD's Big Navi next-generation PC GPUs, which would let computer gamers take better advantage of modern 4K TVs than ever, especially since the HDMI Forum and its members have no interest in adding DisplayPort connectivity, and its currently superior compression, to TVs anytime soon.

HDMI 2.1 offers several distinct and important upgrades over 2.0, and although, as with Bluetooth 5.0, manufacturers aren't required to implement all of them, even one or two can make a difference for many consumers. First and foremost is High Frame Rate support, which allows 4K resolutions at up to 120 hertz thanks to the jump in bandwidth from 18 Gbps in HDMI 2.0 to 48 Gbps in 2.1. That's also enough for 8K at 60 hertz (with chroma subsampling or Display Stream Compression), although 8K video will probably remain a niche technology for quite some time due to the high cost of 8K displays and the lack of available media.
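
The bandwidth jump is easiest to appreciate with some back-of-the-envelope arithmetic. The Python sketch below computes the raw pixel data rate for 4K at 30, 60, and 120 Hz and 8K at 60 Hz, assuming 8-bit RGB (24 bits per pixel), and compares it with each link's approximate usable rate after line encoding (8b/10b for HDMI 2.0, 16b/18b for HDMI 2.1). It deliberately ignores blanking intervals and audio, so real requirements are somewhat higher; it's an estimate, not a compliance check.

```python
def raw_gbps(width: int, height: int, hz: int, bits_per_pixel: int = 24) -> float:
    """Active pixel data only: no blanking intervals, audio, or encoding overhead."""
    return width * height * hz * bits_per_pixel / 1e9


# Approximate usable payload after line encoding (a simplification):
# HDMI 2.0 (TMDS) uses 8b/10b, HDMI 2.1 (FRL) uses 16b/18b.
USABLE_GBPS = {"HDMI 2.0": 18 * 8 / 10, "HDMI 2.1": 48 * 16 / 18}

MODES = {
    "4K @ 30 Hz": (3840, 2160, 30),
    "4K @ 60 Hz": (3840, 2160, 60),
    "4K @ 120 Hz": (3840, 2160, 120),
    "8K @ 60 Hz": (7680, 4320, 60),
}

for name, (w, h, hz) in MODES.items():
    need = raw_gbps(w, h, hz)
    fits = [link for link, cap in USABLE_GBPS.items() if need <= cap]
    verdict = ", ".join(fits) if fits else "neither (needs chroma subsampling or DSC)"
    print(f"{name}: {need:.1f} Gbps -> fits {verdict}")
```

Under these assumptions, 4K at 120 Hz overflows HDMI 2.0 but fits comfortably in HDMI 2.1, while 8K at 60 Hz at full 8-bit RGB overflows even HDMI 2.1's usable rate.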

Of particular importance to gamers is the industry-standard Variable Refresh Rate, or VRR, which promises a more consistent and reliable alternative to the vendor-specific G-Sync and FreeSync technologies offered by Nvidia and AMD. Variable refresh rates eliminate the need to lock the frame rate with Vsync: the display refreshes whenever a new frame is ready, which keeps frame transitions smooth as the frame rate fluctuates, preventing screen tearing and reducing stutter. It remains to be seen whether Nvidia's and AMD's new GPUs will support HDMI-standard VRR, but desktop gamers remain extremely hopeful. Also important to fans of interactive entertainment is Auto Low Latency Mode, which detects connections to compatible consoles and other devices and switches the display to settings that minimize input lag without noticeably compromising image quality.
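
The difference between Vsync and VRR can be shown with a deliberately simplified timing model. With a fixed 60 Hz refresh and Vsync, a frame stays on screen for a whole number of refresh intervals, so a frame that misses a refresh by a millisecond or two is held twice as long; with VRR, the display simply waits for the frame, limited only by its maximum refresh rate. The Python sketch below uses hypothetical frame times and ignores buffering details and the lower limit of a real VRR range.

```python
import math


def vsync_hold_ms(render_ms: float, refresh_hz: float) -> float:
    """Fixed refresh + Vsync: a frame is held for a whole number of refresh intervals."""
    interval = 1000 / refresh_hz
    return math.ceil(render_ms / interval) * interval


def vrr_hold_ms(render_ms: float, max_refresh_hz: float) -> float:
    """VRR (simplified): refresh as soon as the frame is ready, but no faster than max_refresh_hz."""
    return max(render_ms, 1000 / max_refresh_hz)


render_times = [15.0, 18.0, 22.0, 17.0]  # hypothetical GPU frame times in ms
for t in render_times:
    print(f"render {t:4.1f} ms -> Vsync {vsync_hold_ms(t, 60):5.2f} ms, "
          f"VRR {vrr_hold_ms(t, 60):5.2f} ms")
```

In this model, Vsync makes on-screen frame times jump between about 16.7 and 33.3 ms, which is perceived as stutter, while VRR tracks the GPU's actual frame times.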

One very promising part of HDMI 2.1, Dynamic HDR metadata, can also be traced to increased bandwidth and decreased latency. HDR is, at present, not nearly as effective or seemingly magical as it's made out to be, for a number of reasons, including the failure of even high-end local dimming to perform at the desired level. Dynamic metadata aims to help by letting displays adjust brightness almost instantly on a scene-by-scene or even frame-by-frame basis, which, along with the imminent arrival of Mini-LED and MicroLED panels, could push HDR into the mainstream as a technology that's actually worth investing in. Finally, home theater buffs will appreciate Enhanced Audio Return Channel, or eARC, which greatly increases ARC's bandwidth, allows for uncompressed 7.1-channel surround sound without requiring an extra cable, and adds a more efficient device handshake for improved ease of use.
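
eARC's headroom is easy to check with PCM arithmetic (channels times sample rate times bit depth). The Python sketch below compares stereo PCM, the kind of uncompressed audio the original ARC was designed around alongside compressed formats such as Dolby Digital, with eight-channel 7.1 PCM. eARC is commonly cited at roughly 37 Mbps of audio bandwidth, which the 24-bit/192 kHz 7.1 case fits just under.

```python
def pcm_mbps(channels: int, sample_rate_hz: int, bit_depth: int) -> float:
    """Uncompressed PCM bitrate in megabits per second."""
    return channels * sample_rate_hz * bit_depth / 1e6


print(f"Stereo, 16-bit/48 kHz:  {pcm_mbps(2, 48_000, 16):.3f} Mbps")
print(f"7.1, 24-bit/48 kHz:     {pcm_mbps(8, 48_000, 24):.3f} Mbps")
print(f"7.1, 24-bit/192 kHz:    {pcm_mbps(8, 192_000, 24):.3f} Mbps")
```

Even the most demanding case here stays under eARC's cited ceiling, which is why lossless 7.1 no longer needs a separate cable to the receiver.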

The future of connectivity

Do you have experience with brand-new USB-C devices? We're particularly interested in how well the variously branded chargers and hubs work with high-draw USB-C devices that rely on Power Delivery, and how quickly USB PD charges high-end smartphones. We'd also love to hear how absurd you find the idea that Bluetooth actually provides great audio quality that most users will be quite happy with. In our experience, the objective measurements provided by diehard anti-wireless audiophiles don't always accurately represent real-world listening, but as always, we're excited to see evidence to the contrary. Of course, we're painfully aware of the issues that platforms like Windows often have connecting with even high-end Bluetooth devices. We're also extremely curious how you feel about HDMI 2.1 adoption by mainstream sources like consoles and next-gen GPUs. Please let us know in the comments what you expect from all of these new and constantly advancing standards!