Nvidia has launched its latest Grace Hopper superchip, designed for data centers running generative AI and other accelerated computing workloads. Nvidia still makes Arm-based chips for consumer products in various forms, but it pivoted some years ago toward prioritizing Arm-based server SoCs. Its Grace Hopper platform pairs those server SoCs with the company's server-class GPUs for further acceleration. The bet has paid off handsomely with the rise of generative AI technologies such as ChatGPT and Midjourney, which has helped send Nvidia's share price up around 200 percent since the start of the year.

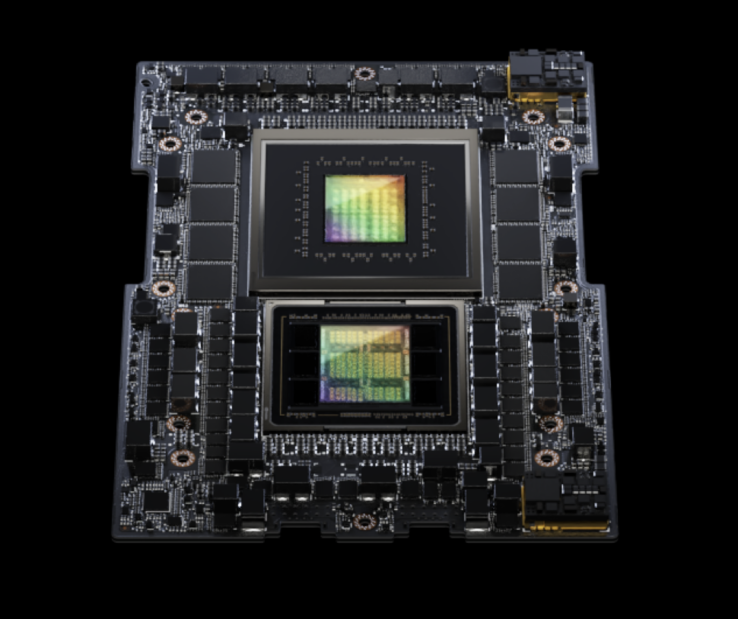

In its dual configuration, the new GH200 platform packs 144 Arm Neoverse V2 cores. As with the original Grace Hopper superchip, the CPU (Grace) is paired with Nvidia's H100 Tensor Core GPU (Hopper). The H100 GPUs deliver at least 51 teraflops of FP32 compute, and the combined GH200 platform is rated at an impressive 8 petaflops of AI performance.

The GH200 keeps the same silicon architecture, so Nvidia reached these new performance levels by upgrading the memory to 282GB of the latest HBM3e. HBM3e is 50 percent faster than standard HBM3, and the dual configuration offers a combined 10TB/sec of bandwidth. This lets the GH200 handle models 3.5x larger than the first-generation chip could, and makes it the world's first processor to feature HBM3e memory.
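The headline numbers above describe the dual configuration as a whole. As a rough illustration of what they imply for a single superchip, the Python sketch below simply divides each total by two. It assumes the figures are split evenly across the two GH200 superchips; the names `DUAL_CONFIG`, `SUPERCHIPS` and `per_superchip` are hypothetical and exist only for this example.

```python
# Headline figures for the dual GH200 configuration, as quoted in the article.
DUAL_CONFIG = {
    "arm_cores": 144,
    "hbm3e_gb": 282,
    "bandwidth_tb_per_s": 10,
}

# Assumption: the totals are split evenly across two GH200 superchips.
SUPERCHIPS = 2


def per_superchip(totals: dict, count: int = SUPERCHIPS) -> dict:
    """Divide each aggregate figure evenly across `count` superchips."""
    return {name: value / count for name, value in totals.items()}


if __name__ == "__main__":
    for name, value in per_superchip(DUAL_CONFIG).items():
        # :g drops the trailing ".0" so whole numbers print cleanly
        print(f"{name}: {value:g}")
```

Under that even-split assumption, each superchip works out to 72 Arm cores, 141GB of HBM3e and about 5TB/sec of memory bandwidth.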

Nvidia also says the Grace Hopper platform is scalable: additional Grace Hopper superchips can be connected using Nvidia's NVLink interconnect. Coherent memory, shared between the CPUs and GPUs, can reach up to 1.2TB in the dual configuration. Nvidia expects leading server makers to deliver GH200-based systems, in more than 100 server variations, in Q2 2024.
