How LED displays work

To understand how HDR works, it helps to have some basic knowledge of how modern LED displays work. As our more technically knowledgeable readers will notice, this explanation is heavily simplified, because a fundamental understanding is all that's really needed to get the gist of HDR. LCD panels, which have been the leading panel type for a while (and likely will be for some time), consist of layered components that each fill a very specific role.

The first link in the chain is the backlight, which is located either at the very rear of the panel, or in edge-lit TVs, on the sides, where the light is gathered and transmitted to fill the entire area of the screen using a series of guides. Basic or budget displays have a single-zone backlight that can only be dimmed and brightened all at once.

From the backlight, the light travels through a layer of what's called liquid crystal. To illustrate it in non-technical terms, this layer essentially contains a super-fine mesh laid between two ultra-thin transparent panes. Rather than a consistent square mesh, it's divided into sub-pixels, which we'll get right back to in just a second. This mesh, however, is where the actual "liquid crystal" comes into play. In each of what are basically tiny windows, there's a minuscule bit of matter that exhibits properties of both liquids and solids.

Depending on the type of LCD, these "crystals" (which, by the way, are technically neither crystals nor liquids) hold one shape when there's no electric charge present and change shape almost immediately when the appropriate level of charge is applied. The three main types are vertical alignment or VA, twisted nematic or TN, and in-plane switching or IPS, and they each have advantages and disadvantages in terms of viewing angles, pixel response time, color gamuts, refresh rates, and contrast levels. Ultimately, though, they all accomplish the same goal. Based on information from the graphics processor, they allow more or less light through the layer to determine the specific brightness of each pixel from frame to frame.

After passing through the liquid crystal layer, light moves through color filters divided into red, green, and blue subpixels. You've probably heard of a newer technology called quantum dot filtration in the form of Samsung's popular QLED televisions and monitors, as Samsung was the first to bring it to the forefront of consumer products. Quantum dot enhancement layers actually take in the light emitted through the color filters and reproduce it in a way that's more true to the color data itself. The result is clearer and more vibrant colors as well as a reduction, in many cases, of the dreaded "backlight bleed" that turns black into gray and reduces effective contrast. It's not a perfect system, but it does provide a categorical increase in picture quality when implemented properly.

With all that said, there's a less pervasive but very interesting technology called OLED that's gaining more and more popularity. To be clear, LED stands for "light emitting diode," and with regard to LCD displays, it refers to the backlight itself, which is made of LEDs. Organic light emitting diodes add different organic substances - that is, certain substances containing carbon - to the subpixel mix, minimizing or eliminating the need for the color filters used by LCD panels. OLED technology does away with the backlight by using pixels that emit their own light, which is why OLED panels are called emissive displays. Because of this, each individual pixel is able to shut off entirely, which leads to impressively deep black levels for OLED screens that LCD displays can't quite match.

Because black levels are so important to contrast, this does offer OLEDs an edge in some ways as far as HDR is concerned. However, OLED brightness is not yet able to compete with LCD brightness at the high end, so as with many electronics, there are advantages and disadvantages to both. It should also be noted that some OLED screens, especially those on TVs, don't actually produce all the colors via subpixels and still use color filters similar to those used by LCDs. Smaller displays such as those on smartphones may use RGB subpixels, which can produce bolder and more accurate colors, but TV panels do tend to use color filters. By and large, however, OLEDs usually offer faster pixel response and lower input lag as compared to LCDs.

So what is high dynamic range?

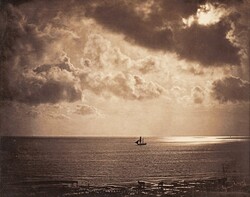

Dynamic contrast, and its modern digital implementation high dynamic range, aren't new as of the 21st century - at least conceptually speaking. It's all about the range of luminance: photographers as far back as 1856 were taking multiple still shots of a scene at varying levels of exposure, then combining them to create a lifelike image that otherwise wouldn't have been possible using the technology of the day. In fact, many famous works by well-known artists like Ansel Adams reached a unique visual appeal thanks to a process known as dodging and burning, a 20th-century method of selectively decreasing or increasing exposure in parts of a single shot while making prints in a darkroom. This was the earliest form of tone mapping.

Tone mapping is the process of taking these absolute brightness and color values and, instead of displaying them based on a fixed frame of reference, comparing them to nearby areas or pixels and adjusting them accordingly, so as to make it look like there's more effective contrast than the original source material provided. Much like Ansel Adams used dodging and burning to make Yosemite Valley look like a dream, modern post-processing algorithms work to make both live-action and animated cinema look as real as possible.
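To make the idea a little more concrete, here is a minimal sketch of tone mapping in Python. Note that it does not do the neighborhood comparison described above; it uses a simpler global operator (the extended Reinhard curve) that compresses every value with the same formula. The function name, the `white_point` parameter, and the sample luminance values are all assumptions chosen for illustration.

```python
def reinhard_tone_map(luminance, white_point=4.0):
    """Compress a relative scene luminance (>= 0) into the 0.0-1.0 display range.

    Extended Reinhard operator: a luminance equal to white_point maps to
    exactly 1.0, and anything brighter is clipped to 1.0.
    """
    numerator = luminance * (1.0 + luminance / (white_point ** 2))
    return min(numerator / (1.0 + luminance), 1.0)


# Hypothetical scene luminances, from deep shadow up to a bright highlight.
scene = [0.0, 0.05, 0.5, 1.0, 2.0, 4.0]
display = [round(reinhard_tone_map(value), 3) for value in scene]
print(display)
```

Dark values pass through almost unchanged while bright values are squeezed together, which is how a wide range of scene brightness can fit into the narrower range a display can show.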

The first color film created for HDR photography was engineered through a contract with the US military, and by 1986, the first method of creating HDR video frames was introduced. In the mid-nineties, a system known as Global HDR was created that vaguely resembled the beginnings of what HDR refers to today. Granted, it was only a conceptual resemblance, but it did take into account luminance contrast across an entire video frame and tone map the result in order to create an image more vivid than the recording material could.

Try as they might (and it is a noble effort), engineers have yet to develop cameras or display panels that work as well as the human eye. LCD screens, for example, are often prone to light bleed, and although quantum dot filtration has mitigated that somewhat, it can be and is an issue with many panels. Plus, it's not just pixel cross-talk that's a problem; even some of the best LCD panels still allow the backlight to shine through, even in the best of cases.

Modern HDR implementation

Static contrast is a measurement of a panel's brightest brights versus its darkest blacks. It is an important factor in a display's appearance, but it's not the only one that makes a difference when it comes to HDR. Local dimming is another feature that is all but necessary for a panel to be truly capable of HDR output.

Before local dimming, a TV's entire backlight was fixed at a given brightness for every frame. Local dimming allows a panel to lower the brightness level of specific subsections, called dimming zones, when they're displaying blacks or particularly dark shades. In fact, it's safe to say that TVs without local dimming can't accurately claim to support HDR, and the truth is that many large panels with local dimming don't even have enough zones - or an effective enough dimming algorithm - for it to make a huge difference when displaying HDR content.
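A rough sketch shows why the number of dimming zones matters. The Python below is not any manufacturer's actual algorithm; it assumes the simplest possible rule, where each zone's backlight is driven to the brightest pixel it has to display. The function name and the tiny 4x4 "frame" are made up for illustration.

```python
def zone_backlight_levels(frame, zone_rows, zone_cols):
    """Return one backlight level (0.0-1.0) per dimming zone.

    frame is a list of rows of pixel luminance values in 0.0-1.0.
    Assumes the frame dimensions divide evenly into the zone grid.
    """
    height, width = len(frame), len(frame[0])
    zone_h, zone_w = height // zone_rows, width // zone_cols
    levels = []
    for zr in range(zone_rows):
        row_levels = []
        for zc in range(zone_cols):
            pixels = [
                frame[y][x]
                for y in range(zr * zone_h, (zr + 1) * zone_h)
                for x in range(zc * zone_w, (zc + 1) * zone_w)
            ]
            # Drive the zone as bright as its brightest pixel requires.
            row_levels.append(max(pixels))
        levels.append(row_levels)
    return levels


# Mostly black frame with one bright highlight in the top-right corner.
frame = [
    [0.0, 0.0, 0.0, 1.0],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.1, 0.0, 0.0],
]
print(zone_backlight_levels(frame, 1, 1))  # [[1.0]]
print(zone_backlight_levels(frame, 2, 2))  # [[0.0, 1.0], [0.1, 0.0]]
```

With a single zone, one highlight forces the whole backlight to full brightness, so the black areas glow gray. With four zones, three quarters of the screen can stay dark or nearly dark, and more zones shrink the bright region further.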

Brightness, of course, is another of the main things that makes high dynamic range possible, and it's one of the aspects that's held back HDR on quite a few TVs in the recent past. During the first several years of 4K television, a good set averaged just a few hundred nits in real-world testing. For SDR, or standard dynamic range content, 400 nits is a good benchmark for a quality display in a moderately lit room, while real-life readings of 650 nits and above make for good HDR highlights. It's important to mention here that this is in reference to actual testing, as the ratings quoted by manufacturers are often inflated by measurements taken under ideal conditions.

Another feature to be aware of is chroma subsampling. The eye is more sensitive to changes in brightness than to differences in color, so in order to reduce bandwidth requirements, many video signals carry less color data than brightness data. In many cases, subsampling of 4:2:2 is barely distinguishable from a full 4:4:4 output. With the trend towards more capable panels with wider color gamuts, though, this becomes less true.

The subsampling rate of 4:2:0 is particularly widespread because it was used on a vast range of DVD and Blu-ray products for years (and it's even part of the 4K Blu-ray specifications), but with increased panel technology, its substandard visual fidelity is more apparent than ever. It's particularly noticeable in applications where a crisp static image is of prime importance, for example, when a TV is used as a PC monitor for everyday use tasks, and especially when displaying text.
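The bandwidth savings behind these ratios are easy to work out. The sketch below follows the usual J:a:b reading of the notation, counting samples in a block J pixels wide and two rows tall; the function name is our own and is only there for illustration.

```python
def samples_per_block(j, a, b):
    """Count samples in a J-pixel-wide, 2-row block for a J:a:b scheme.

    a = chroma samples in the first row,
    b = additional chroma samples in the second row.
    """
    luma = 2 * j                  # every pixel keeps its brightness sample
    chroma_per_channel = a + b    # color samples shared across the block
    return luma + 2 * chroma_per_channel  # two chroma channels (Cb and Cr)


full = samples_per_block(4, 4, 4)
for scheme in [(4, 4, 4), (4, 2, 2), (4, 2, 0)]:
    ratio = samples_per_block(*scheme) / full
    print(scheme, f"{ratio:.0%}")
# (4, 4, 4) 100%
# (4, 2, 2) 67%
# (4, 2, 0) 50%
```

In other words, 4:2:0 carries only half the data of 4:4:4, which explains both why it is so widespread and why fine colored detail such as text suffers.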

As mentioned above, a wide color gamut is extremely important to HDR implementation, and 10-bit color depth is a powerful way to move towards a wide color space. An 8-bit panel can produce up to 16.7 million different colors through variations of 256 values each of red, green, and blue. 10-bit panels up that to 1,024 values per channel, resulting in a whopping 1.07 billion potential colors. Some manufacturers simulate 10-bit color by using an 8-bit panel with an algorithm that dithers between adjacent colors to approximate the in-between color that can't be expressed with only 8 bits of data. A true 10-bit panel is one of the basic requirements for high-end HDR capabilities.
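The arithmetic, and the dithering trick, can be sketched in a few lines of Python. The dithering shown here is temporal (alternating values over successive frames), which is only one of the ways a panel might do it; the function names and the four-frame cycle are assumptions for illustration, not a description of any particular panel.

```python
def color_count(bits_per_channel):
    """Total colors available with the given bit depth for each of R, G, and B."""
    levels = 2 ** bits_per_channel
    return levels ** 3


print(color_count(8))   # 16777216   (about 16.7 million)
print(color_count(10))  # 1073741824 (about 1.07 billion)


def temporal_dither(target_10bit):
    """Approximate a 10-bit level (0-1023) on an 8-bit panel over 4 frames.

    There are four 10-bit steps per 8-bit step, so the panel shows the next
    8-bit level up in `remainder` out of every 4 frames.
    """
    base, remainder = divmod(target_10bit, 4)
    return [min(base + 1, 255) if i < remainder else base for i in range(4)]


frames = temporal_dither(514)   # 514 / 4 = 128.5, between 8-bit levels 128 and 129
print(frames)                   # [129, 129, 128, 128]
print(sum(frames) / 4)          # 128.5
```

The eye averages the rapidly alternating frames into something close to the intended shade, which is why the result can look convincing without being a true 10-bit output.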

Mini-LED screens, which are still a bit away from hitting the shelves, are equipped with many small backlights that each service only a small number of pixels. In essence, Mini-LED technology is a highly refined form of local dimming. Possibly more ground-breaking, though, is microLED technology, which is pretty similar in concept to OLED panels. In it, each pixel is effectively its own tiny light source, which enables near-perfect black levels and impressive levels of contrast, supposedly without the drawback of low peak brightness that plagues many OLED panels. The major problem with microLED at the moment is that it's still quite far from becoming mainstream, and in fact there aren't currently any consumer products on the market that use it.

Current and future HDR standards

High dynamic range, in its simplest form, isn't regulated or enforced in any official way, but there are a number of protocols with specific requirements that keep the market moving at least in the right direction. HDR10 is the most basic of these, and every HDR display worth its salt supports it. This baseline specification calls for 10 bits of color depth, 4:2:0 chroma subsampling or better, and support for the Rec. 2020 color space. Generally speaking, most new TVs should support this standard, but aspects such as local dimming help determine just how effective a television's HDR10 efforts will be.

One game-changing protocol that's had a lasting effect on HDR adoption is known as Dolby Vision. First adopted in consumer panels by LG, Dolby Vision includes dynamic metadata that actively adjusts brightness and color across the entire screen on a scene-by-scene or even frame-by-frame basis. It's a significant upgrade to HDR10, but its adoption has been slowed by a lack of content. To utilize it, you'll need a source specifically designed to do so, and there just aren't that many movies yet released with the applicable metadata baked into their discs.

There's also HDR10+, which is similar to Dolby Vision in its use of dynamic metadata, but is an entirely different protocol developed as a competitor, and as such the two are not cross-compatible. You'll notice that high-end LG TVs all support Dolby's protocol, while Samsung's models all feature HDR10+ compatibility, because Samsung is the developer and license-holder of the technology. Other manufacturers have opted into both formats, though, which helps increase the volume of content that the average HDR-enabled high-end TV can handle.

The two aren't entirely dissimilar in their output, although Dolby Vision is specified for up to 12 bits of color depth, where HDR10+ builds on the 10 bits of HDR10. The nascent HDMI 2.1 protocol, meanwhile, has dynamic HDR metadata baked in (and the impressive bandwidth levels required to support it as well as 4:2:2 or better chroma sampling), which should only make the technology more widespread and accessible.

Then there's hybrid log gamma, or HLG, which is worth keeping in mind because it's the standard that broadcast media adheres to. Unlike HDR10+ and Dolby Vision, HLG doesn't use metadata, but combines SDR and HDR instructions into a single stream so it's compatible with all ranges of displays. This gives its stream the low bandwidth requirement that broadcast media requires while allowing properly equipped displays to enhance color and brightness as needed.
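The name hints at how that single stream works: the signal curve is a hybrid of a gamma-like square root for darker values and a logarithm for brighter ones. The Python below is a sketch of that curve as we understand it from ITU-R BT.2100; the constants are quoted from memory of that recommendation and should be checked against the spec before being relied upon, and the function name is our own.

```python
import math

# Constants as given for the HLG curve in ITU-R BT.2100 (verify against the spec).
A = 0.17883277
B = 1 - 4 * A
C = 0.5 - A * math.log(4 * A)


def hlg_oetf(e):
    """Map normalized scene light e (0.0-1.0) to an HLG signal value (0.0-1.0)."""
    if e <= 1 / 12:
        # Lower part: square-root (gamma-like) curve, similar to SDR video.
        return math.sqrt(3 * e)
    # Upper part: logarithmic curve that makes room for HDR highlights.
    return A * math.log(12 * e - B) + C


for light in [0.0, 1 / 12, 0.5, 1.0]:
    print(round(light, 4), round(hlg_oetf(light), 4))
```

The lower half of the signal range behaves much like a conventional SDR curve, so an older display can show a reasonable picture, while an HDR display interprets the logarithmic upper half as extra highlight detail.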

One major drawback of HLG is its inability to enhance black levels due to its lack of metadata. It should also be noted that although HLG has yet to be adopted on a large scale, quite a few modern TVs do claim to support it moving forward. But, wait, there's one more to mention. Technicolor collaborated with LG a few years ago to develop Advanced HDR, which may have a future somewhere, although we've yet to see any major content mastered for it.

You'll also find HDR advertised on gaming consoles, and given the right TV, it can make a significant difference in the gaming experience. This enhanced gaming feature will become even more prominent with the next generation of consoles. When it comes to PC gaming, though, things are more contentious. There are very few PC monitors available with effective HDR capabilities, due in part to generally low brightness levels and a significant lack of options with local dimming.

Furthermore, Windows 10 and its often finicky interactions with GPUs can throw a monkey wrench into the implementation of HDR across the board. Some gamers report a significant increase in visual fidelity, while others claim that many titles look noticeably worse with this supposedly high-end feature enabled. This may well be the result of the PC hardware market being far more fragmented than that of consoles; it's everyone's hope that upcoming GPUs will feature HDMI 2.1, and that PC monitors will get better or HDR TVs will come down significantly in price, as the combination of those factors would go a long way in making HDR a worthwhile consideration for more PC users.

Should I buy an HDR TV?

For starters, you'll have a hard time finding a TV that doesn't advertise HDR compatibility. Of course, for the reasons outlined above, this doesn't in any way tell the whole story. Edge-lit TVs and any that don't have a meaningful number of local dimming zones can claim they support HDR, but their dynamic range simply won't be enough to make it a worthwhile part of the experience.

One option is to look for VESA DisplayHDR certification. This is really more of a marketing campaign designed to sell more TVs, but it is also a good jumping-off point when looking for the right TV or monitor for your setup. The number that accompanies a product's certification (DisplayHDR 600, 1000, 1400, etc.) gives a rough estimate of the unit's peak brightness in nits in addition to specifications like bit depth and color gamut. The list of such officially approved displays is growing ever longer.

The truth is that despite the massive hype in the industry surrounding HDR, it isn't quite as powerful as it's made out to be. This is partially due to a lack of content, though, and similar to the adoption of 4K, once it takes off, it's likely there will be no looking back.

With that in mind, if you set out for the premium entertainment experience, HDR may ultimately be a prominent part of your decision-making process. If you're ready to make a big investment, and you know what features to look out for and what to expect, pulling the trigger on a high-end LCD or OLED could well prove a good decision. If you're on the fence at the moment and not afraid to upgrade in a year or two, you might consider waiting it out for Mini-LED or, better yet, microLED sets to hit the consumer space.

As of this moment, there are mixed results with HDR across the board, but the entire experience is getting better with just about every TV released, and the future is incredibly promising for this advanced technology. With the right lighting, constant increases in hardware capabilities, and sources properly mastered for HDR, this technology will undoubtedly be a serious feature to consider when looking at a new display for many years to come.