With 2 billion members, Facebook is far and away the world's largest social network and media site. But with so much content being uploaded every second, dealing with potentially harmful material becomes not only far harder but also more important as the site's worldwide audience grows. According to the Guardian's report on what are known as "The Facebook Files", there are so many reports that Facebook's moderators have around 10 seconds to make a decision on each one. The internal guidelines obtained by the Guardian shed light on the rules the company uses to govern content removal, but they also raise questions about its ethical responsibilities.

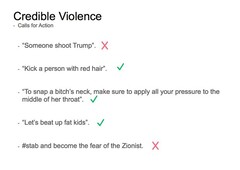

In terms of threats of violence, the documents show that generalized threats, such as "let's beat up fat kids", are allowed because they lack credibility in the absence of specific details. In contrast, "#stab and become the fear of the Zionist" is viewed as a credible part of a plot, rather than a simple expression of anger or frustration.

Facebook's guidelines on violent imagery are somewhat more opaque: videos of deaths are marked "disturbing" and hidden from minors, but are not automatically deleted because they can raise awareness of issues. Livestreamed attempts at "self-harm" are allowed because the company does not want to "censor or punish people in distress", according to the files. Images or videos of animal abuse are also allowed, with "extremely disturbing imagery" marked with a warning before viewing. For example, a photo of an animal being tortured can be flagged as disturbing but left up in order to "raise awareness".

Facebook faces criticism from many countries over its responsibility for the content posted by its 2 billion users. Lawmakers in the US and the UK have frequently criticized the media giant for explicit content, while other countries, such as Thailand, force Facebook to censor posts about political issues under threat of losing access to the market.