Centaur Technology, a major contributor to the MLPerf initiative, has introduced what it calls the industry's first server-class, high-performance SoC with an integrated AI co-processor. The SoC features eight x86 cores and an AI co-processor, codenamed NCORE, that is rated at 20 TOPS for AI inference applications.

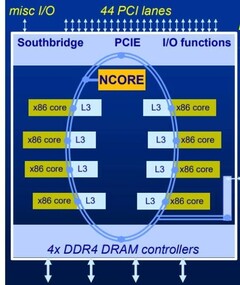

Full technical details of the Centaur processor have not yet been published, but Centaur has released some of them to gather feedback from customers and partners. According to Centaur's technical presentation, the reference platform is a 195 mm² part fabbed by TSMC on a 16 nm process and clocked at 2.5 GHz. The eight x86 cores share 16 MB of L3 cache, and the 20 TOPS AI co-processor is fed with 20 TB/s of memory bandwidth. The SoC also offers 44 PCIe lanes and four channels of DDR4-3200 RAM, and the CPU cores support AVX-512 instructions and are designed for high IPC.
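To put the memory figures in perspective, here is a rough back-of-the-envelope sketch of the theoretical peak bandwidth of four DDR4-3200 channels, compared with the 20 TB/s quoted for the AI co-processor. It assumes a standard 64-bit (8-byte) data path per channel, which Centaur has not confirmed for this part, and the function and constant names are purely illustrative.

```python
# Theoretical peak figures only; real-world bandwidth will be lower.

DDR4_TRANSFERS_PER_SEC = 3200e6   # DDR4-3200: 3200 mega-transfers per second
BYTES_PER_TRANSFER = 8            # assumption: 64-bit data path per channel
CHANNELS = 4                      # four channels, per the article
NCORE_BANDWIDTH_GBS = 20_000.0    # 20 TB/s quoted for the AI co-processor


def peak_dram_bandwidth_gbs(transfers_per_sec, bytes_per_transfer, channels):
    """Peak DRAM bandwidth in GB/s (decimal gigabytes)."""
    return transfers_per_sec * bytes_per_transfer * channels / 1e9


dram_gbs = peak_dram_bandwidth_gbs(
    DDR4_TRANSFERS_PER_SEC, BYTES_PER_TRANSFER, CHANNELS
)
ratio = NCORE_BANDWIDTH_GBS / dram_gbs

print(f"Peak DRAM bandwidth: {dram_gbs:.1f} GB/s")
print(f"Quoted co-processor bandwidth is about {ratio:.0f}x that figure")
```

Under these assumptions the four DDR4 channels top out at roughly 102 GB/s, around two orders of magnitude below the 20 TB/s figure, which suggests the latter describes on-chip memory rather than system RAM.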

Centaur said it has submitted audited preview scores to MLPerf to demonstrate the capabilities of this processor. According to the company, NCORE delivered inference performance equivalent to that of 23 high-end CPUs from "other x86 vendors", with the comparison including the Intel Xeon Platinum 8163, HanGuang 800, Intel Xeon Silver 4116, and Core i7-7820HQ, among others. It could classify an image in less than 330 microseconds. NCORE is also scalable and can be augmented with GPUs or other AI accelerators.
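The latency figure can be turned into a rough throughput estimate. The sketch below assumes images are classified one at a time, back to back, with no batching or pipelining; that is a simplification for illustration, not a description of how the MLPerf scores were measured.

```python
# Convert the quoted per-image latency into a lower bound on throughput,
# assuming strictly serial, single-image inference (an illustrative assumption).

LATENCY_SECONDS = 330e-6  # "less than 330 microseconds" per image


def images_per_second(latency_seconds):
    """Images classified per second if each takes latency_seconds, back to back."""
    return 1.0 / latency_seconds


throughput = images_per_second(LATENCY_SECONDS)
print(f"At least ~{throughput:.0f} images per second")
```

Because 330 microseconds is an upper bound on latency, the result of a little over 3,000 images per second is a lower bound under this serial model.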

Centaur may face competition from the likes of the Tachyum Prodigy processor, which aims to do away with the traditional heterogeneous system architecture (HSA). A prototype system based on Centaur's new SoC will be demonstrated at the International Security Conference and Exposition (ISC) East in New York on November 20 and 21. It remains to be seen if and how these technologies trickle down to consumer applications in the future.