Qualcomm has launched a new AI Hub for developers looking to run AI models on-device, including large language models (LLMs), on a number of its Snapdragon SoCs. All of the models have been tested and validated by Qualcomm, and they come in mobile, Windows on Arm and IoT-compatible versions. The selection includes Stable Diffusion, Whisper, ControlNet, Baichuan 7B, Llama 2 and LLaVA.

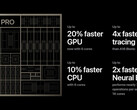

LLaVA is a large multimodal model (LMM) that accepts both text and visual user inputs and has over 7 billion parameters. It can therefore run on-device on the Snapdragon 8 Gen 3, which can handle models of up to 10 billion parameters, while the Snapdragon 8 Gen 2 tops out at models of up to 7 billion parameters. As a point of comparison, Google’s Gemini Nano comes in two variants, one with 1.8 billion parameters and the other with up to 3.6 billion parameters.
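The comparison above comes down to checking a model's parameter count against each chip's quoted ceiling. A minimal sketch of that check follows. The ceilings and the Gemini Nano sizes are the figures given in this article. The function name `socs_supporting` and the 7.5 billion figure are hypothetical, the latter used only as a stand-in for a model with "over 7 billion" parameters. Real-world support also depends on factors such as quantization and available memory, which are not modeled here.

```python
from typing import Dict, List

# Parameter ceilings quoted in the article, in billions of parameters.
SOC_MAX_PARAMS_B: Dict[str, float] = {
    "Snapdragon 8 Gen 3": 10.0,
    "Snapdragon 8 Gen 2": 7.0,
}


def socs_supporting(model_params_b: float) -> List[str]:
    """Return the SoCs whose quoted ceiling is at least the model size.

    Hypothetical helper for illustration; not part of any Qualcomm API.
    """
    return [
        soc
        for soc, limit_b in SOC_MAX_PARAMS_B.items()
        if model_params_b <= limit_b
    ]


if __name__ == "__main__":
    # Gemini Nano sizes are from the article; 7.5 is an assumed stand-in
    # for a model with "over 7 billion" parameters.
    examples = [
        ("Gemini Nano (smaller variant)", 1.8),
        ("Gemini Nano (larger variant)", 3.6),
        ("Model with over 7B parameters (assumed 7.5B)", 7.5),
    ]
    for name, size_b in examples:
        print(f"{name}: {socs_supporting(size_b)}")
```

Under these assumptions, a model just over the 7 billion mark is matched only by the Snapdragon 8 Gen 3, while both Gemini Nano variants fall within the ceilings of either chip.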

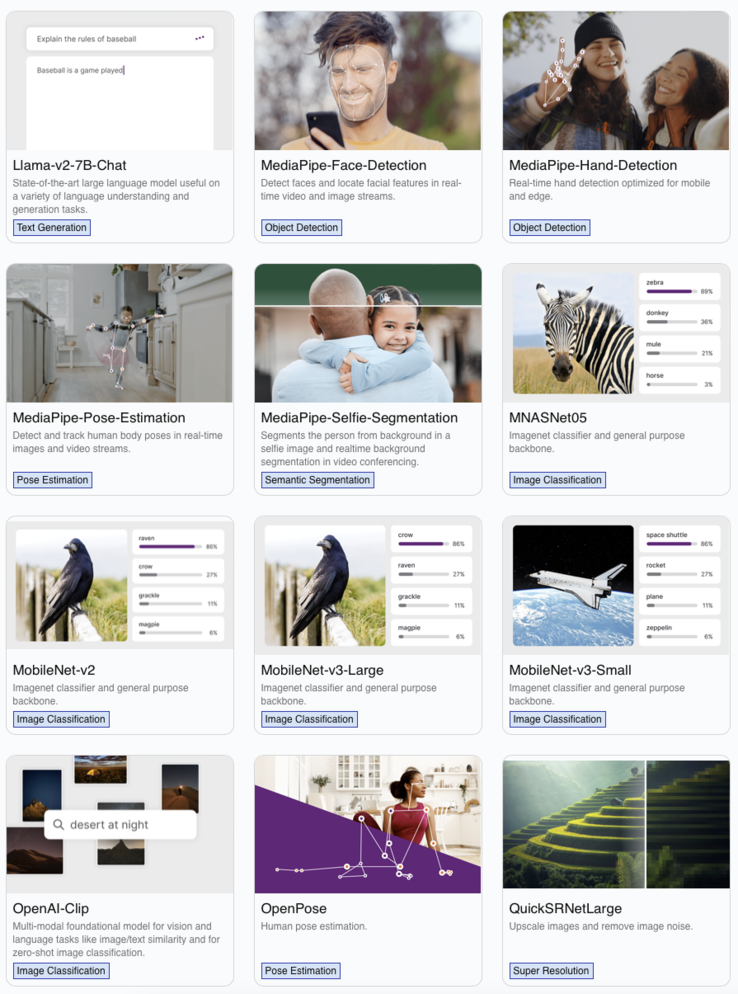

The Qualcomm AI Hub offers developers a range of tools for accelerating the development of AI applications based on the more than 75 AI models currently available on the platform. These include training programs, tutorials and documentation for optimizing apps that lean heavily on machine learning, deep learning or computer vision. With the generative AI revolution well under way and companies racing to incorporate the technology as quickly and as widely as possible, Qualcomm is wasting no time arming itself for the battle.
