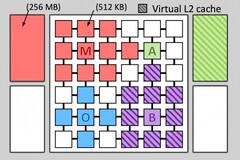

CPU makers these days try to improve performance by cramming as many cores as they can onto one chip. The tiered CPU memory caches are still there, but the technology behind them has not seen any major improvement in decades. These caches can significantly speed up applications by keeping frequently used data close to the cores. Even though today’s CPUs integrate up to four cache levels, none of these levels is particularly suited to a specific application. MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) has come up with a solution named Jenga that reallocates cache access on the fly in order to create “cache hierarchies” specifically tailored to each program.

Jenga includes a map of the physical locations of each cache memory bank, so it can calculate how to store data with reduced lag. The new cache system can efficiently redistribute physical memory resources to build application-specific hierarchies and maximize the performance for any particular application.
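To give a rough feel for how a map of bank locations can translate into lower lag, here is a minimal Python sketch. It is not Jenga's actual algorithm: the 6x6 grid with one bank per tile, the hop-count latency model, the greedy nearest-bank policy, and all names (`Bank`, `hops`, `allocate`, `mean_hops`) are assumptions made purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bank:
    """A cache bank at a grid position on the chip (hypothetical layout)."""
    x: int
    y: int


def hops(core, bank):
    """Assumed latency model: Manhattan distance between a core and a bank."""
    return abs(core[0] - bank.x) + abs(core[1] - bank.y)


def build_bank_map(width, height):
    """Build the 'map' of physical bank locations, one bank per tile."""
    return [Bank(x, y) for y in range(height) for x in range(width)]


def allocate(banks, requests):
    """Greedily hand each application the nearest banks that are still free.

    requests maps an app name to (core position, number of banks wanted).
    """
    free = set(banks)
    plan = {}
    for app, (core, count) in requests.items():
        # Sort by distance first, then by coordinates for a stable tie-break.
        nearest = sorted(free, key=lambda b: (hops(core, b), b.x, b.y))[:count]
        free.difference_update(nearest)
        plan[app] = nearest
    return plan


def mean_hops(core, banks):
    """Average distance from a core to the banks it was given."""
    return sum(hops(core, b) for b in banks) / len(banks)


if __name__ == "__main__":
    bank_map = build_bank_map(6, 6)  # 36 tiles, assumed one bank each
    requests = {"app_a": ((0, 0), 3), "app_b": ((5, 5), 3)}
    plan = allocate(bank_map, requests)
    for app, (core, _) in requests.items():
        print(app, plan[app], mean_hops(core, plan[app]))
```

In this toy model each application ends up with the banks closest to the core it runs on, so its average access distance stays small. A real system would also have to weigh bank capacity, contention, and how each program actually uses its data.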

MIT ran Jenga on a simulated 36-core CPU and the performance increase was up to 30%, while the CPU used up to 85% less power. This could greatly benefit mobile devices, such as notebooks and smartphones, where a low thermal design power (TDP) is very important.

Even though Jenga is still in its simulation phase, the technology could inspire CPU makers like Intel and Qualcomm to come up with similar systems as soon as they bump into the physical limits of the ever-shrinking manufacturing process.




Bogdan Solca, 2017-07-11 (Update: 2026-02-18)