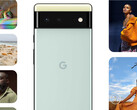

Google pioneered computational photography on smartphones, and it has delivered industry-leading still image quality. The company has continued to develop this area with its latest Pixel 6 and Pixel 6 Pro cameras and with what it calls inclusive camera technology. Google felt compelled to address what has been identified as inadvertent racial bias in smartphone cameras, which can make the skin tones of people of color look washed out.

The company believes that its latest computational photography techniques make the Pixel 6 and Pixel 6 Pro stand out in the crowded field of great smartphone cameras by capturing the skin tones of people of color much more accurately and naturally. According to Google, it ‘radically diversified’ the images used to train its face detector so that it detects “more diverse faces in a wider array of lighting conditions.” This has resulted in images that correct for the stray light that can wash out darker skin tones.

As you can see from the two sample images of the author embedded below the article, Google appears to have delivered on its promise. The left image was shot with the Pixel 6's 11.1 MP front-facing camera, while the right image was taken with the iPhone 13 Pro Max's 12 MP front-facing camera. The difference between the two photos is stark. Not only is the Pixel image free of the stray light that makes the iPhone image look washed out, but the Pixel also uses machine learning on its Tensor chip, together with motion metering, to sharpen the face.

Given that Apple has adopted racially inclusive policies in many areas, both internally and publicly, and actively promotes them, it will be very interesting to see how it responds. Will Apple be able to add this capability to the iPhone 13 series through a software update, or will users need to wait for the iPhone 14 series expected in October next year?

Source(s)

Own.