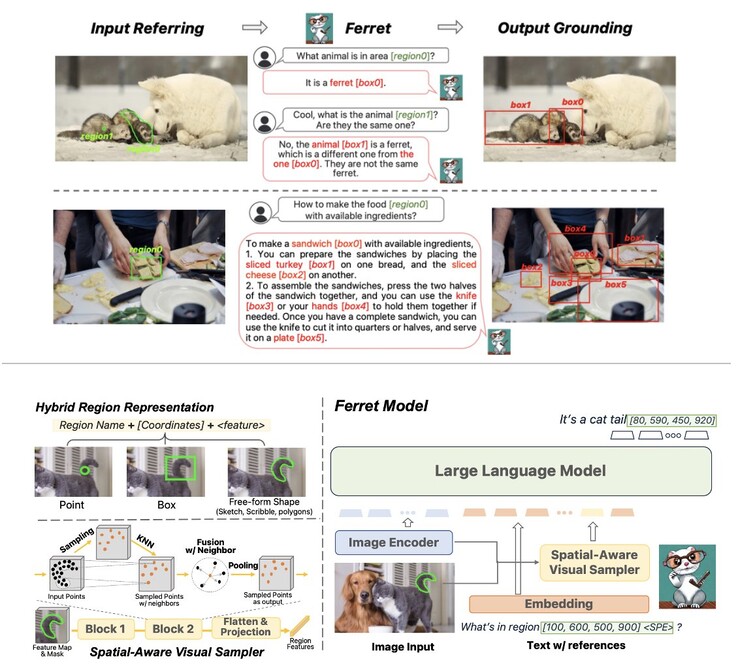

Apple has quietly released its first multimodal Large Language Model (LLM), which it has dubbed Ferret, as an open-source project. Apple AI researcher Zhe Gan introduced Ferret on X/Twitter in October, but the model went largely unnoticed until now. Gan and his colleagues at Apple developed Ferret together with researchers at Columbia University. According to Gan, Ferret is more precise than OpenAI's GPT-4 at understanding and describing small image regions, and it produces fewer hallucinations (errors).

Interestingly, Apple's GitHub repository reveals that the company trained Ferret on eight high-end Nvidia A100 GPUs, each equipped with 80GB of HBM2e memory. The A100 has been one of the most in-demand GPUs on the market since the explosion of generative AI that followed the launch of OpenAI's ChatGPT late last year. It delivers 312 teraFLOPS of dense FP16/BF16 Tensor Core performance (156 teraFLOPS at TensorFloat-32 precision), and the 80GB model Apple used to train Ferret offers memory bandwidth of up to 2,039 GB/s. The company does not, however, reveal what data it used to train the new model.

Apple is still in the relatively early stages of its generative AI journey with Ferret, but the eventual aim is likely to be getting a model like Ferret to run effectively on a smartphone. OpenAI's GPT-4 is thought to have in excess of 1 trillion parameters, whereas mobile phones can currently handle only LLMs with around 10 billion parameters. To that end, Apple researchers have also recently demonstrated a way to supplement a smartphone's RAM with its onboard flash storage, allowing larger models to run on-device than would otherwise fit in memory.