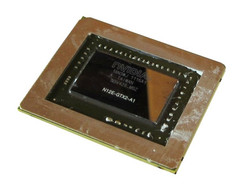

Review Nvidia GeForce GTX 580M Graphic Card

Gamers who want to enjoy the latest games in high resolutions and at maximum details need to dig deep into their wallets to pay for expensive high-end graphic cards. In the past few months, the Radeon HD 6970M and Nvidia's GeForce GTX 485M have fought fiercely for first place. Nvidia offers slightly better picture quality and higher performance, while AMD offers a very good price-to-performance ratio.

Of course, Nvidia does not intend to rest on its laurels just because it is in the lead. The release of the new GeForce GTX 580M is proof of this. The latest graphic card is not all that different from the older GeForce GTX 485M: the clock frequencies have been raised slightly and a few other upgrades have been made. To see what it is capable of, we ran the GeForce GTX 580M through our benchmark suite. In this review, we will reveal what new features the graphic card has to offer and how well it performs against its predecessor and, of course, against its AMD competition.

The Test System

We used the 15.6 inch Schenker XMG P501 to test out the GeForce GTX 580M. We also used this system for a Sandy-Bridge comparison. We could easily swap out the GeForce GTX 560M (see test) for the GeForce GTX 580M, thanks to the great design of the Clevo P150HM barebone.

Of course, we needed a high-end CPU for a high-end graphic card like this, so we used a fast quad-core Intel processor, namely the Core i7-2720QM (clock frequency: 2.2 to 3.3 GHz). The rest of the installed hardware was also good: 8 GB DDR3 RAM, a small 80 GB SSD and a big 750 GB HDD. Overall, great hardware for a serious GPU test.

Schenker recommended the use of the brand-new Nvidia ForceWare 275.50 graphic card driver for our test, which at the time was still in beta testing. In our test, the driver seemed to work well with the GeForce GTX 580M, and there were no serious problems, such as graphic errors or crashes during any of the normal benchmarks.

Test Configuration:

- Windows 7 Home Premium 64 Bit

- Intel HM65 Chipset

- Intel Core i7-2720QM

- GeForce GTX 580M (2048 MB GDDR5-VRAM)

- 15.6" Full-HD LED-Display (Glare)

- 8 GB DDR3-RAM (1333 MHz)

- Intel SSDSA2CW080G3 (80 GB)

- Seagate ST9750420AS (750 GB, 7200 rpm)

- Price: starting from 979 Euros (price may vary depending on the configuration of the device)

Links:

- News: Announcement - GeForce GTX 570M & GTX 580M

- Test: Schenker XMG P501 PRO

- Test: GeForce GTX 485M

- Test: Radeon HD 6970M

Technology

A closer look at the specifications reveals that the GeForce GTX 580M is a further development of the GeForce GTX 485M. Both graphic cards are built with the current 40 nm technology (graphic cards with 28 nm structures are expected in 2012). Both have 2048 MB of GDDR5 memory with a clock frequency of 1500 MHz and a 256-bit memory interface.
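The memory figures above can be turned into a theoretical peak bandwidth. The following is only a minimal sketch: the function name is hypothetical, and the factor of two transfers per quoted clock cycle is an assumption about how the 1500 MHz figure is specified, not something stated in this review.

```python
def peak_memory_bandwidth_gbs(clock_mhz: float, bus_width_bits: int,
                              transfers_per_clock: int = 2) -> float:
    """Theoretical peak memory bandwidth in GB/s (1 GB = 10**9 bytes).

    transfers_per_clock=2 is an ASSUMPTION about the quoted memory clock.
    """
    bytes_per_transfer = bus_width_bits / 8          # 256 bit -> 32 bytes
    transfers_per_second = clock_mhz * 1e6 * transfers_per_clock
    return transfers_per_second * bytes_per_transfer / 1e9


# Both cards share the same memory configuration (1500 MHz, 256 bit).
gtx_580m_bandwidth = peak_memory_bandwidth_gbs(1500, 256)
print(f"Theoretical peak bandwidth: {gtx_580m_bandwidth:.1f} GB/s")
```

Under that assumption the result is 96 GB/s for both the GTX 580M and the GTX 485M, which fits the picture that the two cards differ in core and shader clocks rather than in memory throughput.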

The main differences between the two graphic cards are the clock frequencies of the core and the shaders (both cards have 384 shader units). The GeForce GTX 580M runs at around 8% higher clock speeds than the GeForce GTX 485M: 620/1240 MHz versus 575/1150 MHz. Thanks to the power-optimized GF114 chip (GTX 485M: GF104 chip), the power usage of the 580M should remain in the same region as that of the 485M despite the higher performance.

Both graphic cards run at the same clock frequencies when idle. With no load on the system, the GeForce GTX 580M runs at a conservative 50/101/135 MHz (core/shader/memory, see screenshot). As soon as the computer starts processing a task, the clock speeds rise to 73/147/324 MHz. Under heavy load, the full clock speeds mentioned above were reached, and we did not note any GPU throttling during our stress test. We will take a look at the clock frequencies of the graphic card while playing HD material later on in this review.
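The clock advantage quoted above can be checked with a short calculation. This is a minimal sketch using only the clock values from this review; the helper name `percent_increase` is our own.

```python
def percent_increase(new: float, old: float) -> float:
    """Relative increase of `new` over `old`, in percent."""
    return (new - old) / old * 100.0


# Core and shader clocks in MHz, as quoted in the text.
CLOCKS_MHZ = {
    "GTX 485M": {"core": 575, "shader": 1150},
    "GTX 580M": {"core": 620, "shader": 1240},
}

for domain in ("core", "shader"):
    gain = percent_increase(CLOCKS_MHZ["GTX 580M"][domain],
                            CLOCKS_MHZ["GTX 485M"][domain])
    print(f"{domain}: +{gain:.1f}%")
```

Both domains come out at roughly +7.8%, which is where the rounded figure of 8% comes from.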

Features

Time to take a look at why Nvidia is the leading graphic card manufacturer in the world. The following are a few of the most important features offered by the Nvidia graphic cards:

• DirectX 11: Gamers absolutely need support for the latest DirectX version (Nvidia offers DX 11 support, which includes Shader Model 5.0). DirectX 11 is necessary as more and more games are being visually upgraded with modern effects (such as tessellation), either natively or via a patch.

• PhysX: Nvidia has delivered a unique feature for buyers with its PhysX technology. PhysX reduces the CPU load by running physics calculations on the graphic card. Sadly, few games use this feature to its full extent, but it is a nice extra.

• 3D Vision: A 120 Hz monitor and a 3D Vision Kit combined with a compatible Nvidia graphic card transform the mundane 2D images on the PC into a realistic 3D experience. 3D Vision requires the proper configuration before the user can really enjoy the effect and even then, as revealed in our test, there are a few problems. The technology drops the performance in most games by 50% and the shutter glasses, which can be annoying to wear for a long time, block a lot of the brightness of the display. Nevertheless, Nvidia is still in the lead compared to its AMD competition.

• Optimus: This revolutionary technology allowed notebook manufacturers to equip their laptops with automatic graphics switching. This means that when a program is launched the system will decide whether it should use the dedicated graphic card (higher performance, higher power consumption) or the integrated graphic chip (lower performance, lower power consumption). Thus, Optimus allows notebooks to strike a fine balance between energy usage and performance, and it is unfortunate that few high-end devices employ this technology.

• SLI: Two compatible graphic cards or more can be connected via a small interface to boost performance using the SLI technology. SLI is usually found in desktop computers, and rarely seen in notebooks. This is due to the various problematic issues raised by an SLI system, such as: high power consumption, price, cooling requirement and dependence on driver, not to mention the so-called micro-stutters.

• CUDA: CUDA, DirectCompute 2.1 and OpenCL are all technologies important for professionals, and as such of little interest to gamers. One practical use of this technology for consumers would be the faster encoding of HD videos.

• PureVideo HD: This allows the decoding of HD videos using the graphic card. Of course, this feature is available with the GeForce GTX 580M. The graphic card uses its VP4 video processor to decode formats such as H.264, VC-1/WMV9 as well as MPEG-1, -2 and -4 directly, thereby reducing the load on the CPU.

• Audio Bitstreaming: HD audio in the Dolby TrueHD and DTS-HD formats can be passed on as a bitstream to a suitable receiver using this feature - something for the home theater fans.

• HDMI 1.4a: Thanks to the latest HDMI interface, the user can deliver 7.1 surround sound and 3D material via the interface to external monitors or televisions.

Benchmarks

We decided to concentrate on benchmarks which stress the DirectX 11 features, as gamers will be primarily interested in these. The results below were recorded using the Clevo P150HM barebone (Schenker XMG P501 or Eurocom Racer) and were all obtained with the same or a similarly fast quad-core CPU. To view more details please see our graphic card benchmark comparison here.

3DMark 11

The GeForce GTX 580M took first place in the latest 3DMark benchmark (1280 x 720, Performance Preset) with a score of 3110. The Radeon HD 6970M scored about 9% less (2834 points) and the GeForce GTX 485M about 14% less (2668 points). The cheaper GeForce GTX 560M was the lowest with a score of 1812 points (-42%).
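Percentage gaps like these depend on which score is used as the baseline, so it helps to compute both directions. The sketch below uses only the 3DMark 11 scores quoted above; the two helper names are our own.

```python
# 3DMark 11 scores (1280 x 720, Performance Preset) as quoted in the text.
SCORES = {
    "GeForce GTX 580M": 3110,
    "Radeon HD 6970M": 2834,
    "GeForce GTX 485M": 2668,
    "GeForce GTX 560M": 1812,
}


def deficit_percent(score: float, reference: float) -> float:
    """How far `score` falls short of `reference`, relative to `reference`."""
    return (reference - score) / reference * 100.0


def lead_percent(reference: float, score: float) -> float:
    """How far `reference` is ahead of `score`, relative to `score`."""
    return (reference - score) / score * 100.0


top = SCORES["GeForce GTX 580M"]
for card, score in SCORES.items():
    if card == "GeForce GTX 580M":
        continue
    print(f"{card}: {deficit_percent(score, top):.1f}% below the GTX 580M, "
          f"GTX 580M leads by {lead_percent(top, score):.1f}%")
```

The same pair of scores therefore yields two different percentages: the GTX 485M, for instance, is about 14% below the GTX 580M, while the GTX 580M is about 17% ahead of the GTX 485M.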

Unigine Heaven 2.1

The extensive Unigine Heaven 2.1 benchmark (1280 x 1024, normal tessellation) suits the Nvidia graphic cards thanks to their stronger tessellation performance. While the Radeon HD 6970M scored 33.2 fps, the GeForce GTX 485M delivered 40.9 fps (+23%) and the GeForce GTX 580M gave an even better 44.3 fps (+33%). The GeForce GTX 560M is left in the dust with a score of 27.7 fps (37% behind the GeForce GTX 580M).

Gaming Performance

Most of the graphic and game benchmarks were run on the P150HM barebone. Unless otherwise noted, the GeForce GTX 485M was run on the Schenker XMG P501 (Core i7-2630QM or Core i7-2920XM) and the Radeon HD 6970M on the Eurocom Racer (Core i7-2720QM). High graphic settings were necessary to minimize the influence of the CPU.

Dirt 3

The beautiful racing game from the Codemasters studio ran fluidly on the GeForce GTX 580M. The integrated benchmark ran well at 44.2 fps (around one minute of snow racing) even with "Ultra" details and a 1920 x 1080 resolution. 44.2 fps is also the highest result we have measured so far using a single graphic card.

These settings cause the game to stutter on the GeForce GTX 560M (23.0 fps - which is half the score of the 580M). The Radeon HD 6970M was also at its limits. In our test with the Alienware M18x (Core i7-2920XM), the graphic card delivered 33.2 fps. We still lack a result for the GeForce GTX 485M.

Dirt 3:

| Resolution | Settings | Value |
|---|---|---|
| 1920x1080 | Ultra Preset, 4xAA, -AF | 44.2 fps |
| 1360x768 | High Preset, 2xAA, -AF | 126.4 fps |
| 1024x768 | Medium Preset, 0xAA, -AF | 143.9 fps |
| 800x600 | Ultra Low Preset, 0xAA, -AF | 198 fps |

Crysis 2

The first-person shooter Crysis 2 (tested here in the DirectX 9 version 1.2 rather than with the DirectX 11 update) really strains the GeForce GTX 580M. The opening scene of the game (submarine) did not run fluidly at a resolution of 1920 x 1080 pixels with the "Extreme" detail setting. The result of 34.1 fps is too low for a first-person shooter.

GeForce GTX 485M and Radeon HD 6970M were hit even harder: both graphic cards delivered around 30 fps in the test of the Schenker XMG U700 ULTRA (Core i7-990X). In comparison: the GeForce GTX 560M only managed 20 fps. The setting "Very High" allowed the GeForce GTX 580M to deliver a decent experience even in the Full-HD resolution.

Crysis 2:

| Resolution | Settings | Value |
|---|---|---|
| 1920x1080 | Extreme | 34.1 fps |
| 1366x768 | Very High | 100 fps |
| 1024x768 | High | 133.4 fps |
| 800x600 | High | 189.1 fps |

Call of Duty: Black Ops

The aging Modern Warfare engine was no problem for the GeForce GTX 580M. The user can comfortably raise all the graphic settings to maximum. The game ran at an excellent 87.3 fps with a resolution of 1920 x 1080 pixels, "Extra" details, 4x anti-aliasing and 8x anisotropic filtering. The GeForce GTX 560M lay behind with a result of 64.5 fps (around -25%).

The results of the GeForce GTX 485M (74.2 fps) and the Radeon HD 6970M (74.5 fps) are not directly comparable due to the activated fps limit (max. 85 frames per second). In the benchmark we ran through the starting scene where the player is caught in a gunfight with the Cuban police.

Call of Duty: Black Ops:

| Resolution | Settings | Value |
|---|---|---|
| 1920x1080 | extra, 4xAA, 8xAF | 87.3 fps |
| 1360x768 | high, 2xAA, 4xAF | 111.3 fps |
| 1024x768 | medium, 0xAA, 0xAF | 114 fps |
| 800x600 | low (all off), 0xAA, 0xAF | 147 fps |

Mafia 2

The high-end graphic card stays in the lead with an average score of 65.6 fps. The GeForce GTX 485M (59.2 fps) and the Radeon HD 6970M (55.9 fps) are still behind. The GeForce GTX 560M delivers slightly more than 40 fps which is around one-third less. Still, Mafia 2 manages to run fluidly on all cards.

Mafia 2:

| Resolution | Settings | Value |
|---|---|---|
| 1920x1080 | high, 0xAA, 16xAF | 65.6 fps |
| 1360x768 | high, 0xAA, 16xAF | 101 fps |
| 1024x768 | medium, 0xAA, 8xAF | 116.2 fps |
| 800x600 | low, 0xAA, 0xAF | 139.5 fps |

Starcraft 2

The demanding challenge mission, "For the Swarm", did not manage to bring the GeForce GTX 580M to its knees. The graphic card delivered a good 58.8 fps, and the Radeon HD 6970M and GeForce GTX 485M performed at a similar level with a score of 58.6 and 56.5 fps respectively. As usual, the GeForce GTX 560M is far behind with our test model delivering a mere 34.7 fps.

StarCraft 2:

| Resolution | Settings | Value |
|---|---|---|
| 1920x1080 | ultra | 58.8 fps |
| 1360x768 | high | 100.9 fps |
| 1360x768 | medium | 104.6 fps |
| 1024x768 | low | 265.3 fps |

Metro 2033

Metro 2033 was the only game besides Crysis 2 which caused problems for the graphic card at maximum settings. Very high details, DX 11 mode and a resolution of 1920 x 1080 are too much for the GeForce GTX 580M. Our three-minute benchmark sequence (start of the single player campaign) stuttered so badly that the aggressive mutants trying to maul us were hard to shoot.

Surprisingly, the AMD Radeon HD 6970M took the lead with a slightly higher score of 18.3 fps, whereas the GeForce GTX 580M landed second with 17.6 fps and the third place went to the GeForce GTX 485M with 16.1 fps. These high settings reduced the game to a slide show on the GeForce GTX 560M (10.9 fps). Users should not expect more than DX 10 mode and high details from the GeForce GTX 580M for this game.

Metro 2033:

| Resolution | Settings | Value |
|---|---|---|
| 1920x1080 | Very High DX11, AAA, 4xAF | 17.6 fps |
| 1600x900 | High DX10, AAA, 4xAF | 49.6 fps |
| 1360x768 | Normal DX10, AAA, 4xAF | 90.2 fps |
| 800x600 | Low DX9, AAA, 4xAF | 129.1 fps |

Battlefield: Bad Company 2

1920 x 1080 resolution, high details, 4x anti-aliasing, and 8x anisotropic filtering are all no problem for the GeForce GTX 580M which delivers a good 50.9 fps. Something the GeForce GTX 560M can only dream of with its result of 34.4 fps. The GeForce GTX 485M and the Radeon HD 6970M are hot on the heels of the GeForce GTX 580M: both graphic cards delivered 50 fps for the boat trip at the start of the single player campaign.

Battlefield: Bad Company 2:

| Resolution | Settings | Value |
|---|---|---|
| 1920x1080 | high, HBAO on, 4xAA, 8xAF | 50.9 fps |
| 1366x768 | high, HBAO on, 1xAA, 4xAF | 95.2 fps |
| 1366x768 | medium, HBAO off, 1xAA, 1xAF | 137.3 fps |
| 1024x768 | low, HBAO off, 1xAA, 1xAF | 171.2 fps |

Risen

A game from the role-playing genre is an absolute must for our graphic card test. Although Risen is easier on graphic cards, the user will still need a high-end card to be able to play the game at maximum details and a high resolution. The GeForce GTX 580M delivered on average a good 53.4 fps (Fraps) despite the high details, 4x anisotropic filtering, and a 1920 x 1080 pixel resolution.

While the GeForce GTX 485M keeps pace (52.7 fps), the Radeon HD 6970M lags behind (46.8 fps). The GeForce GTX 560M is once again last, and our beach sequence, which lasted a few minutes, took its toll on the card: 34.4 fps.

Risen:

| Resolution | Settings | Value |
|---|---|---|
| 1920x1080 | high/all on, 0xAA, 4xAF | 53.4 fps |
| 1366x768 | all on/high, 4xAF | 77.1 fps |
| 1024x768 | all on/med, 2xAF | 107.5 fps |
| 800x600 | all off/low, 0xAF | 147.3 fps |

Need for Speed: Shift

The GeForce GTX 580M (81.0 fps) shoots past the Radeon HD 6970M (64.6 fps) and the GeForce GTX 485M (70.7 fps) while the game is running the London circuit at high details, 4x anti-aliasing and 1920 x 1080 pixels. The performance of the GeForce GTX 560M (49.0 fps) does not even come close to the other three candidates.

Need for Speed Shift:

| Resolution | Settings | Value |
|---|---|---|
| 1920x1080 | all on/high, 4xAA, trilinearAF | 81 fps |
| 1366x768 | all on/high, 4xAA, trilinearAF | 118 fps |
| 1024x768 | all on/med, 2xAA, trilinearAF | 121 fps |

Benchmark Overview

As expected, the GeForce GTX 580M fought tooth and nail to grab the highly coveted title of "highest performing mobile graphic card". Nvidia's new top model has more than enough power to fluidly run most of the latest games with high details and a Full-HD resolution on the monitor – including visual extras (anti-aliasing, anisotropic filtering, etc). Only extremely demanding games such as Metro 2033 or Crysis 2 can force the user to lower the settings so as to enjoy a fluid gaming experience.

Passionate gamers who absolutely want to play on a notebook should definitely take a look at the GeForce GTX 580M. Overall, the top model beats the GeForce GTX 485M by around 9% and the Radeon HD 6970M by about 13% (1920 x 1080, Ultra settings). The GeForce GTX 560M falls far behind, as the GeForce GTX 580M delivers around 59% more performance on average. To see the other game benchmarks which we ran but have not mentioned in this review, please look at this detailed game comparison list.

| Game (results in fps) | low | med. | high | ultra |
|---|---|---|---|---|
| Need for Speed Shift (2009) | | 121 | 118 | 81 |
| Resident Evil 5 (2009) | 161.6 | 114.1 | 90.6 | |
| Risen (2009) | 147.3 | 107.5 | 77.1 | 53.4 |
| CoD Modern Warfare 2 (2009) | 232.9 | 132.3 | 108.7 | 77.8 |
| Battlefield: Bad Company 2 (2010) | 171.2 | 137.3 | 95.2 | 50.9 |
| Metro 2033 (2010) | 129.1 | 90.2 | 49.6 | 17.6 |
| StarCraft 2 (2010) | 265.3 | 104.6 | 100.9 | 58.8 |
| Mafia 2 (2010) | 139.5 | 116.2 | 101 | 65.6 |
| Fifa 11 (2010) | 502 | 406.1 | 322.2 | 216.6 |
| Call of Duty: Black Ops (2010) | 147 | 114 | 111.3 | 87.3 |
| Crysis 2 (2011) | 189.1 | 133.4 | 100 | 34.1 |
| Dirt 3 (2011) | 198 | 143.9 | 126.4 | 44.2 |
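The review does not state how the overall performance rating is calculated. As a rough cross-check, the sketch below averages the per-game fps ratios for the six titles where the text quotes 1920 x 1080 results for all three cards on comparable terms. The averaging method (arithmetic mean of ratios) and the selection of games are our assumptions, so the outcome will not match the published 9% and 13% exactly, since those figures are based on more games.

```python
from statistics import mean

# fps at 1920 x 1080 as quoted in the text:
# (GTX 580M, GTX 485M, Radeon HD 6970M)
FPS_1080P = {
    "Mafia 2": (65.6, 59.2, 55.9),
    "StarCraft 2": (58.8, 56.5, 58.6),
    "Metro 2033": (17.6, 16.1, 18.3),
    "Battlefield: Bad Company 2": (50.9, 50.0, 50.0),
    "Risen": (53.4, 52.7, 46.8),
    "Need for Speed Shift": (81.0, 70.7, 64.6),
}


def average_lead_percent(results: dict, rival_index: int) -> float:
    """Mean lead of the GTX 580M (index 0) over the card at rival_index.

    ASSUMPTION: arithmetic mean of per-game fps ratios; the review's own
    rating method is not documented here.
    """
    ratios = [fps[0] / fps[rival_index] for fps in results.values()]
    return (mean(ratios) - 1.0) * 100.0


lead_over_485m = average_lead_percent(FPS_1080P, 1)
lead_over_6970m = average_lead_percent(FPS_1080P, 2)
print(f"GTX 580M vs. GTX 485M: +{lead_over_485m:.1f}%")
print(f"GTX 580M vs. HD 6970M: +{lead_over_6970m:.1f}%")
```

Call of Duty: Black Ops, Crysis 2 and Dirt 3 are left out on purpose, because the text notes an fps limit, a different test system or a missing result for at least one of the rival cards.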

Video Acceleration

The GeForce GTX 580M performs flawlessly while decoding HD videos. Regardless of the format (H.264, VC-1 or Flash), the clock frequency stays at the 2D standard of 73 (core), 147 (shader) and 324 MHz (memory). The processor had barely any work to do during this process, and the CPU load ranged between 0 and 1% - exemplary. Only Flash videos raised the CPU load to 5%.

Power Consumption

The high heat output, which requires an extensive cooling system, and the high power consumption are the biggest weaknesses of the GeForce GTX 580M. The high energy usage is especially noticeable when the graphic card is under load. For example, during our test with the XMG P501, the GeForce GTX 485M consumed between 125.1 and 180.2 W. Our measurements for the GeForce GTX 580M, on the other hand, ranged from 132.4 to 214.3 W.

Determining the maximum value was very difficult, as the notebook crashed abruptly after a short period of time during our stress test (Furmark & Prime). We notified Schenker about this problem. According to the manufacturer, it is still unclear why the power consumption is so much higher than that of the GTX 485M. For example, on the X7200 barebone the difference between the power consumption of the two cards was negligible.

We do not yet know why the notebook crashed during our stress test. It could be due to a bug in the P150HM barebone, a higher-than-expected temperature (the graphic card went above 90°C) or an insufficient power supply. The 180 W power adapter delivered with the notebook was pushed to its limits during this test and in the future, Schenker might employ the 220 W power adapter of the P170HM barebone (XMG P701). Back to the topic: while idle, the GeForce GTX 580M had relatively low consumption - between 27.3 and 34.4 W. This range is perfectly acceptable for a high-end notebook.
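To put the power adapter hypothesis into numbers, the peak load measurements can be compared with the adapter ratings mentioned above. This is a minimal sketch; it assumes that the measured values can be compared directly with the adapter's rated output, which the review does not confirm, and the helper name is our own.

```python
# Load power range in W, measured on the XMG P501 as quoted in the text.
LOAD_RANGE_W = {
    "GTX 485M": (125.1, 180.2),
    "GTX 580M": (132.4, 214.3),
}

ADAPTER_RATINGS_W = (180.0, 220.0)  # supplied adapter, P170HM adapter


def adapter_headroom_w(peak_draw_w: float, adapter_rating_w: float) -> float:
    """Remaining margin in W; a negative value means the rating is exceeded.

    ASSUMPTION: measured draw and rated adapter output are directly comparable.
    """
    return adapter_rating_w - peak_draw_w


for card, (_, peak) in LOAD_RANGE_W.items():
    for rating in ADAPTER_RATINGS_W:
        margin = adapter_headroom_w(peak, rating)
        print(f"{card} with {rating:.0f} W adapter: {margin:+.1f} W headroom")
```

With the GTX 580M the peak value is well above the 180 W rating but still below 220 W, which is consistent with the idea of switching to the larger adapter.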


Battery Life

Finally, we want to shed some light on the battery life. Of course, the battery life will vary from notebook to notebook, depending on each individual configuration, and as such our measurement from the Schenker XMG P501 is only meant as a point of reference. Long trips away from a power outlet are out of the question, as our 15-inch device does not have graphics switching technology and the 8-cell battery (76.96 Wh) is potent but not strong enough.

Minimum display brightness and maximum energy-saving options allowed the notebook to run for two hours and 52 minutes (Reader's Test by Battery Eater). Setting the brightness to maximum and using moderate energy-saving options reduces the battery life significantly. Wireless surfing using WLAN was possible for two hours and 14 minutes. We could also watch a movie for around 2 hours and 4 minutes.

Under heavy load (Classic Test by Battery Eater), the XMG P501 lasted longer than most classic gaming notebooks (with maximum brightness and deactivated energy saving): 1 hour and 38 minutes. Overall, the GeForce GTX 580M does not grant the user extensive mobility and would be better suited for stationary devices. Note: in our test with the GeForce GTX 560M the battery life of the 15 inch laptop was barely higher.
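The runtimes above can be converted into an approximate average power draw on battery. The sketch below assumes that the full nominal capacity of 76.96 Wh is available in every test, which is a simplification; the scenario labels and the helper name are our own.

```python
BATTERY_CAPACITY_WH = 76.96  # 8-cell battery of the Schenker XMG P501

# Runtimes in minutes, as quoted in the text.
RUNTIMES_MIN = {
    "Reader's Test (min. brightness)": 2 * 60 + 52,
    "WLAN surfing": 2 * 60 + 14,
    "Movie playback": 2 * 60 + 4,
    "Classic Test (load)": 1 * 60 + 38,
}


def average_draw_w(capacity_wh: float, runtime_min: float) -> float:
    """Average power draw in W, ASSUMING the whole nominal capacity is used."""
    return capacity_wh / (runtime_min / 60.0)


for scenario, minutes in RUNTIMES_MIN.items():
    draw = average_draw_w(BATTERY_CAPACITY_WH, minutes)
    print(f"{scenario}: about {draw:.1f} W")
```

This gives roughly 27 W for the Reader's Test and roughly 47 W for the Classic Test, which illustrates why even a large battery does not last long in this class of notebook.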

Verdict

What is left to say about the GeForce GTX 580M? Yes, this graphic card is without a doubt the new king of performance, and, yes, thanks to its various features and the better picture quality, the graphic card can distinguish itself from its main AMD competition.

The GeForce GTX 580M increases Nvidia's lead in performance but the high price might be a problem for some buyers. However, as can be seen from Intel's best CPUs (the Extreme series), supreme performance comes at a high price. This high-end graphic card is designed for gamers with thick wallets.

Potential buyers need to check a few points before making their purchase. Users will be confronted with a high and very distracting noise level, unless the manufacturer of the laptop comes up with an ingenious cooling system. The heat output of the card is also very high, and the cooling system will have to run at maximum to keep the graphic card temperatures from climbing too high.

Price-conscious gamers should look at other graphic solutions besides the GTX 580M. For example, Schenker offers the AMD Radeon HD 6970M with a very good price-to-performance ratio. The Nvidia GTX 580M will therefore probably only be found in special gaming notebooks which cost more than 2,000 Euros.

Performance Rating (1920 x 1080, Ultra):

- GTX 580M vs. GTX 485M = +9%

- GTX 580M vs. HD 6970M = +13%

- GTX 580M vs. GTX 560M = +59%

+ DirectX 11 Support

+ Many Features

- High Cooling Requirements

- Very Expensive