Performance and Scaling Overview of Intel HD Graphics 4000

When talking about graphics cards, most people immediately think of AMD or Nvidia - after all, the most popular and fastest models on the market come from these two companies. What many people do not know, however, is that by volume alone, integrated graphics solutions make up the majority of the market, which puts chip giant Intel at the top of the statistics.

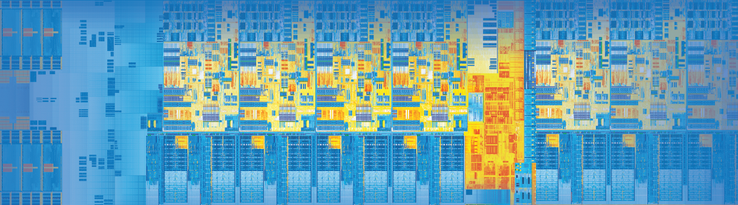

Intel's most modern and efficient IGP is the HD Graphics 4000, which is included in most processors of the Ivy Bridge generation. Even though Intel is still quite a bit behind AMD's APUs in the graphics sector, the HD 4000 is a big step forward, as was already shown in our benchmark article.

This review does not compare the HD 4000 with the competition; instead, it gives a general overview of the many different HD 4000 variants. Different clock rates, cache sizes and memory configurations have a considerable influence on performance - this review will show how much.

Overview

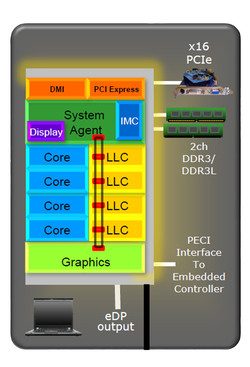

Every HD 4000 has 16 so-called Execution Units (EUs), whose base clock rate is either 350 MHz (ULV models) or 650 MHz. In 3D applications, the GPU can use an additional turbo boost, which raises the frequency by several hundred MHz. Although this turbo depends on the chip temperature and the power consumption, the GPU clock is usually almost constant - under a particularly high load, the CPU turbo is limited instead, if necessary. With the models currently available, the maximum GPU turbo is between 1000 MHz (Core i3-3110M) and 1350 MHz (Core i7-3940XM).

Though Intel has provided the graphics unit with its own L3 cache (256 KB), the HD 4000 can also use the large L3 cache of the CPU. Strictly speaking, the latter should be called a "Last Level Cache" (LLC); however, the more common name "L3 cache" is used in the rest of this article. It is either 3 or 4 MB on the dual-core models and 6 or 8 MB on the quad-core models and helps to reduce the rather slow accesses to main memory.

The memory interface has always been one of the biggest bottlenecks of any integrated graphics solution. While dedicated high-end GPUs normally have fast GDDR5 VRAM on an interface at least 256 bits wide, the HD 4000 has to make do with the significantly slower main memory. In plain language, this means a maximum of two 64-bit channels with an 800 MHz clock rate (DDR3-1600), which are shared between the GPU and the processor. Furthermore, some notebooks have only one memory module and/or slower modules, which further decreases the bandwidth.
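To put the memory configurations mentioned here into numbers, the following minimal sketch computes the theoretical peak bandwidth from the transfer rate and the channel count. The function name `peak_bandwidth_gbs` is purely illustrative, the nominal rates (1600 and 1333 MT/s) are used, and the result is an upper bound: the GPU has to share this bandwidth with the processor, so the usable figure is lower.

```python
def peak_bandwidth_gbs(transfer_rate_mts: int, channels: int,
                       bus_width_bits: int = 64) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 10**9 bytes).

    transfer_rate_mts: transfers per second in millions (DDR3-1600 -> 1600)
    channels:          number of populated memory channels
    bus_width_bits:    width of one channel (64 bits for DDR3)
    """
    bytes_per_transfer = bus_width_bits // 8
    return transfer_rate_mts * 1e6 * bytes_per_transfer * channels / 1e9


# Configurations discussed in this article (nominal transfer rates).
configs = {
    "DDR3-1600, dual channel": (1600, 2),
    "DDR3-1600, single channel": (1600, 1),
    "DDR3-1333, single channel": (1333, 1),
}

for name, (rate, channels) in configs.items():
    print(f"{name}: {peak_bandwidth_gbs(rate, channels):.1f} GB/s")
```

Under these assumptions, dual-channel DDR3-1600 comes to 25.6 GB/s, while a single DDR3-1333 module provides less than half of that.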

The Test System

For our assessments, the same test system was used as in our latest CPU article. The test device was the One M73-2N (MSI MS-1762 barebone), which, thanks to an efficient cooling system, allows the full turbo boost range to be used.

To keep loading stutter from distorting the measurements, a fast Samsung SSD with a capacity of 128 GB and 8 GB of DDR3 RAM were used. Only the bandwidth test was partly carried out with 4 GB, although this had no adverse effects on the performance in this case.

Test configuration:

- Intel Ivy-Bridge CPUs

- HD Graphics 4000 (8.15.10.2761)

- Intel HM77 chipset

- 2x 4 GB Samsung DDR3-1600 (CL11)

- 1x 4 GB Kingston DDR3-1333 (CL9)

- Samsung PM830 SSD (128 GB)

- 180-watt power supply unit

- Windows 7 Professional 64-bit

| Name | Cores/Threads | CPU clock rate | L3 cache | TDP | GPU clock rate |
|---|---|---|---|---|---|
| Core i7-3940XM | 4/8 | 3.0 - 3.9 GHz | 8 MB | 55 W | 650-1350 MHz |
| Core i7-3840QM | 4/8 | 2.8 - 3.8 GHz | 8 MB | 45 W | 650-1300 MHz |
| Core i7-3740QM | 4/8 | 2.7 - 3.7 GHz | 6 MB | 45 W | 650-1300 MHz |
| Core i7-3630QM | 4/8 | 2.4 - 3.4 GHz | 6 MB | 45 W | 650-1150 MHz |
| Core i7-3610QM | 4/8 | 2.3 - 3.3 GHz | 6 MB | 45 W | 650-1100 MHz |
| Core i5-3210M | 2/4 | 2.5 - 3.1 GHz | 3 MB | 35 W | 650-1100 MHz |

Benchmarks

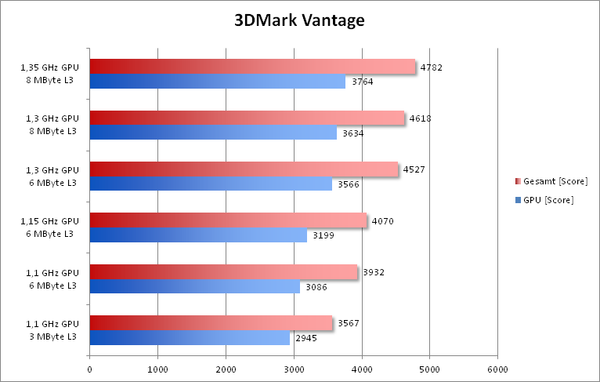

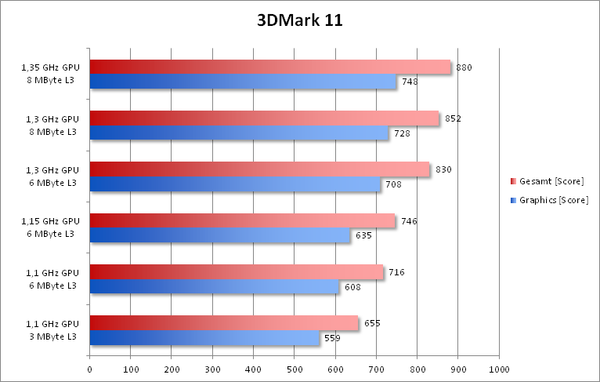

Due to the nature of the test, two undesirable factors could not be avoided in the two following benchmarks. First, the processor performance varies considerably between the CPUs; however, because the benchmarks are strongly GPU-limited, this should have only a secondary influence on the results. Second, different L3 clock rates could not be avoided, as they are linked to the processor clock. This source of error should not be very significant either.

The difference between the fastest and the slowest HD 4000 variant in 3DMark Vantage (GPU score) is 28%, about 5 percentage points more than the difference in maximum GPU clock rate alone (1100 vs. 1350 MHz, roughly 23%). The influence of the L3 cache size is therefore relatively small: there is a difference of about 5% between 3 and 6 MB. Another 2 MB increases the performance by only 2%.
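The comparison between the measured difference and the clock difference can be retraced with a few lines of arithmetic. This is only a rough sketch: it assumes that the fastest and slowest variants are the Core i7-3940XM and the Core i5-3210M and that both GPUs run at their maximum turbo clock throughout the benchmark; the variable names are illustrative.

```python
# Maximum GPU turbo clocks in MHz, taken from the table of tested CPUs.
max_gpu_clock_mhz = {
    "Core i7-3940XM": 1350,  # assumed fastest HD 4000 variant in the test
    "Core i5-3210M": 1100,   # assumed slowest HD 4000 variant in the test
}

# Measured difference in the 3DMark Vantage GPU score (from the text).
measured_gain = 0.28

# Relative clock advantage of the fastest over the slowest variant.
clock_gain = (max_gpu_clock_mhz["Core i7-3940XM"]
              / max_gpu_clock_mhz["Core i5-3210M"] - 1)

# Part of the measured difference that the clock rate alone does not explain.
residual_points = (measured_gain - clock_gain) * 100

print(f"Clock advantage:        {clock_gain:.1%}")
print(f"Measured difference:    {measured_gain:.1%}")
print(f"Not explained by clock: {residual_points:.1f} percentage points")
```

The remainder of about 5 percentage points is what is left for the larger L3 cache and the faster CPU to account for.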

3DMark 11, in contrast, is much more sensitive to the size of the cache. At just under 9% (3 vs. 6 MB) and 3% (6 vs. 8 MB) respectively, the L3 cache has a significant influence on the performance. It is also interesting that higher clock rates with the same cache size translate into higher performance almost linearly. This implies that the memory bandwidth - at least with DDR3-1600 in dual-channel mode - is hardly a limiting factor.

To verify the 3DMark results in a real 3D game, we played the action RPG Deus Ex: Human Revolution. It delivers very constant frame rates and is therefore ideal for our benchmarks, which need to measure differences of only a few percent.

By and large, the results resemble those of 3DMark 11. The step from a 3 MB to a 6 MB L3 cache and the clock rate, in particular, influence the performance.

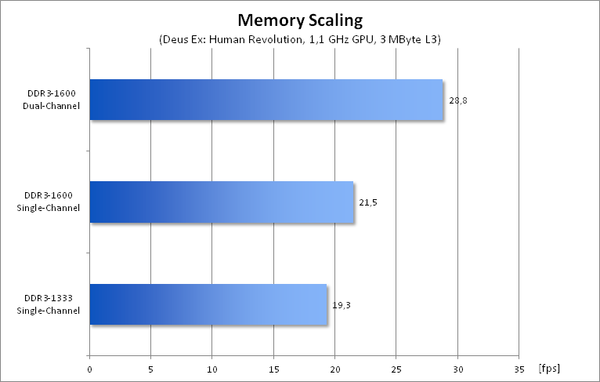

All measurements up to this point were carried out with the fastest possible memory configuration - but what if the notebook comes with less ideal equipment? Cheap notebooks often have only one memory module or slow DDR3-1333. We examined these two cases with the slowest model in our test, the Core i5-3210M.

The losses in performance caused by a lower memory bandwidth are quite drastic: while Deus Ex with DDR3-1600 in dual channel mode still runs quite smoothly at 29 fps, the framerate with DDR3-1333 in single channel mode drops to only 19 fps.

The 20% higher bandwidth of DDR3-1600 compared with DDR3-1333 (both single-channel) results in a good 11% higher performance. A second memory module leads to a 34% performance increase.
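How much of the additional bandwidth actually arrives as performance can be estimated from these figures. The sketch below is only a back-of-the-envelope calculation: `scaling_efficiency` is an illustrative helper, and it is assumed that the 34% gain refers to the step from one to two DDR3-1600 modules, which doubles the theoretical bandwidth.

```python
def scaling_efficiency(bandwidth_gain: float, performance_gain: float) -> float:
    """Fraction of a relative bandwidth gain that shows up as performance."""
    return performance_gain / bandwidth_gain


# (relative bandwidth gain, relative performance gain from the text)
steps = {
    "DDR3-1333 -> DDR3-1600 (single channel)": (1600 / 1333 - 1, 0.11),
    # Assumption: the second module is added to a DDR3-1600 configuration.
    "single -> dual channel (DDR3-1600)": (1.00, 0.34),
}

for name, (bandwidth_gain, performance_gain) in steps.items():
    efficiency = scaling_efficiency(bandwidth_gain, performance_gain)
    print(f"{name}: +{bandwidth_gain:.0%} bandwidth, "
          f"+{performance_gain:.0%} performance, efficiency {efficiency:.2f}")

# Plausibility check against the frame rates quoted for Deus Ex (19 and 29 fps).
combined = (1 + 0.11) * (1 + 0.34) - 1
measured = 29 / 19 - 1
print(f"Combined gain from both steps: {combined:.0%}")
print(f"Gain from the rounded frame rates: {measured:.0%}")
```

With these numbers, roughly half of the extra bandwidth from faster modules and about a third of the extra bandwidth from the second channel turn into higher frame rates. The small gap between the combined percentage and the value derived from 19 and 29 fps is consistent with the frame rates being rounded to whole numbers.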

Verdict

As our short test has shown, there is no general answer to the question of how fast the Intel HD Graphics 4000 is.

The memory interface has the greatest influence on the performance. Maximum performance can only be achieved with a fast dual-channel configuration, which is not always possible with ultrabooks that have only one DIMM slot.

Less significant but still important are the effects of the clock rate and the L3 cache. Those who operate a Core i7-3840QM or 3940XM without a dedicated graphics card - which is rather rare - enjoy considerably higher 3D performance than with a Core i3 or Core i5 processor. The HD 4000 might even come close to a dedicated accelerator, even though the absolute level of performance remains very low.

We look forward to future developments, as AMD's "Kaveri" and Intel's "Haswell" promise another performance boost in the sector of integrated graphics solutions. The influence of additional caches and faster memory should be even greater with these chips.

We would like to conclude by thanking the company One, which provided the system for this test. The M73-2N can be configured and ordered from One.