2013 has not been an easy year for Nvidia's SoC business. The Tegra 4 was highly praised in advance, but it was delayed, and the first production units had issues with overheating and throttling. On top of that, unlike the competing Qualcomm Snapdragon 800, it did not have an integrated LTE modem. The result was modest sales and a drastic drop in revenue for the corresponding business segment. Even though the absolute performance of the Tegra 4 is still pretty good, it is about time for a successor, hopefully a more successful one.

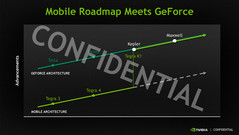

This successor, codenamed "Logan", will launch as the Tegra K1 (formerly Tegra 5). More technical information and a performance assessment can be found in our database.

Let's have a quick look at the main differences compared to the predecessor from last year:

| Designation | Tegra 4 ("Wayne") | Tegra K1 ("Logan") |
|---|---|---|
| CPU Architecture | Cortex-A15 ARMv7 | Cortex-A15 "r3" ARMv7 or Denver ARMv8 |
| Number of Cores | 4-plus-1 Quad-Core | 4-plus-1 Quad-Core or Dual-Core |
| CPU Clock | 1.8 up to 1.9 GHz | up to 2.3 GHz or up to 2.5 GHz |
| Shader Units | 48 Pixel + 24 Vertex Shader | 192 Unified Shader |
| GPU Clock | up to 672 MHz | up to 950 MHz (?) |
| Memory Support | DDR3L + LPDDR2/3 up to 1,866 MHz | DDR3L + LPDDR2/3 up to 2,133 MHz |
| Integrated Modem | no | no |
| Manufacturing Process | TSMC 28-nanometer HPL | TSMC 28-nanometer HPM |

Initially, Nvidia sticks with a quad-core chip plus an additional companion core (4-plus-1) that handles simple tasks and reduces energy consumption. The cores are still based on ARM's Cortex-A15 architecture, but they now use the improved r3 revision, which promises higher clocks of up to 2.3 GHz as well as better energy efficiency.

A second version of the Tegra K1 is planned to arrive in the second half of 2014. In this pin-compatible chip, the CPU cores are replaced with Nvidia's in-house Denver cores: instead of the 4+1 32-bit Cortex-A15 cores, it uses two 64-bit cores. So far we have almost no further information on these cores, as they were only announced yesterday at Nvidia's CES 2014 keynote.

| Cortex A15 | Denver |
|---|---|
| 4+1 Quad-Core + Companion Core | Dual Core |
| 32-Bit | 64-Bit |
| 3-way Superscalar | 7-way Superscalar |
| Up to 2.3 GHz | Up to 2.5 GHz |
| 32K+32K L1 | 128K+64K L1 |

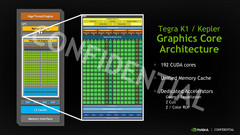

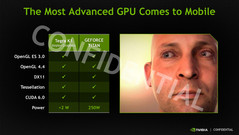

The GPU is a much bigger improvement. For the first time, a Tegra SoC uses a modern unified shader design, taken from the Kepler generation. The Tegra K1 has only 192 CUDA cores, roughly half the number found in current notebook GPUs like the GK208 and GK107, but that should still result in a significant performance bump for smartphones and tablets. According to Nvidia, a first reference tablet managed an impressive 60 fps in GFXBench 2.7 (T-Rex Offscreen). Even current high-end chips like Apple's A7 (27 fps) or Qualcomm's Snapdragon 800 (24 fps) cannot keep up with that.
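To put the quoted frame rates into perspective, the following minimal Python sketch computes the relative speed-up from the three GFXBench 2.7 (T-Rex Offscreen) figures mentioned above. The numbers are Nvidia's own claims rather than independent measurements, and the `FPS` table and the `speedup_over` helper are names made up for this illustration.

```python
# Frame rates quoted by Nvidia for GFXBench 2.7 (T-Rex Offscreen).
# These are vendor-supplied figures, not independent measurements.
FPS = {
    "Tegra K1 (reference tablet)": 60,
    "Apple A7": 27,
    "Qualcomm Snapdragon 800": 24,
}


def speedup_over(baseline: str, candidate: str = "Tegra K1 (reference tablet)") -> float:
    """Return how many times faster `candidate` is than `baseline` (hypothetical helper)."""
    return FPS[candidate] / FPS[baseline]


if __name__ == "__main__":
    for chip in ("Apple A7", "Qualcomm Snapdragon 800"):
        print(f"Tegra K1 vs {chip}: {speedup_over(chip):.2f}x")
```

If these figures hold up, the reference tablet would be a little over twice as fast as either competitor in this particular test.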

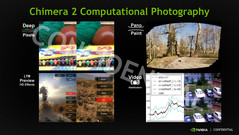

The extensive set of features is also impressive: OpenGL ES 3.0, OpenGL 4.4 and DirectX 11 are only a few of the new additions. The enormous performance can also be used for video and picture effects via GPGPU. Nvidia groups the latter under the term "Chimera 2" and demonstrated the real-time implementation of Tone Mapping (HDR photography).

That leaves the question of energy efficiency, which was one of the problems of the unsuccessful Tegra 4. Besides the new or heavily revised GPU and CPU architectures, the improved manufacturing process (TSMC 28-nanometer HPM) is supposed to reduce the energy consumption of the SoC. Nvidia expects the Tegra K1 to be able to compete with the Apple A7 and even beat the Snapdragon 800 in this regard. However, Qualcomm remains unrivaled on one point: even the new "Logan" does not have an integrated LTE modem.

It remains to be seen whether the impressive specifications can be confirmed in practice. There is still no news on when the first devices with the Tegra K1 will launch. Nvidia should not wait too long, because the competition never sleeps.

Source(s)

Nvidia Press Event